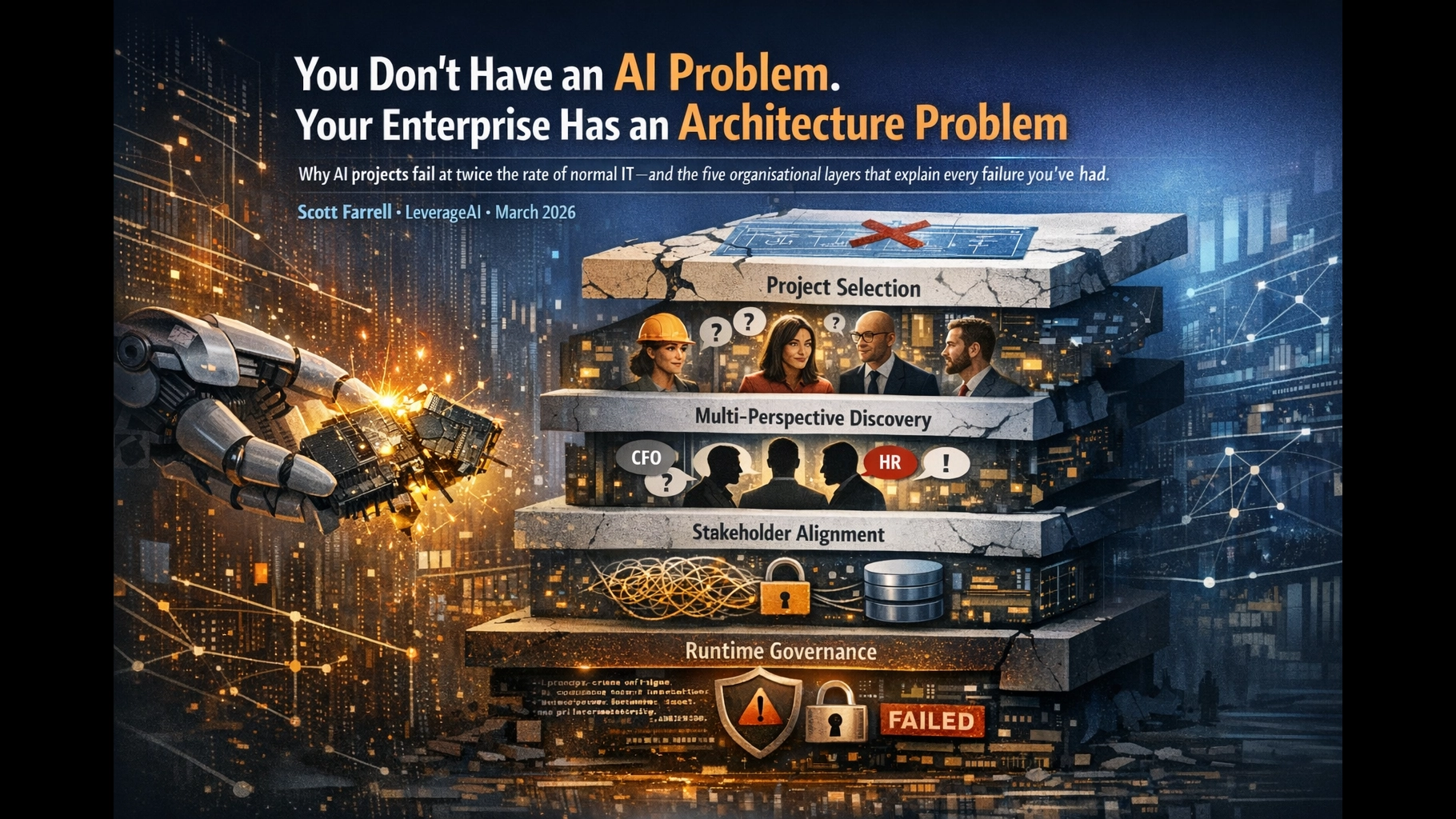

You Don’t Have an AI Problem. Your Enterprise Has an Architecture Problem.

Why AI projects fail at twice the rate of normal IT — and the five organisational layers that explain every failure you’ve had.

Scott Farrell · LeverageAI · March 2026

- AI projects fail at 80%+ rates — twice the rate of non-AI IT projects. The cause is organisational architecture, not model quality.

- Failure cascades across five layers: project selection, multi-perspective discovery, stakeholder alignment, cognitive architecture, and runtime governance. All five must be healthy simultaneously.

- Only 6% of organisations are AI high performers. They use the same models as everyone else. The difference is architecture.

The Most Expensive Misdiagnosis in Enterprise Technology

Your last AI project failed. You blamed the model. The vendor. The data. Maybe even the team.

You were wrong about all of it.

AI projects fail at more than double the rate of conventional IT projects [1]. The share of companies abandoning most of their AI pilots soared to 42% by the end of 2024, up from 17% the previous year [2]. With global AI spending projected to reach $630 billion by 2028 [3], failure at that rate puts hundreds of billions of dollars of investment at risk.

And it’s getting worse, not better, despite dramatically more capable models.

That should tell you something. If the technology keeps improving but the failure rate keeps climbing, the technology was never the problem.

RAND Corporation’s peer-reviewed research — 65 interviews across the AI industry — identified the single most common root cause of AI project failure. It wasn’t hallucinations. It wasn’t data quality. It wasn’t compute.

“Industry stakeholders often misunderstand — or miscommunicate — what problem needs to be solved using AI. Misunderstandings and miscommunications about the intent and purpose of the project are the most common reasons for AI project failure.”

Source: RAND Corporation, 2024 [1]

MIT’s 2025 GenAI Divide study confirms this from the other direction: 95% of enterprise generative AI pilots produce no measurable P&L impact. The study attributes the failure not to model quality but to “learning gaps” and workflow misalignment [4].

The gap between potential and reality isn’t a technology gap. It’s an architecture gap [5].

Not software architecture. Organisational architecture.

The Five Layers Where AI Actually Fails

Across 26 articles and 15 ebooks on enterprise AI deployment, dozens of client engagements, and research from McKinsey, RAND, BCG, MIT, and Deloitte, one pattern keeps recurring:

AI failure isn’t one problem. It’s five problems masquerading as one.

Failure cascades across five layers of organisational architecture, and all five must be healthy simultaneously. Fix one layer and you still fail at the next broken one. This is what separates the 6% of AI high performers [6] from the other 94%.

Layer 1: Project Selection

The Lane Doctrine

Most AI projects are doomed before a single line of code is written. Not because the technology can’t work, but because the wrong project was selected.

RAND’s research found that organisations frequently focus more on using the latest technology than on solving real problems for their intended users [1]. Leaders, swayed by hype, deploy AI for problems better suited to traditional methods or overestimate its readiness for complex tasks [7].

Here’s what we see repeatedly: the “simple” AI project (a customer chatbot) is actually the hardest. It’s real-time, customer-facing, high blast radius, and requires novel governance. Meanwhile, what seems “complex” (internal document processing, batch artefact generation) is actually where AI physics works in your favour.

We call this The Lane Doctrine: deploy AI where the physics supports it. Batch the brain. Ship the artefacts. Govern like software. Test a proposed project against our seven diagnostic questions, which probe factors such as latency constraints, blast radius, batch compatibility, and containment, and most of the “obvious” first choices score poorly. The first idea is rarely the right idea.

“Does your AI project require sub-second responses to customers, with mistakes that are public, regulated, or irreversible? If yes, you’ve selected a boss fight — the constraints stack multiplicatively against you.”
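The seven diagnostic questions aren’t spelled out in this article, so the sketch below covers only the factors the text names: latency, customer exposure, error consequences, batch compatibility, and containment. The multiplier values, the `ProjectProfile` fields, and the scoring rule are hypothetical; the point is to show constraints compounding multiplicatively rather than adding up.

```python
from dataclasses import dataclass

# Hypothetical multipliers: each constraint a project carries multiplies its
# difficulty, so constraints compound rather than add up. Values are illustrative.
MULTIPLIERS = {
    "sub_second_latency": 3.0,  # must respond in real time
    "customer_facing": 2.0,     # mistakes are seen by customers
    "high_consequence": 3.0,    # mistakes are public, regulated, or irreversible
    "not_batchable": 2.0,       # work can't be queued and reviewed in batches
    "not_containable": 2.0,     # no review step before output is released
}


@dataclass
class ProjectProfile:
    name: str
    sub_second_latency: bool
    customer_facing: bool
    high_consequence: bool
    batchable: bool
    containable: bool


def active_constraints(p: ProjectProfile) -> list[str]:
    flags = {
        "sub_second_latency": p.sub_second_latency,
        "customer_facing": p.customer_facing,
        "high_consequence": p.high_consequence,
        "not_batchable": not p.batchable,
        "not_containable": not p.containable,
    }
    return [name for name, applies in flags.items() if applies]


def difficulty(p: ProjectProfile) -> float:
    """Product of the multipliers for every constraint that applies (1.0 = none)."""
    score = 1.0
    for name in active_constraints(p):
        score *= MULTIPLIERS[name]
    return score


def is_boss_fight(p: ProjectProfile) -> bool:
    # Real-time, customer-facing, and high-consequence all at once.
    return p.sub_second_latency and p.customer_facing and p.high_consequence


chatbot = ProjectProfile("customer chatbot", True, True, True, False, False)
doc_batch = ProjectProfile("internal document processing", False, False, False, True, True)

for p in (chatbot, doc_batch):
    print(f"{p.name}: difficulty x{difficulty(p):g}, boss fight: {is_boss_fight(p)}")
# customer chatbot: difficulty x72, boss fight: True
# internal document processing: difficulty x1, boss fight: False
```

Under these made-up weights the “simple” chatbot comes out 72 times harder than the batch job; a real screen would swap in the full set of seven questions and calibrated weights.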

Layer 2: Multi-Perspective Discovery

AI Think Tank

Even when the right type of project is selected, the specific problem is usually defined by a single department.

Operations sees “automate customer intake = save 2,200 hours per month.” Revenue sees “$300K expansion revenue at risk from lost upsell calls.” HR sees “attrition risk from removing meaningful work.” Net result: lose $300K to save $200K.

This is The First Idea Trap: one department’s idea gets approved without debate from Revenue, Risk, or HR. When teams work in silos, they build proofs-of-concept that can’t be used in production: engineers do extra work to combine models, business users won’t use tools that don’t fit their workflows, and adoption stalls [8].

The fix isn’t a committee. It’s structured adversarial thinking. Our AI Think Tank process runs every proposal through four specialised lenses — Operations, Revenue, Risk, and People — with explicit rebuttals and visible rejections. The mark of a reasoning partner isn’t what it recommends. It’s whether it can show you what it didn’t recommend and why.
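A minimal sketch of a four-lens review, using the intake example above: Operations books the $200K saving, Revenue the $300K at risk, and People raises attrition risk without a dollar figure. The `LensView` and `Verdict` types and the acceptance rule (all four lenses present, non-negative net value, no unresolved objections) are hypothetical simplifications, not the AI Think Tank process itself; what the sketch shows is that a rejection keeps its reasons visible.

```python
from dataclasses import dataclass, field

REQUIRED_LENSES = ("Operations", "Revenue", "Risk", "People")


@dataclass
class LensView:
    lens: str
    value_usd: float                    # estimated impact; negative is a loss
    objections: list[str] = field(default_factory=list)


@dataclass
class Verdict:
    proposal: str
    net_value_usd: float
    accepted: bool
    reasons: list[str]                  # kept even when the proposal is rejected


def debate(proposal: str, views: list[LensView]) -> Verdict:
    present = {v.lens for v in views}
    reasons = [f"missing lens: {lens}" for lens in REQUIRED_LENSES if lens not in present]
    net = sum(v.value_usd for v in views)
    if net < 0:
        reasons.append(f"net value is negative ({net:+,.0f} USD)")
    for v in views:
        reasons.extend(f"{v.lens} objection: {o}" for o in v.objections)
    # Any reason on the list (missing lens, net loss, unresolved objection) blocks approval.
    return Verdict(proposal, net, accepted=not reasons, reasons=reasons)


intake = debate(
    "Automate customer intake",
    [
        LensView("Operations", 200_000),
        LensView("Revenue", -300_000, ["expansion revenue at risk from lost upsell calls"]),
        LensView("Risk", 0),
        LensView("People", 0, ["attrition risk from removing meaningful work"]),
    ],
)
print("accepted:", intake.accepted)
for reason in intake.reasons:
    print(" -", reason)
```

The sketch treats every objection as blocking; in a live debate, a lens could withdraw an objection after an explicit rebuttal, and that exchange would be recorded alongside the verdict.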

McKinsey’s data supports this: high performers are more than three times as likely to say their organisation intends to use AI for transformative change, driven by senior leaders who demonstrate strong ownership of and commitment to AI initiatives [6].

“Has your AI proposal been debated by a Revenue voice, a Risk voice, and an HR voice — not just the department that proposed it?”

Layer 3: Stakeholder Alignment

Three-Lens Framework · Enterprise AI Spectrum

You’ve selected the right project type. You’ve validated it through multiple lenses. Now you deploy — and it still dies.

Why? Because the CEO, CFO, and HR leader each have a different definition of success, a different tolerance for error, and no pre-negotiated agreement on either. The first visible mistake triggers what we call the one-error death spiral: the AI makes 15 mistakes out of 1,000 tasks (98.5% accuracy). An executive asks “How often does this happen?” No observability means no data. The project gets cancelled, even though its 1.5% error rate may well beat the 3.8% error rate of the humans doing the work.
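The arithmetic behind the death spiral is trivial; what’s missing is the telemetry to run it when the executive asks. A minimal sketch, assuming task and error counts come from production logging and that the error budget is a figure the CEO, CFO, and HR leader signed off in advance. The 3% budget below is a hypothetical placeholder, not a recommendation.

```python
from dataclasses import dataclass


@dataclass
class ErrorBudget:
    max_error_rate: float   # agreed in writing before deployment
    human_baseline: float   # measured error rate of the process being replaced


def review(errors: int, tasks: int, budget: ErrorBudget) -> dict:
    """Answer "how often does this happen?" from logged counts, not anecdotes."""
    if tasks <= 0:
        raise ValueError("no tasks logged: there is no observability to answer from")
    rate = errors / tasks
    return {
        "error_rate": rate,
        "accuracy": 1 - rate,
        "within_budget": rate <= budget.max_error_rate,
        "beats_human_baseline": rate < budget.human_baseline,
    }


budget = ErrorBudget(max_error_rate=0.03, human_baseline=0.038)
result = review(errors=15, tasks=1_000, budget=budget)
print(f"error rate {result['error_rate']:.1%}, accuracy {result['accuracy']:.1%}, "
      f"within budget: {result['within_budget']}, beats humans: {result['beats_human_baseline']}")
# error rate 1.5%, accuracy 98.5%, within budget: True, beats humans: True
```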

Technical success doesn’t predict organisational success [9]. The 12% of AI projects that succeed don’t have better technology. They have better organisational alignment [10].

This is compounded by maturity mismatch: deploying high-autonomy AI when organisational governance can’t support it. What organisations think is a “simple customer chatbot” (Level 2 complexity) is actually an autonomous customer interaction (Level 5–6 complexity). They have Level 1–2 governance maturity. No error budgets. No playbooks. No telemetry. The project was architecturally impossible before it launched.
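The mismatch can be made explicit as a pre-launch check. A minimal sketch, assuming autonomy and governance maturity are scored on the same numeric level scale and that governance must be at least as mature as the autonomy it supervises. The `Deployment` fields and that rule are hypothetical; the three missing capabilities (error budgets, playbooks, telemetry) come from the paragraph above.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    name: str
    autonomy_level: int      # real complexity on the spectrum, not the label it was sold under
    governance_level: int    # governance maturity the organisation can demonstrate today
    has_error_budgets: bool
    has_playbooks: bool
    has_telemetry: bool


def launch_blockers(d: Deployment) -> list[str]:
    """Return every reason the deployment can't be supported; an empty list means clear."""
    blockers = []
    if d.governance_level < d.autonomy_level:
        blockers.append(
            f"autonomy level {d.autonomy_level} outruns governance maturity level {d.governance_level}"
        )
    capabilities = {
        "error budgets": d.has_error_budgets,
        "playbooks": d.has_playbooks,
        "telemetry": d.has_telemetry,
    }
    blockers.extend(f"no {name}" for name, present in capabilities.items() if not present)
    return blockers


chatbot = Deployment("simple customer chatbot", autonomy_level=5, governance_level=2,
                     has_error_budgets=False, has_playbooks=False, has_telemetry=False)
for blocker in launch_blockers(chatbot):
    print(blocker)
```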

The data confirms this pattern: high performers are 2.8x more likely to fundamentally redesign their workflows (56% vs. 20%) and 3x more likely to have senior leaders demonstrating strong ownership of AI initiatives (48% vs. 16%) [11].

“Have your CEO, CFO, and HR leader agreed — in writing — on the acceptable error rate for this AI system before deployment? If not, the first mistake will kill the project politically, regardless of technical performance.”

Layer 4: Cognitive Architecture

The Cognition Supply Chain

You’ve selected well. You’ve validated through multiple lenses. Your stakeholders are aligned. You deploy — and the AI produces generic, underwhelming output. “It’s not good enough,” someone says. “We need a better model.”

You almost certainly don’t.

“As large language models improve, they also converge. GPT, Claude, Gemini, and their peers now offer broadly similar capabilities. Launching a product using a state-of-the-art model is no longer a competitive advantage. What remains valuable — and defensible — is context.”

Source: Moody’s Analytics, 2025 [12]

When your product taxonomy labels the same item three different ways across three systems, the model doesn’t resolve the inconsistency; it inherits it. The output sounds authoritative but creates liability [5]. The reported 73% failure rate of enterprise RAG deployments isn’t a model problem. It’s an architectural one [13].
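One way to catch the inheritance problem before it reaches the model is to group labels by a shared identifier and flag any item that carries more than one name. A minimal sketch, assuming the three systems share a product ID (in practice, matching records often needs entity resolution first); the system names, SKUs, labels, and canonical name are all made up.

```python
from collections import defaultdict

# Hypothetical extracts: (system, product_id, label). SKU-1042 is one item with three names.
RECORDS = [
    ("ERP", "SKU-1042", "Widget Pro 2"),
    ("CRM", "SKU-1042", "WidgetPro II"),
    ("Support", "SKU-1042", "Pro Widget (gen 2)"),
    ("ERP", "SKU-2001", "Bracket Kit"),
    ("CRM", "SKU-2001", "Bracket Kit"),
]


def label_conflicts(rows):
    """Map each product ID that has more than one distinct label to its labels per system."""
    by_id = defaultdict(dict)
    for system, product_id, label in rows:
        by_id[product_id][system] = label
    return {pid: labels for pid, labels in by_id.items() if len(set(labels.values())) > 1}


def canonicalise(rows, canonical_names):
    """Replace labels with one agreed name per ID so retrieval sees a single entity."""
    return [(system, pid, canonical_names.get(pid, label)) for system, pid, label in rows]


conflicts = label_conflicts(RECORDS)
print(conflicts)
clean = canonicalise(RECORDS, {"SKU-1042": "Widget Pro (2nd gen)"})
print(label_conflicts(clean))  # {} once every ID has a single name
```

Choosing the canonical name is a governance decision someone has to own; the code only makes the inconsistency visible.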

We call this The Cognition Supply Chain: a five-stage pipeline (Route, Explore, Judge, Compress, Compound) that converts raw organisational knowledge into domain-specific AI output. Most organisations are stuck at Level 2 of retrieval maturity: single-shot, chunk-level search, which typically produces output no better than asking ChatGPT directly.
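The five stage names come from the text, but what each stage does below is our own hypothetical reading, reduced to keyword matching so it runs anywhere. Route picks relevant sources, Explore queries each routed source once per question term (rather than one single-shot search), Judge keeps only well-supported passages, Compress fits them into a fixed context budget, and Compound stores the result for reuse. A real pipeline would use embeddings, a reranker, and a model for these steps; the sources and documents are invented.

```python
import re
from dataclasses import dataclass, field


def terms(text: str) -> list[str]:
    """Lower-cased words longer than three letters; a crude stand-in for query analysis."""
    return [w for w in re.findall(r"[a-z]+", text.lower()) if len(w) > 3]


@dataclass
class Source:
    name: str
    topics: set[str]
    documents: list[str]


@dataclass
class CognitionSupplyChain:
    sources: list[Source]
    min_support: int = 3          # Judge: question terms a passage must contain to be kept
    context_budget: int = 300     # Compress: max characters handed to the model
    memory: dict[str, str] = field(default_factory=dict)  # Compound: reusable briefs

    def route(self, question: str) -> list[Source]:
        wanted = set(terms(question))
        return [s for s in self.sources if s.topics & wanted]

    def explore(self, question: str, routed: list[Source]) -> list[str]:
        # Each term is its own query against each routed source, not one single-shot search.
        found: list[str] = []
        for source in routed:
            for term in terms(question):
                for doc in source.documents:
                    if term in doc.lower() and doc not in found:
                        found.append(doc)
        return found

    def judge(self, question: str, candidates: list[str]) -> list[str]:
        qs = terms(question)
        scored = [(sum(t in doc.lower() for t in qs), doc) for doc in candidates]
        kept = [(score, doc) for score, doc in scored if score >= self.min_support]
        return [doc for _, doc in sorted(kept, key=lambda pair: -pair[0])]

    def compress(self, evidence: list[str]) -> str:
        brief, used = [], 0
        for doc in evidence:
            if used + len(doc) > self.context_budget:
                break
            brief.append(doc)
            used += len(doc)
        return "\n".join(brief)

    def run(self, question: str) -> str:
        if question in self.memory:  # Compound: reuse earlier work
            return self.memory[question]
        routed = self.route(question)
        brief = self.compress(self.judge(question, self.explore(question, routed)))
        self.memory[question] = brief
        return brief


chain = CognitionSupplyChain([
    Source("policies", {"refund", "policy"}, [
        "Enterprise customers receive refunds within 30 days under policy P-7.",
        "Consumer refunds follow the standard retail policy.",
    ]),
    Source("crm", {"customers", "account"}, [
        "Enterprise customers are managed by named account leads.",
    ]),
    Source("marketing", {"campaign", "brand"}, ["Brand refresh campaign launches in Q3."]),
])
question = "What is our refund policy for enterprise customers?"
print([s.name for s in chain.route(question)])  # ['policies', 'crm']
print(chain.run(question))
```

Only the first policy passage survives Judge here: the other two each match just two of the question’s terms, which is the kind of near-miss a single-shot chunk search would happily pass to the model.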

Here’s a simple diagnostic: ask your AI about blue whales, then ask it about your organisation. If the blue whales answer is dramatically better, you don’t have a model problem. You have a context architecture problem.

“Does your AI know more about blue whales than about your business? If yes, the model is fine. Your cognition supply chain is missing.”

Layer 5: Runtime Governance

Compliance Cosplay · Decision Authority Infrastructure

The final layer — and the one where the gap between ambition and readiness is most acute.

Deloitte’s March 2026 State of AI report found that nearly three-quarters of organisations plan to deploy autonomous AI agents within the next couple of years, but only 21% report having the governance in place to support them [14]. That’s an ambition-to-readiness gap of roughly 3.5:1, on the layer where failure has the highest consequences.

Most AI governance today is what we call compliance cosplay — policies, dashboards, and audit logs that can explain a decision after the fact but cannot structurally prevent an unauthorised decision from executing before it occurs.

The test is simple: Can your system technically prevent an unauthorised AI decision from executing right now? Not “do you have a policy that says it should.” Can it block execution if authority, admissibility, or risk conditions aren’t met?
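The difference between a policy and an enforcement boundary is where the check sits: before the side effect, with the power to stop it. A minimal sketch of a pre-execution gate covering the three conditions named above (authority, admissibility, risk). The authority table, admissible-source list, risk threshold, and refund scenario are hypothetical; a real system would load them from a governed policy store rather than module constants.

```python
from dataclasses import dataclass
from typing import Callable


class Blocked(Exception):
    """Raised when a proposed decision fails a runtime condition; nothing has executed."""


@dataclass(frozen=True)
class ProposedDecision:
    actor: str                          # agent or workflow proposing the action
    action: str
    amount: float
    evidence_sources: tuple[str, ...]   # data the proposal relied on
    risk_score: float                   # 0.0 benign .. 1.0 severe, from an upstream assessment


# Hypothetical policy, hard-coded for the sketch.
AUTHORITY_LIMITS = {("refund-agent", "issue_refund"): 500.0}
ADMISSIBLE_SOURCES = {"order_db", "refund_policy"}
MAX_RISK = 0.3


def enforce(d: ProposedDecision) -> None:
    limit = AUTHORITY_LIMITS.get((d.actor, d.action))
    if limit is None or d.amount > limit:
        raise Blocked(f"authority: {d.actor} may not {d.action} {d.amount:.2f}")
    inadmissible = sorted(set(d.evidence_sources) - ADMISSIBLE_SOURCES)
    if inadmissible:
        raise Blocked(f"admissibility: relied on {inadmissible}")
    if d.risk_score > MAX_RISK:
        raise Blocked(f"risk: {d.risk_score} exceeds {MAX_RISK}")


def execute(d: ProposedDecision, effect: Callable[[ProposedDecision], str]) -> str:
    enforce(d)  # the gate runs first; if it raises Blocked, effect never runs
    return effect(d)


ledger: list[float] = []


def issue_refund(d: ProposedDecision) -> str:
    ledger.append(d.amount)  # the irreversible side effect
    return f"refunded {d.amount:.2f}"


print(execute(ProposedDecision("refund-agent", "issue_refund", 120.0, ("order_db",), 0.1), issue_refund))
try:
    execute(ProposedDecision("refund-agent", "issue_refund", 5_000.0, ("order_db",), 0.1), issue_refund)
except Blocked as err:
    print("blocked:", err)
print(ledger)  # [120.0]
```

An audit log written inside `issue_refund` would record the $5,000 refund after it happened; the gate makes sure it never happens.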

If the answer is no, you have governance theatre. With most EU AI Act obligations applying from August 2026, and fines of up to €15M or 3% of worldwide annual turnover for non-compliance, governance theatre is an increasingly expensive costume to wear.

Real governance is Decision Authority Infrastructure: a runtime system where models only suggest, and every suggestion hits an enforcement boundary that can prove what it relied on. Think of it as zero trust for decisions. Governance that runs with the decision, not after it.
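“Prove what it relied on” can be made concrete with content digests: at the boundary, record a hash of every piece of evidence behind the suggestion, so anyone can later check the record against the sources. A minimal sketch using Python’s standard `hashlib`; the `Suggestion` and `DecisionRecord` types and the credit example are hypothetical, and a production system would also sign records and keep them in tamper-evident storage, which this sketch omits.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


def digest(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


@dataclass
class Suggestion:
    """What the model produces: a proposed action plus the evidence it used. It cannot execute."""
    action: str
    evidence: dict[str, str]          # source id -> exact content relied on


@dataclass
class DecisionRecord:
    action: str
    allowed: bool
    evidence_digests: dict[str, str]  # source id -> SHA-256 of that content


def boundary(s: Suggestion, permitted_actions: set[str]) -> DecisionRecord:
    """Decide at the boundary and capture what the decision relied on, at decision time."""
    return DecisionRecord(
        action=s.action,
        allowed=s.action in permitted_actions,
        evidence_digests={src: digest(body) for src, body in sorted(s.evidence.items())},
    )


def verify(record: DecisionRecord, evidence: dict[str, str]) -> bool:
    """True only if the evidence presented now is byte-for-byte what was recorded."""
    return record.evidence_digests == {src: digest(body) for src, body in evidence.items()}


suggestion = Suggestion(
    action="approve_limit_increase",
    evidence={
        "policy:credit-v3": "Increases above 10,000 need two approvers.",
        "account:8841": "Tenure 6 years; no arrears.",
    },
)
record = boundary(suggestion, permitted_actions={"approve_limit_increase"})
print(json.dumps(asdict(record), indent=2))

altered = {**suggestion.evidence, "account:8841": "Tenure 6 years; two arrears."}
print(verify(record, suggestion.evidence), verify(record, altered))  # True False
```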

“Can your system technically block an unauthorised AI decision from executing right now — or can it only tell you about it after the fact?”

The Cascade: Why Fixing One Layer Doesn’t Work

These five layers aren’t a menu. They’re a dependency chain. Each layer assumes the previous one is functioning:

Fix Selection only → Great project type, but one department’s blind spot dooms it (Layer 2 failure)

Fix Selection + Discovery → Right problem, right debate, but autonomy outpaces governance maturity (Layer 3 failure)

Fix Layers 1–3 → Aligned team, appropriate ambition, but poor retrieval = generic output = eroded confidence (Layer 4 failure)

Fix Layers 1–4 → Excellent output, no runtime governance = first audit question destroys the programme (Layer 5 failure)

Governance without alignment is bureaucracy. Alignment without discovery is consensus on the wrong problem. Discovery without selection is a well-debated boss fight. Each layer compounds the last.

Organisations cannot skip maturity stages; each stage provides the foundation for the next [15]. This isn’t a theoretical position. It’s the pattern behind the failure statistics cited throughout this article.
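Because the layers form a dependency chain, the useful question is not “which layers are weak?” but “which is the lowest broken one?” A minimal sketch of that rule, assuming each layer’s health is reduced to a yes/no answer (for instance, the five diagnostic questions at the end of this article) and that an unanswered layer counts as broken; both assumptions are ours.

```python
from typing import Optional

# Dependency order from the cascade: each layer assumes the ones before it work.
LAYERS = ("Selection", "Discovery", "Alignment", "Cognition", "Governance")


def first_broken_layer(healthy: dict[str, bool]) -> Optional[str]:
    """Return the lowest layer that isn't healthy; missing answers count as broken."""
    for layer in LAYERS:
        if not healthy.get(layer, False):
            return layer
    return None


def investment_plan(healthy: dict[str, bool]) -> dict:
    """Fix the lowest broken layer and pause spending on everything that depends on it."""
    broken = first_broken_layer(healthy)
    if broken is None:
        return {"fix": None, "pause": []}
    return {"fix": broken, "pause": list(LAYERS[LAYERS.index(broken) + 1:])}


answers = {"Selection": True, "Discovery": True, "Alignment": False,
           "Cognition": True, "Governance": False}
print(investment_plan(answers))
# {'fix': 'Alignment', 'pause': ['Cognition', 'Governance']}
```

Cognition answers “healthy” here but is still paused: excellent output built on a misaligned programme still dies at Layer 3.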

What the 6% Do Differently

McKinsey’s 2025 State of AI survey found that only 6% of organisations are AI high performers, achieving 5% or more EBIT impact and reporting significant value [6]. BCG’s parallel study found the same pattern: only 5% generate substantial AI value, while 60% lag in developing critical AI capabilities [16].

The high performers use the same models as everyone else. What they do differently is architectural:

- They redesign workflows, not just automate them. High performers are 2.8x more likely to fundamentally redesign workflows (56% vs. 20%) [11].

- They have senior ownership. 48% have senior leaders demonstrating strong AI commitment, vs. 16% of others [11].

- They scale aggressively. High performers are 3x more likely to scale AI agents across functions [6].

- They invest more. 35% allocate over 20% of their digital budget to AI. They expect twice the revenue increase and 1.4x greater cost reductions [16].

- They treat governance as growth infrastructure. “For agentic AI, governance and growth go hand in hand” [17].

The compounding advantage is real, and it’s widening. BCG’s future-built companies show 1.7x higher revenue growth, 1.6x higher EBIT margin, and 3.6x greater three-year total shareholder return [16]. This gap isn’t closing.

The Diagnosis

You don’t need a better model. You don’t need a bigger budget. You don’t need more pilots.

You need to diagnose which layer of your organisational architecture is broken — and stop investing in layers above it.

Five questions. One for each layer. Answer them honestly:

- Selection: Does your AI project deploy where the physics supports it — batch, artefact-based, containable — or are you running a boss fight?

- Discovery: Has the proposal been challenged by Revenue, Risk, and HR — or did one department define it alone?

- Alignment: Have your CEO, CFO, and HR leader agreed on autonomy level, error budgets, and success metrics — before a line of code was written?

- Cognition: Does your AI know your business as well as it knows blue whales?

- Governance: Can your system block an unauthorised AI decision right now — or only tell you about it later?

If you answered “no” to any of these, you’ve found your broken layer. And now you know why your AI projects keep failing.

It was never about the AI.

It was always about the architecture.

Take the 10-Minute Readiness Assessment · Schedule a Discovery Call

References

- [1]RAND Corporation. “Root Causes of Failure for Artificial Intelligence Projects.” 2024. — “More than 80 percent of AI projects fail — twice the rate of failure for information technology projects that do not involve AI.” rand.org/pubs/research_reports/RRA2680-1.html

- [2]S&P Global. Enterprise AI Survey, 2025. — “The percentage of companies abandoning most of their A.I. pilot projects soared to 42 percent by the end of 2024, up from 17 percent the previous year.” Reported via CIO Dive and LinkedIn.

- [3]IDC. AI Spending Forecast, 2025. — “With global AI spending projected to reach $630 billion by 2028.” Reported via Quest Blog: blog.quest.com/the-hidden-ai-tax-why-theres-an-80-ai-project-failure-rate/

- [4]MIT NANDA Initiative. “The GenAI Divide: State of AI in Business 2025.” — “95% of enterprise generative AI pilots produce no measurable profit-and-loss impact.” mlq.ai/media/quarterly_decks/v0.1_State_of_AI_in_Business_2025_Report.pdf

- [5]Earley Information Science. “Why Enterprise AI Stalls.” 2025. — “The gap between potential and reality isn’t a technology gap. It’s an architecture gap.” earley.com/insights/iwhy-enterprise-ai-stalls-semantic-infrastructure

- [6]McKinsey & Company. “The State of AI in 2025: Agents, Innovation, and Transformation.” November 2025. — “AI high performers, representing about 6 percent of respondents, report pushing for transformative innovation via AI, redesigning workflows, scaling faster.” mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai

- [7]Timspark. “Why AI Projects Fail (95% in 2025).” — “Leaders, swayed by hype, often deploy AI for problems better suited to traditional methods.” timspark.com/blog/why-ai-projects-fail-artificial-intelligence-failures/

- [8]FutureAGI. “Top 6 Reasons Enterprise AI Projects Fail.” 2025. — “When teams work in silos, they don’t understand the requirements and create proofs-of-concept that can’t be used in production.” futureagi.com/blogs/reason-enterprise-ai-project-fail-2025

- [9]LinkedIn/JAIS. “AI Projects That Succeed Technically and Fail Organizationally.” — “Technical success doesn’t predict organizational success.” linkedin.com/pulse/ai-projects-succeed-technically-fail-organizationally-andre-r9wue

- [10]Rightpoint. “Escaping AI Pilot Purgatory.” 2025. — “The 12% of AI projects that succeed don’t have better technology. They have better organizational alignment.” rightpoint.com/thought/article/escaping-ai-pilot-purgatory

- [11]McKinsey & Company. “The State of AI in 2025.” — “High performers are 2.8x more likely to fundamentally redesign their workflows (56% vs. 20%). 48% of high performers have senior leaders demonstrating strong ownership, compared to 16% of others.” Via Tyler Jewell summary, LinkedIn.

- [12]Moody’s Analytics. “Beyond Prompts — Why Enterprise AI Demands Context Engineering.” 2025. — “Launching a product using a state-of-the-art model is no longer a competitive advantage. What remains valuable — and defensible — is context.” moodys.com/web/en/us/creditview/blog/beyond-prompts-why-enterprise-ai-demands-context-engineering.html

- [13]Medium/Codex. “14 Distinct Failure Patterns That Plague Production RAG Systems.” 2025. — “The 73% failure rate of enterprise RAG deployments isn’t a model problem — it’s an architectural one.” medium.com/codex/14-distinct-failure-patterns-that-plague-production-rag-systems

- [14]Deloitte. “State of AI in the Enterprise, 2026.” March 2026. — “Nearly three quarters of organizations plan to deploy autonomous agents within the next couple of years, but only 21% report having the proper governance in place.” deloitte.com/us/en/what-we-do/capabilities/applied-artificial-intelligence/content/state-of-ai-in-the-enterprise.html

- [15]Agility at Scale. “Enterprise AI Agent Maturity Model.” March 2026. — “Organizations cannot skip maturity stages. Each stage provides the foundation for the next.” agility-at-scale.com/ai/agents/enterprise-ai-agent-maturity-model/

- [16]BCG. “The Widening AI Value Gap — Build for the Future 2025.” — “Only 5% of companies get substantial value from AI. Future-built companies: 1.7x higher revenue growth, 1.6x higher EBIT margin, 3.6x greater three-year TSR.” media-publications.bcg.com/The-Widening-AI-Value-Gap-Sept-2025.pdf

- [17]Deloitte. “State of AI in the Enterprise, 2026.” — “For Agentic AI, governance and growth go hand in hand. Companies seeing the most success are taking a measured approach — starting with lower-risk use cases, building governance capabilities, and scaling deliberately.” deloitte.com/us/en/about/press-room/state-of-ai-report-2026.html
