The Governance Stack: Data Truth, Model Risk, and the Authority Layer Nobody Built

Most organisations have some data governance and emerging AI governance. Almost none have built the third layer — the one regulators will actually ask about.

TL;DR

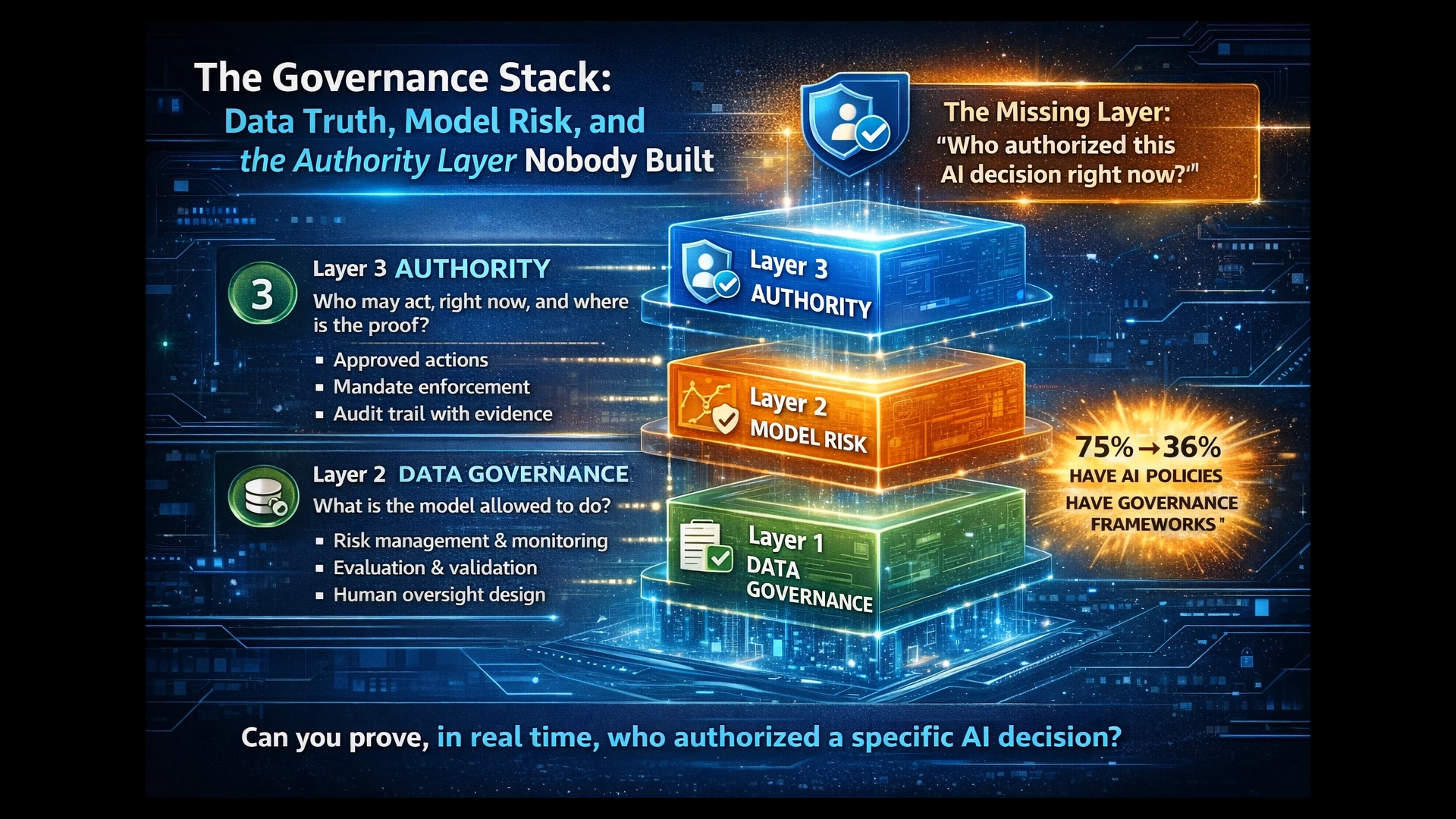

- Three layers required: Data governance (“what is true enough to use?”), AI governance (“what is the model allowed to do?”), and authority infrastructure (“who may act, right now, and where is the proof?”).

- The third layer is missing everywhere: 75% of organisations have AI policies, but only 36% have formal governance frameworks — and virtually none can prove who authorised a specific AI decision at the moment it was made.

- EU AI Act high-risk obligations apply from August 2026: The regulation requires the outcome (traceable, overseen, risk-managed decisions) but doesn’t prescribe the infrastructure. Organisations that haven’t built Layer 3 will discover the gap under audit.

The Accountability Moment

The question when something goes wrong with an AI decision is never “Was the model accurate?”

It’s: Who accepted this decision? Under what mandate? With whose authority? Where is the evidence that this was consciously exercised?

This is the accountability moment — the instant a regulator, auditor, board member, or affected customer demands proof that a decision was governed, not just generated. And for most organisations deploying AI in regulated industries, this moment will expose a structural gap they haven’t named yet.

The gap isn’t a lack of effort. It’s a missing layer.

Most enterprises have invested in data governance. Many are building AI governance capabilities. But almost none have constructed the third layer — runtime authority infrastructure — that proves governance was exercised at the moment of decision, not reconstructed from logs after the fact.

That three-layer separation — data truth, model risk, and decision authority — is the minimum viable governance architecture for regulated AI. And understanding it changes how you assess your own readiness.

The Governance Gap Is Not What You Think

The numbers paint a picture of a field that has adopted the language of governance without building the machinery.

Pacific AI’s 2025 AI Governance Survey found that while three-quarters of organisations have established AI usage policies, only 36% have adopted a formal governance framework with the roles, controls, monitoring, and enforcement mechanisms that constitute actual governance.1 The gap between having a policy and having a framework is where risk accumulates.

It gets worse at the operational level. Only 48% of organisations monitor their production AI systems for accuracy, drift, and misuse — a figure that collapses to just 9% among smaller companies.2 And 70% report they haven’t reached optimised governance, which would include board-level oversight, automated monitoring, and regularly updated policies.3

The most telling statistic comes from IBM’s 2025 Cost of a Data Breach Report: among organisations that experienced AI-related breaches, 97% had no proper AI access controls in place.4 This isn’t a maturity problem. It’s a structural absence.

“Having a policy is an important first step, but the absence of a broader framework means many organizations lack consistent roles, controls, monitoring, and enforcement.”

— Pacific AI, 2025 AI Governance Survey

Deloitte’s 2026 State of AI in the Enterprise confirms the pattern at scale: 85% of enterprises plan agentic AI deployments, but only 21% have governance frameworks ready — a 4:1 ambition-to-readiness gap.5

What these numbers reveal is not that organisations are negligent. It’s that they’ve been building governance in two layers when three are required. They’ve invested in data quality and they’re investing in model oversight. What they haven’t built is the infrastructure that proves a decision was authorised before it executed.

The Three Layers

The governance architecture for regulated AI separates into three distinct layers. Each answers a different question. Each requires different capabilities. And each maps to different regulatory frameworks.

Layer 1: Data Truth

Data governance manages the raw materials: definitions, ownership, quality rules, lineage, provenance, privacy classification, and access control. It ensures that data entering any decision process is admissible, meaning traceable, accurate, properly scoped, and authorised for use.

This is the layer most organisations have invested in longest. Data quality programs, master data management, data catalogues, and lineage tracking are mature disciplines with decades of practice behind them.

Without Layer 1, AI systems become black boxes operating on unreliable inputs. As Atlan’s governance framework notes, “AI systems become ‘black boxes’ without reliable provenance mechanisms. Data lineage reveals data’s journey and transformations across systems.”6
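As a rough illustration of what "admissible" could mean in practice, the sketch below checks the four properties named above (traceable, accurate, properly scoped, authorised for use) before a dataset may feed a decision. The `DatasetRecord` fields, the 0.95 quality threshold, and the purpose labels are hypothetical and not taken from any particular catalogue product.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class DatasetRecord:
    """Hypothetical catalogue entry for a dataset feeding an AI decision."""
    name: str
    owner: Optional[str]              # accountable data owner, if assigned
    lineage: tuple[str, ...]          # upstream sources, oldest first
    quality_score: float              # 0.0 to 1.0, from quality rules (assumed scale)
    approved_purposes: frozenset[str] = field(default_factory=frozenset)


def admissibility_issues(record: DatasetRecord, purpose: str,
                         min_quality: float = 0.95) -> list[str]:
    """Return the reasons a dataset is NOT admissible for the given purpose."""
    issues = []
    if not record.lineage:
        issues.append("no lineage recorded (not traceable)")
    if record.owner is None:
        issues.append("no accountable owner")
    if record.quality_score < min_quality:
        issues.append("quality below threshold (accuracy unproven)")
    if purpose not in record.approved_purposes:
        issues.append(f"not authorised for purpose '{purpose}'")
    return issues


loans = DatasetRecord("loans_2025", "credit-risk-team", ("core_banking",),
                      0.98, frozenset({"credit_scoring"}))
print(admissibility_issues(loans, "credit_scoring"))
print(admissibility_issues(loans, "marketing"))
```

An empty list means the dataset passed every check; anything else is a reason to stop before the model ever sees the data.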

Layer 2: Model Risk

AI governance addresses the model and system lifecycle: risk management (continuous, iterative, across the lifecycle), evaluation and validation, drift monitoring, incident response, human oversight design, robustness, and cybersecurity controls.

This layer is where most current investment is flowing. NIST AI RMF, ISO/IEC 42001, and the EU AI Act’s risk management requirements all operate primarily here. Financial services has the most mature version of Layer 2 through SR 11-7 model risk management.

Layer 2 answers questions about what the model should be doing. What it doesn’t answer is what the model is actually doing at the moment of a specific decision — and whether that action was authorised.
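Drift monitoring, one of the Layer 2 controls listed above, is often implemented with a distribution-shift statistic. The sketch below uses the Population Stability Index (PSI), a common choice in credit-risk practice. The article does not name a specific metric, so PSI, the example bin counts, and the 0.2 alert threshold (a widely quoted rule of thumb) are all assumptions.

```python
import math


def psi(expected_counts: list[int], actual_counts: list[int],
        eps: float = 1e-6) -> float:
    """Population Stability Index between two binned distributions."""
    if len(expected_counts) != len(actual_counts):
        raise ValueError("both distributions must use the same bins")
    e_total, a_total = sum(expected_counts), sum(actual_counts)
    if e_total == 0 or a_total == 0:
        raise ValueError("distributions must not be empty")
    total = 0.0
    for e, a in zip(expected_counts, actual_counts):
        e_pct = max(e / e_total, eps)   # floor avoids log(0) on empty bins
        a_pct = max(a / a_total, eps)
        total += (a_pct - e_pct) * math.log(a_pct / e_pct)
    return total


baseline = [120, 300, 380, 200]   # hypothetical score-band counts at validation
current = [60, 220, 400, 320]     # hypothetical counts observed in production
needs_review = psi(baseline, current) > 0.2   # assumed alert threshold
```

A check like this tells you the population has moved. It says nothing about whether any individual decision made on that population was authorised, which is the limit of Layer 2 described above.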

Layer 3: Authority

This is the missing layer. Authority infrastructure doesn’t just log decisions; it makes certain actions structurally impossible without decision-time authority. It proves who accepted a decision, under what mandate, with what evidence, at the moment of action.

When this layer is absent — and it is absent in the vast majority of organisations — accountability exists only retroactively. Evidence must be reconstructed. Responsibility becomes narrative. Trust erodes under pressure.

The EU AI Act’s Article 14 gets close by requiring “effective human oversight” for high-risk systems, but leaves the mechanism entirely to providers.7 Article 12 requires automatic logging — but logs are forensic artefacts, not governance mechanisms. They record what happened; they can’t prevent what shouldn’t happen.

Layer 3 is the shift from explaining decisions to constraining them.
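One way to picture "constraining rather than explaining" is a gate that refuses to run an action unless a valid mandate is presented at call time, and that writes its trace entry before the action executes. The sketch below is a minimal illustration under that assumption; `Mandate`, `AuthorityGate`, and the refund example are hypothetical names, not a reference design.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Any, Callable, Optional


class AuthorityError(Exception):
    """Raised when an action is attempted without valid authority."""


@dataclass(frozen=True)
class Mandate:
    """Hypothetical grant: who may authorise which action, and until when."""
    holder: str
    action: str
    expires_at: datetime


class AuthorityGate:
    def __init__(self) -> None:
        self.trace: list[dict[str, Any]] = []   # contemporaneous decision trace

    def execute(self, action: str, mandate: Optional[Mandate],
                fn: Callable[[], Any]) -> Any:
        now = datetime.now(timezone.utc)
        allowed = (mandate is not None and mandate.action == action
                   and now < mandate.expires_at)
        # The trace entry is written before the action runs, allowed or not.
        self.trace.append({
            "at": now.isoformat(),
            "action": action,
            "holder": mandate.holder if mandate else None,
            "outcome": "allowed" if allowed else "blocked",
        })
        if not allowed:
            raise AuthorityError(f"no valid mandate for '{action}'")
        return fn()


gate = AuthorityGate()
mandate = Mandate("j.smith", "approve_refund",
                  datetime.now(timezone.utc) + timedelta(hours=1))
result = gate.execute("approve_refund", mandate, lambda: "refund issued")
```

The design point is ordering: the authority check and the trace entry both happen before `fn()` is called, so there is no code path in which the action runs without a record of who held the mandate.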

Why Governance Has Gravity

There’s a structural reason the authority layer is missing. Traditional IT governance was designed for deterministic systems.

When software executes deterministically — code does what code does — you can do most governance upstream. Design reviews, testing, change approval processes, SDLC controls. The code won’t change its mind at runtime. So governance before deployment is governance at runtime.

AI breaks this assumption. AI systems execute probabilistically and contextually. The same model, given slightly different inputs or context, can produce meaningfully different decisions. Governance that lives only upstream — in policies, model cards, and design reviews — becomes theatre. The system can act before governance even wakes up.

“Most enterprises now have some form of AI governance framework, but few have fully operationalised it. In 2026, effective AI governance will look much more like an operating model — clearly defined boundaries for autonomous action, explicit escalation paths for human oversight, and transparent validation of AI models and decisions.”

— Redwood, AI and Automation Trends 2026

Governance has gravity — it gets pulled to wherever execution happens. When execution moves from deterministic code to probabilistic AI, governance must follow. If it doesn’t, you get a growing gap between where decisions are made and where governance actually operates.

That gap has a name: compliance cosplay. Governance that looks complete — policies exist, model cards are written, reviews are scheduled — but can’t prevent or prove anything at the moment of decision.

The Framework Map: What Covers What

The four major governance frameworks each contribute real value — but they cluster in Layers 1 and 2. Understanding where each one operates clarifies where the gap lies.

Regulatory Framework Coverage by Layer

| Framework | Layer 1: Data Truth | Layer 2: Model Risk | Layer 3: Authority |
|---|---|---|---|
| NIST AI RMF | Map function covers data context | Strong: Govern / Map / Measure / Manage lifecycle | Implied by Govern function; mechanism unspecified |
| ISO/IEC 42001 | Data quality in management system | Strong: formal AIMS with risk assessment, controls, continual improvement | Requires controls; doesn’t prescribe runtime enforcement |
| EU AI Act | Article 10: data governance requirements | Strong: Articles 9, 12, 15 (risk, logging, robustness) | Article 14 requires human oversight capability, but leaves mechanism to providers |
| SR 11-7 | Input data validation in model development | Very strong: 15 years of governance for probabilistic engines | Board-level governance mandate; enforcement left to institutions |

NIST AI RMF: The Shared Language

The NIST AI Risk Management Framework organises AI risk into four functions: Govern, Map, Measure, Manage.8 Govern is deliberately cross-cutting — it applies to all other functions. Map contextualises use cases. Measure quantifies risks. Manage operationalises responses.

NIST’s strength is providing a shared vocabulary for AI risk across the lifecycle. Its limitation is that it’s voluntary, principles-based, and doesn’t prescribe enforcement mechanisms. It tells you what to govern but not how to enforce governance at runtime.

Notably, in February 2026 NIST published a concept paper on “Accelerating the Adoption of Software and AI Agent Identity and Authorization” — explicitly asking: “How do we ensure non-repudiation for agent actions and binding back to human authorization?”9 The standards body is acknowledging the Layer 3 gap.

ISO/IEC 42001: The Management System

ISO/IEC 42001 is the first international standard for an AI Management System (AIMS). It “defines formal requirements for AI governance, including risk assessment mandates, control implementation, and lifecycle oversight” and “establishes a systematic, repeatable process for AI compliance.”10

Australia adopted it as an identical Australian Standard (AS ISO/IEC 42001:2023) in February 2024,11 and KPMG Australia became the first organisation to achieve certification in the country.12

ISO 42001 is excellent Layer 2 infrastructure. It mandates policies, objectives, risk assessments, and continual improvement. What it doesn’t prescribe is how decisions are constrained in real time — the management system ensures the organisation has governance; it doesn’t specify that governance prevents anything at the moment of action.

EU AI Act: The Regulatory Hammer

The most consequential deadline for most enterprises is 2 August 2026, when requirements for Annex III high-risk AI systems become enforceable.13

Article 9 mandates “a continuous iterative risk management process planned and run throughout the entire lifecycle of a high-risk AI system, requiring regular systematic review and updating.”14 This is squarely Layer 2.

Article 12 requires tamper-resistant automatic logging — capturing events that enable traceability and post-market monitoring.15 This is operational infrastructure, but logging is retrospective. It captures what happened. It cannot prevent what shouldn’t happen.
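Tamper resistance for logs is commonly approached by chaining entries with a cryptographic hash, so that altering any earlier record invalidates every later one. The sketch below shows that idea using Python's standard `hashlib`. It is an illustration only, not a statement of what Article 12 technically mandates, and the event fields are invented for the example.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, event: dict) -> str:
    # sort_keys makes the serialisation deterministic for a given event
    payload = json.dumps(event, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("utf-8") + payload).hexdigest()


def append_event(log: list[dict], event: dict) -> None:
    """Append an event, binding it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev_hash,
                "hash": _digest(prev_hash, event)})


def verify(log: list[dict]) -> bool:
    """Recompute the chain; any edited or reordered entry breaks it."""
    prev_hash = GENESIS
    for entry in log:
        expected = _digest(prev_hash, entry["event"])
        if entry["prev"] != prev_hash or entry["hash"] != expected:
            return False
        prev_hash = entry["hash"]
    return True


log: list[dict] = []
append_event(log, {"decision_id": "d-001", "outcome": "declined"})
append_event(log, {"decision_id": "d-002", "outcome": "approved"})
```

A chain like this makes tampering detectable after the fact, which supports traceability. It still cannot block an unauthorised action, which is the limitation the paragraph above describes.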

Article 14 is where the EU AI Act approaches Layer 3. It requires that high-risk AI systems “can be effectively overseen by natural persons during the period in which they are in use.”7 The oversight must allow the overseer to “understand its capabilities and limitations, detect and address issues, avoid over-reliance on the system, interpret its output, decide not to use it, or stop its operation.”7

The requirement is clear. The mechanism is absent. Article 14 defines the goal of human oversight but leaves the architecture entirely to providers. This is the Layer 3 gap in regulatory form: the mandate exists, the infrastructure doesn’t.

SR 11-7: The Financial Services Precedent

Financial services has been governing probabilistic engines since 2011. The Federal Reserve’s SR 11-7 guidance established that “the use of models invariably presents model risk, which is the potential for adverse consequences from decisions based on incorrect or misused model outputs.”16

SR 11-7’s validation framework rests on three elements: evaluation of conceptual soundness, ongoing monitoring to confirm the model performs as intended, and outcomes analysis comparing model outputs to actual results.16 A guiding principle throughout is “effective challenge” — critical analysis by objective, informed parties who can identify limitations and force changes.16

This is the most mature Layer 2 framework in existence. Financial institutions treat SR 11-7 as a baseline and layer additional supervisory expectations on top.17

But even SR 11-7’s maturity reveals the gap. Major banks now maintain “shadow human decisioning” — running AI systems in parallel with legacy human processes so both produce decisions, but only the human decisions execute.18 This is an institutional admission that AI explanations aren’t yet regulatory-grade. It’s a costly workaround for the absence of Layer 3.
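The shadow pattern can be sketched in a few lines: both paths produce a decision, only the human (or legacy) decision is returned for execution, and disagreements are recorded for later review. The function names and the loan example are hypothetical.

```python
from typing import Callable

Decision = str


def shadow_decide(case: dict,
                  human_decide: Callable[[dict], Decision],
                  ai_decide: Callable[[dict], Decision],
                  disagreements: list[dict]) -> Decision:
    """Run both deciders; only the human decision is returned for execution."""
    human = human_decide(case)
    try:
        shadow = ai_decide(case)      # evaluated for comparison, never executed
    except Exception as exc:          # a shadow failure must not block the live path
        shadow = f"error: {exc}"
    if shadow != human:
        disagreements.append({"case": case, "human": human, "ai": shadow})
    return human


review_queue: list[dict] = []
outcome = shadow_decide(
    {"applicant": "A-17", "amount": 25_000},
    human_decide=lambda c: "decline",
    ai_decide=lambda c: "approve",
    disagreements=review_queue,
)
```

The cost the article points to is visible in the signature: every case is decided twice, and the AI output never reaches execution.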

The Explainability Trap

A common assumption is that better explainability will close the governance gap. If we can just understand why the model decided what it decided, governance is satisfied.

This assumption is structurally flawed.

Research from Oxford AI Governance, Anthropic, and academic institutions confirms that chain-of-thought (CoT) reasoning — the visible “thinking” that models like DeepSeek R1 produce — is not a faithful representation of how the model actually reached its conclusion. Models fabricate plausible reasoning sequences post-hoc rather than revealing their actual decision process.19,20

One study found that while a model explicitly acknowledged harmful hints in 94.6% of cases, it reported less than 2% of helpful hints — demonstrating systematic unfaithfulness in self-reported reasoning.20

“In autonomous systems, safety-critical decisions might be justified post-hoc rather than revealing true failure modes. When professionals rely on these explanations to validate AI recommendations, unfaithful rationales can lead to misplaced trust and overlooked errors.”

— Oxford AI Governance Institute, 2025

The Bank for International Settlements (BIS) confirmed explainability as “the top issue discussed between regulators and firms in relation to AI” — with the black-box nature of algorithms as the primary concern.21

This is why asking a model to self-report its reasoning is vibes-based auditing, not governance. The fix isn’t better explanation. It’s structural constraint: forcing decisions through infrastructure that verifies authority, checks evidence admissibility, and produces a contemporaneous trace — not asking the model to narrate what it did after the fact.

The Agentic Acceleration

If the authority gap were only about individual AI model decisions, the urgency would be significant. But agentic AI — autonomous agents that take actions, use tools, and chain decisions without human intervention — is collapsing the timeline.

Gartner predicts 40% of enterprise applications will be integrated with task-specific AI agents by end of 2026, up from less than 5% in 2025.22 It also expects more than 40% of agentic AI projects to be cancelled by end of 2027 due to escalating costs, unclear business value, or inadequate risk controls.23

The identity and authorisation gap is acute. Gravitee’s 2026 State of AI Agent Security report found that only 14.4% of AI agents have full security approval. 60% of teams don’t treat agents as independent identities — most rely on shared API keys.24 Strata’s research found that only 28% of organisations can reliably trace agent actions back to a human sponsor, and 80% cannot tell in real time what agents are doing or who is responsible.25
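To make the tracing problem concrete, the sketch below gives each agent its own identity record and resolves any agent back to a named human sponsor by walking its delegation chain. The registry structure and all identifiers are hypothetical; a real deployment would typically rely on an identity provider rather than an in-memory dictionary.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AgentIdentity:
    agent_id: str
    sponsor: Optional[str] = None        # accountable human, if directly sponsored
    delegated_by: Optional[str] = None   # parent agent that spawned this one


def resolve_sponsor(agent_id: str,
                    registry: dict[str, AgentIdentity]) -> Optional[str]:
    """Walk the delegation chain until a human sponsor is found."""
    seen: set[str] = set()
    current = registry.get(agent_id)
    while current is not None and current.agent_id not in seen:
        if current.sponsor:
            return current.sponsor
        seen.add(current.agent_id)       # guards against delegation cycles
        parent = current.delegated_by
        current = registry.get(parent) if parent else None
    return None  # untraceable: unknown agent, missing sponsor, or broken chain


registry = {
    "planner": AgentIdentity("planner", sponsor="a.lee"),
    "worker": AgentIdentity("worker", delegated_by="planner"),
}
sponsor = resolve_sponsor("worker", registry)
```

With a shared API key there is no `agent_id` to look up in the first place, so the function above would have nothing to resolve. That is the situation most teams in the survey describe.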

UC Berkeley’s Center for Long-Term Cybersecurity published the Agentic AI Risk-Management Standards Profile in February 2026, explicitly noting that agents introduce “unintended goal pursuit, unauthorized privilege escalation or resource acquisition, and other behaviors” that “complicate traditional, model-centric risk-management approaches and demand system-level governance.”26

As Forbes reported: “AI agents are negotiating contracts, processing transactions, and accessing sensitive data — all without a standardised way to prove who they are or what they’re authorised to do.”27

This is the acceleration problem. Each agentic workflow without Layer 3 is a potential ungoverned execution event. At 40% of enterprise applications, that’s not a risk — it’s a certainty.

Finding Your Gap

The three-layer model isn’t just a diagnostic framework. It’s a practical tool for assessing where your governance actually stands.

Three Questions for Your Next Board Meeting

- Layer 1 — Data Truth: Can you trace the lineage and provenance of every dataset that feeds an AI decision? Do you know who owns the data, what quality rules apply, and what privacy classifications govern its use?

- Layer 2 — Model Risk: Do you have a continuous risk management process for your AI systems? Can you demonstrate ongoing monitoring, drift detection, and validation? Who performs independent challenge?

- Layer 3 — Authority: Can you prove — right now, not after reconstruction — who authorised a specific AI decision? What mandate governed it? What evidence was admissible? Can you structurally prevent an AI action that lacks proper authority?

Most organisations can answer Question 1 reasonably well. Many are building toward Question 2. Almost none can answer Question 3.

The gap matters because the question when something goes wrong is always Question 3. Regulators, boards, and affected customers don’t ask “Was your data clean?” or “Did you validate the model?” They ask: “Who accepted this decision, and where is the proof?”

If you can’t answer that question architecturally — with infrastructure that produces proof by design, not forensic reconstruction — then your governance has a structural gap where it matters most.

The Paradigm Shift

The gap between Layers 1-2 and Layer 3 isn’t incremental. It’s a shift between two fundamentally different approaches to governance:

| Dimension | Explanation Paradigm (Layers 1-2 only) | Constraint Paradigm (All three layers) |
|---|---|---|
| When governance runs | After execution | Before and during execution |
| What it produces | Explanations, logs, reports | Evidence chains, decision traces, gate outcomes |
| What it can prevent | Nothing; it describes what happened | Unauthorised execution, structurally prevented |
| Regulatory posture | “We can explain what happened” | “We can prove authority existed before action” |

This isn’t a maturity spectrum where you gradually improve. It’s a fork in architecture. Organisations operating in the explanation paradigm will always be reconstructing evidence after the fact. Organisations that build the constraint paradigm produce evidence by design.

What Comes Next

This article is the map, not the implementation guide.

Understanding the three layers — and locating your gap — is the prerequisite for what follows: building the runtime authority infrastructure that makes Layer 3 real. That involves architectural patterns like policy-as-code enforcement, capability-based security for AI agents, evidence bundles that travel with decisions, and deterministic gates that verify authority before execution proceeds.

The tools exist. Policy-as-code frameworks like OPA and Cedar express authorisation rules as executable policy, and advanced platforms run such checks in parallel with inference requests at sub-millisecond latency impact.28 Identity and authorisation standards are being adapted for agents. The convergence of OAuth 2.1, policy-as-code, supply chain security (Sigstore), and specialised AI gateways creates a viable path to production-grade authority infrastructure.29
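The core idea behind policy-as-code is that authorisation rules live as versioned data evaluated by a deterministic engine, rather than as prose in a policy document. The toy evaluator below illustrates the shape of that idea in plain Python. It is not the OPA or Cedar API (those tools use their own policy languages, Rego and Cedar respectively), and the rule fields and roles are invented for the example.

```python
POLICIES = [
    # Each rule is data: it can be reviewed, versioned, and tested like code.
    {"id": "refund-small", "action": "issue_refund",
     "role": "support_agent", "max_amount": 500},
    {"id": "refund-large", "action": "issue_refund",
     "role": "finance_manager", "max_amount": 10_000},
]


def evaluate(request: dict, policies: list[dict] = POLICIES) -> dict:
    """Default-deny: allow only if some rule explicitly matches the request."""
    for rule in policies:
        if (rule["action"] == request["action"]
                and rule["role"] == request["role"]
                and request["amount"] <= rule["max_amount"]):
            return {"allow": True, "rule": rule["id"]}
    return {"allow": False, "rule": None}


decision = evaluate({"action": "issue_refund",
                     "role": "support_agent", "amount": 200})
```

Two properties matter for Layer 3: the same request always yields the same answer, and the answer names the rule that granted it, so the decision carries its own evidence.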

But architecture comes after understanding. And understanding starts with three questions and an honest assessment of where your answers fall short.

The EU AI Act enforcement deadline is August 2026. Agentic AI is already in production. The governance gap isn’t closing on its own.

The organisations that build the authority layer now will have provable governance when enforcement begins — and a governance moat against competitors still doing compliance cosplay.

Map your organisation against the three layers. Where is your gap?

If you’d like to discuss how the governance stack applies to your specific regulatory context, reach out at scott@leverageai.com.au

References

- [1]Knostic / Pacific AI. “The 20 Biggest AI Governance Statistics and Trends of 2025.” — “75% of organizations have established AI usage policies, yet only 36% have adopted a formal governance framework.” knostic.ai/blog/ai-governance-statistics

- [2]Pacific AI. “2025 AI Governance Survey.” — “Fewer than half (48%) of organizations monitor their production AI systems for accuracy, drift, and misuse. This critical practice plummets to just 9% among small companies.” pacific.ai/2025-ai-governance-survey/

- [3]Acuvity. “2025 State of AI Security Report.” — “70% report they have not reached optimized AI governance, which would include board-level oversight, automated monitoring, and regularly updated policies.” acuvity.ai/acuvity-releases-2025-state-of-ai-security-report/

- [4]Knostic / IBM. “The 20 Biggest AI Governance Statistics and Trends of 2025.” — “According to IBM’s 2025 Cost of a Data Breach Report… among these, 97% said they had no proper AI access controls in place.” knostic.ai/blog/ai-governance-statistics

- [5]Deloitte. “State of AI in the Enterprise 2026.” — “85% of enterprises plan agentic AI deployments, but only 21% have governance frameworks ready.” deloitte.com/us/en/what-we-do/capabilities/applied-artificial-intelligence/content/state-of-ai-in-the-enterprise.html

- [6]Atlan. “Data Governance for AI.” — “AI systems become ‘black boxes’ without reliable provenance mechanisms… Data lineage reveals data’s journey and transformations across systems.” atlan.com/know/data-governance/for-ai/

- [7]EU AI Act. “Article 14: Human Oversight.” — “High-risk AI systems shall be designed and developed in such a way… that they can be effectively overseen by natural persons during the period in which they are in use.” artificialintelligenceact.eu/article/14/

- [8]Bradley Law. “Global AI Governance — Five Key Frameworks Explained.” — “The AI RMF breaks down AI management into four core functions: Govern, Map, Measure, Manage.” bradley.com/insights/publications/2025/08/global-ai-governance-five-key-frameworks-explained

- [9]NIST NCCoE. “Accelerating the Adoption of Software and AI Agent Identity and Authorization.” — “How do we ensure non-repudiation for agent actions and binding back to human authorization?” nccoe.nist.gov/sites/default/files/2026-02/accelerating-the-adoption-of-software-and-ai-agent-identity-and-authorization-concept-paper.pdf

- [10]AWS Security Blog / ISO. “AI Lifecycle Risk Management: ISO/IEC 42001:2023 for AI Governance.” — “Defines formal requirements for AI governance, including risk assessment mandates, control implementation, and lifecycle oversight.” aws.amazon.com/blogs/security/ai-lifecycle-risk-management-iso-iec-420012023-for-ai-governance/

- [11]Standards Australia. “Spotlight on AS ISO/IEC 42001:2023.” — “Adopted as an identical Australian Standard in February 2024.” standards.org.au/blog/spotlight-on-as-iso-iec-42001-2023

- [12]KPMG Australia. “Trusted AI ISO Certification.” — “KPMG Australia is the first organisation to achieve ISO 42001 (AI) certification in Australia.” assets.kpmg.com/content/dam/kpmgsites/au/pdf/2025/trusted-ai-iso-certification-factsheet.pdf

- [13]SecurePrivacy. “EU AI Act 2026 Compliance Guide.” — “The most critical compliance deadline for most enterprises is August 2, 2026, when requirements for Annex III high-risk AI systems become enforceable.” secureprivacy.ai/blog/eu-ai-act-2026-compliance

- [14]EU AI Act Service Desk. “Article 9.” — “The risk management system shall be understood as a continuous iterative process planned and run throughout the entire lifecycle.” ai-act-service-desk.ec.europa.eu/en/ai-act/article-9

- [15]SecurePrivacy. “EU AI Act 2026 Compliance Guide.” — “Article 12 requires high-risk AI systems to automatically log events… Logs must be tamper-resistant to ensure auditability.” secureprivacy.ai/blog/eu-ai-act-2026-compliance

- [16]Federal Reserve. “SR 11-7: Guidance on Model Risk Management.” — “The use of models invariably presents model risk… A guiding principle throughout is ‘effective challenge.'” federalreserve.gov/supervisionreg/srletters/sr1107.htm

- [17]Yields.io. “The Three Lines of Defence in Model Risk Management.” — “Institutions typically treat SR 11-7 as a baseline rather than a complete standard.” yields.io/blog/the-three-lines-of-defence-in-model-risk-management/

- [18]Oscilar. “From Pilots to Production — How Banks Are Building With AI.” — “Shadow mode runs a new AI decisioning engine in parallel with the legacy system… Only the legacy system’s decisions execute.” oscilar.com/blog/aibank

- [19]Oxford AI Governance Institute. “Chain-of-Thought Is Not Explainability.” — “Safety-critical decisions might be justified post-hoc rather than revealing true failure modes.” aigi.ox.ac.uk/wp-content/uploads/2025/07/Cot_Is_Not_Explainability.pdf

- [20]ACL Anthology. “Examining the Faithfulness of DeepSeek R1’s Chain-of-Thought.” — “The model explicitly acknowledges a strong harmful hint in 94.6% of cases, it reports less than 2% of helpful hints.” aclanthology.org/2025.chomps-main.2.pdf

- [21]BIS Financial Stability Institute. “Managing Explanations: How Regulators Can Address AI Explainability.” — “Explainability confirmed as the top issue discussed between regulators and firms in relation to AI.” bis.org/fsi/fsipapers24.pdf

- [22]DevOps Digest / Gartner. “40% of Enterprise Apps Will Feature Task-Specific AI Agents by 2026.” devopsdigest.com/gartner-40-of-enterprise-apps-will-feature-task-specific-ai-agents-by-2026

- [23]Forbes / Gartner. “Agentic AI Takes Over — 11 Shocking 2026 Predictions.” — “More than 40% of agentic AI projects will be canceled by end of 2027 due to escalating costs, unclear business value or inadequate risk controls.” forbes.com/sites/markminevich/2025/12/31/agentic-ai-takes-over-11-shocking-2026-predictions/

- [24]Gravitee. “The State of AI Agent Security 2026.” — “Only 14.4% have full security approval. 60% of teams do not treat agents as independent identities.” gravitee.io/state-of-ai-agent-security

- [25]Strata. “The AI Agent Identity Crisis — A 2026 Guide.” — “Only 28% can reliably trace agent actions back to a human sponsor; 80% cannot tell in real time what agents are doing.” strata.io/blog/agentic-identity/the-ai-agent-identity-crisis-new-research-reveals-a-governance-gap/

- [26]Giovanni Coletta / UC Berkeley. “Agentic AI Governance Frameworks 2026.” — “These unique challenges ‘complicate traditional, model-centric risk-management approaches and demand system-level governance.'” medium.com/@giovannicoletta/agentic-ai-governance-frameworks-2026-risks-oversight-and-emerging-standards-c15bf2e6efca

- [27]Forbes. “40% Of Workflows Will Run On Agentic AI — Where’s the Identity?” — “AI agents are negotiating contracts, processing transactions, and accessing sensitive data — all without a standardised way to prove who they are.” forbes.com/sites/digital-assets/2026/02/13/40-of-workflows-will-run-on-agentic-ai-wheres-the-identity/

- [28]Ethyca. “Governing Enterprise Data and AI with Policy-as-Code.” — “Advanced platforms implement governance checks in parallel with inference requests, ensuring sub-millisecond latency impact.” ethyca.com/news/how-to-govern-data-and-ai-with-a-policy-as-code-approach

- [29]TMDevLab. “Production-Ready MCP: Zero Trust Security and Governance.” — “The convergence of mature identity protocols (OAuth 2.1), policy-as-code frameworks (OPA, Cedar), supply chain security tooling (Sigstore), and specialized AI gateways creates a viable path.” tmdevlab.com/mcp-zero-trust-security-governance.html
