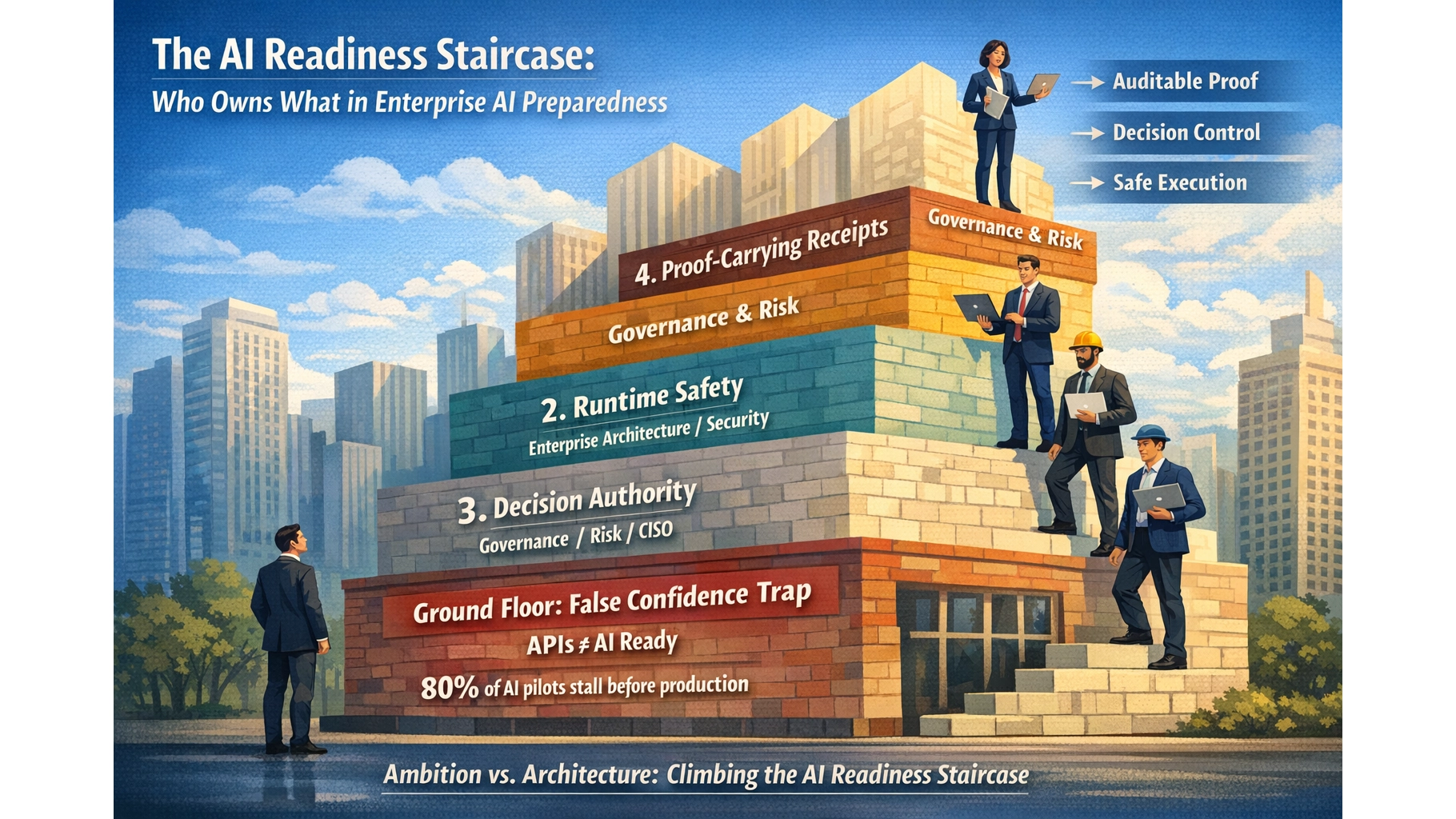

The AI Readiness Staircase: Who Owns What in Enterprise AI Preparedness

Most organisations think they’re AI-ready because their apps have APIs. They’re on the ground floor of a four-storey building.


- Enterprise AI readiness has four stacked layers: application fitness, runtime safety, decision-time authority, and proof-carrying receipts. Most organisations complete one and declare victory.

- Each layer requires distinct architectural machinery and a distinct owner. Confusing layers or leaving ownership ambiguous is the primary reason AI programmes stall.

- The portfolio steward, enterprise architect, governance/risk function, and project delivery each own different layers. Mapping this ownership is the unlock most enterprises are missing.

The False Confidence Trap

Close to three-quarters of enterprises plan to deploy agentic AI within two years. Only 21 per cent of those report having a mature model for agent governance.1

That is not an awareness gap. Executives know AI matters. It is an architectural gap: roughly four in five of the organisations planning to deploy agents lack a mature model for governing them, and no amount of strategy decks will close it.

The problem is not that organisations are doing nothing. Many have assessed their data, audited their applications, formed committees, written policies, and funded pilots. The problem is that they confuse completing one dimension of readiness with being ready across all of them.

This is the false confidence trap. An organisation aces its application audit, builds a working pilot, demonstrates value in a sandbox — and then discovers it has no runtime authority model, no decision-time enforcement, no evidence infrastructure, and no clear ownership of any of those gaps.

Most AI readiness assessments in the market stop at culture, data, and maybe APIs. Cisco’s AI Readiness Index found that global readiness actually declined from 2023 to 2024, with only 13 per cent of companies fully ready, even as 98 per cent reported increased urgency.3 Urgency is rising. Readiness is falling. That is the hallmark of organisations confusing desire with architecture.

The corrective is not more urgency. It is a better model of what “ready” actually means.

The Staircase: Four Layers of AI Readiness

Enterprise AI readiness is not a single checkbox. It is a staircase with four architectural layers, each building on the one below. Skipping a layer does not save time. It creates a predictable failure mode that surfaces later, usually at the worst possible moment.

The layers are not arbitrary. They reflect a dependency chain. You cannot safely run agents (Layer 2) against systems that are not machine-legible (Layer 1). You cannot enforce decision-time authority (Layer 3) without a runtime control plane (Layer 2). And you cannot produce proof-carrying receipts (Layer 4) unless the authority model, data provenance, and execution graph are already instrumented.
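The dependency chain can be expressed as a small rule: a layer only counts once every layer beneath it is complete. The sketch below is a hypothetical illustration of that rule (the `Layer` enum and both functions are invented names, not part of any framework). It also flags work done on higher layers that is stranded above a gap.

```python
from enum import IntEnum


class Layer(IntEnum):
    """The four readiness layers, numbered so each depends on the one below."""
    APPLICATION_FITNESS = 1
    RUNTIME_SAFETY = 2
    DECISION_AUTHORITY = 3
    PROOF_CARRYING_RECEIPTS = 4


def highest_reached(completed: set[Layer]) -> int:
    """Highest layer reached bottom-up; the first incomplete layer stops the climb.

    Returns 0 when Layer 1 itself is incomplete.
    """
    reached = 0
    for layer in Layer:  # iterates in definition order, 1 to 4
        if layer not in completed:
            break
        reached = int(layer)
    return reached


def stranded(completed: set[Layer]) -> list[Layer]:
    """Completed layers that sit above a gap and so cannot be relied on yet."""
    top = highest_reached(completed)
    return sorted(layer for layer in completed if layer > top)


# An organisation that wrote policy-as-code but never built runtime containment:
claimed = {Layer.APPLICATION_FITNESS, Layer.DECISION_AUTHORITY}
print(highest_reached(claimed))                     # prints 1
print([layer.name for layer in stranded(claimed)])  # prints ['DECISION_AUTHORITY']
```

The Layer 3 work in that example is real effort, but until runtime containment exists beneath it, the organisation is still on step one.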

Most organisations are not even halfway up. Policies and committees do not constitute machinery. A readiness questionnaire that stops at data quality and API posture is measuring the ground floor and calling it the whole building.

Layer 1: Application AI-Fitness

This is where most readiness assessments begin — and where most stop. The question is straightforward: can your software stack actually be used by AI in a sane way?

AI readiness is not just an organisational capability problem. It is also an application estate problem. A lot of AI strategy work focuses on the usual suspects — leadership alignment, governance, workforce readiness, data quality, risk controls. But there is another layer sitting underneath: whether the applications themselves are structurally compatible with AI-mediated operations.

An application AI-fitness assessment asks questions like:

- Does the system expose APIs, events, and structured actions — or only browser interaction?

- Are business objects and relationships externally accessible, or hidden behind screen logic?

- Can AI safely perform scoped actions with proper permissions and audit trails?

- Is the documentation sufficient for both humans and machines?

- Can interactions be logged, constrained, authorised, and audited?
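One way to apply these questions across an estate is a per-application record that rolls up into a triage verdict. The sketch below is hypothetical: the field names mirror the five questions above, and the rule that missing programmatic access or missing auditability makes an application a structural blocker is an assumption chosen for illustration, not a published scoring method.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class AppFitness:
    """One application's answers to the five Layer 1 questions (hypothetical schema)."""
    name: str
    structured_interfaces: bool  # APIs, events, structured actions, not browser-only
    objects_accessible: bool     # business objects and relationships exposed externally
    scoped_actions: bool         # AI can act with scoped permissions and audit trails
    machine_readable_docs: bool  # documentation usable by humans and machines
    auditable: bool              # interactions can be logged, constrained, authorised


def gaps(app: AppFitness) -> list[str]:
    """Names of the fitness criteria this application fails."""
    return [
        f.name for f in fields(app)
        if isinstance(getattr(app, f.name), bool) and not getattr(app, f.name)
    ]


def verdict(app: AppFitness) -> str:
    """Assumed triage rule: no programmatic access or no auditability is a blocker."""
    missing = gaps(app)
    if not missing:
        return "fit"
    if "structured_interfaces" in missing or "auditable" in missing:
        return "blocker"
    return "partial"


legacy = AppFitness("legacy-claims", structured_interfaces=False, objects_accessible=False,
                    scoped_actions=False, machine_readable_docs=True, auditable=False)
print(verdict(legacy))  # prints blocker
```

A "blocker" verdict is the kind of finding that belongs in renewal and replacement decisions, not just in an integration backlog.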

If a critical application is not AI-ready, then every AI initiative touching that application becomes slower, more expensive, less reliable, and harder to govern. Bad application AI-fit is a portfolio risk — not just an integration inconvenience.

Gartner predicts that through 2026, organisations will abandon 60 per cent of AI projects unsupported by AI-ready data.4 Extend that logic to AI-ready applications, and the failure surface becomes even larger.

This is necessary work. But it is only Layer 1. Application fitness is the ground floor of a four-storey building.

Layer 2: Runtime-Safe Agent Execution

Once applications are machine-legible, the next question becomes: can the organisation safely run AI agents against those applications without trusting them not to do something catastrophic?

This is the SiloOS layer: runtime containment architecture for agents you cannot and should not trust. The principle is not “make AI trustworthy.” It is “make trustworthiness irrelevant,” achieved by enforcing architectural containment: scoped permissions, stateless execution, tokenisation of sensitive data, zero-trust agent identity, and blast-radius management.5
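To make containment concrete, here is a minimal sketch of a mediator that sits between an untrusted agent and its tools. It is not SiloOS; `AgentSandbox`, its methods, and the budget figure are hypothetical. It shows three of the listed controls: deny-by-default tool scopes, tokenisation so the agent handles opaque tokens instead of raw sensitive values, and a per-session action budget as a crude blast-radius limit. Stateless execution and zero-trust identity are left out to keep it short.

```python
import secrets
from collections.abc import Callable


class ScopeError(PermissionError):
    """Raised when an agent asks for something outside its containment."""


class AgentSandbox:
    """Hypothetical mediator between an untrusted agent and its tools."""

    def __init__(self, agent_id: str, allowed_tools: set[str], max_actions: int):
        self.agent_id = agent_id
        self.allowed_tools = frozenset(allowed_tools)  # scope fixed when granted
        self.max_actions = max_actions                  # crude blast-radius budget
        self.actions_taken = 0
        self._vault: dict[str, str] = {}                # token -> real value, kept here

    def tokenise(self, sensitive_value: str) -> str:
        """Replace a sensitive value with an opaque token the agent can pass around."""
        token = "tok_" + secrets.token_hex(8)
        self._vault[token] = sensitive_value
        return token

    def call(self, tool: str, handler: Callable[..., str], *args: str) -> str:
        """Run a tool for the agent only if it is in scope and within budget."""
        if tool not in self.allowed_tools:  # deny by default
            raise ScopeError(f"{self.agent_id} is not scoped for {tool!r}")
        if self.actions_taken >= self.max_actions:
            raise ScopeError(f"{self.agent_id} has exhausted its action budget")
        self.actions_taken += 1
        # Tokens are resolved only inside the mediator, so real values reach the
        # tool but never the agent's context. A fuller design would also
        # tokenise whatever the tool returns.
        real_args = [self._vault.get(arg, arg) for arg in args]
        return handler(*real_args)


def lookup_policy(email: str) -> str:
    """Stand-in tool; it sees the real email, the agent does not."""
    return "POL-123" if email.endswith("@example.com") else "none"


box = AgentSandbox("claims-agent", {"lookup_policy"}, max_actions=2)
email = box.tokenise("jane@example.com")  # the agent only ever holds "tok_..."
print(box.call("lookup_policy", lookup_policy, email))  # prints POL-123
```

Calling `box.call("issue_refund", ...)` on the same sandbox would raise `ScopeError`, because the grant never included that tool. The agent does not need to be trustworthy for that refusal to hold.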

The urgency here is not theoretical. SailPoint’s 2025 research found that 80 per cent of organisations have already experienced unintended actions by AI agents — including accessing unauthorised systems (39%), downloading sensitive data (32%), and sharing restricted information (33%). Nearly a quarter reported agents being tricked into revealing access credentials.6

Without Layer 2, every autonomous tool call is an ungoverned execution event. Gartner predicts over 40 per cent of agentic AI projects will be cancelled by end of 2027 due to escalating costs, unclear business value, or inadequate risk controls.7

Organisations using tiered authorisation models experience 76 per cent fewer agent safety incidents than those using binary autonomous/non-autonomous approaches.8 The architecture matters more than the policy.
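The difference between binary and tiered authorisation is easy to see in code. A binary model is one switch (autonomous or not) applied to every action; a tiered model routes each action by its own risk. In this hypothetical sketch, the tier names, the prohibited action kinds, and the 1,000 threshold are assumptions for illustration, not values from the cited research.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    AUTONOMOUS = "execute without review"
    HUMAN_APPROVAL = "hold for a named approver"
    PROHIBITED = "never delegated to an agent"


@dataclass(frozen=True)
class Action:
    kind: str
    amount: float     # monetary exposure of this specific action
    reversible: bool  # can it be cleanly undone?


# Hypothetical policy values, for illustration only
PROHIBITED_KINDS = {"delete_customer", "change_bank_details"}
APPROVAL_THRESHOLD = 1_000.0


def tier_for(action: Action) -> Tier:
    """Route each action to the tier its risk warrants, rather than one global switch."""
    if action.kind in PROHIBITED_KINDS:
        return Tier.PROHIBITED
    if not action.reversible or action.amount > APPROVAL_THRESHOLD:
        return Tier.HUMAN_APPROVAL
    return Tier.AUTONOMOUS


print(tier_for(Action("issue_refund", 50.0, reversible=True)).name)     # prints AUTONOMOUS
print(tier_for(Action("issue_refund", 5_000.0, reversible=True)).name)  # prints HUMAN_APPROVAL
```

The same agent performing the same kind of action lands in different tiers depending on exposure, which a single autonomous/non-autonomous flag cannot express.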

Microsoft’s release of the Agent Governance Toolkit in April 2026, which it presents as the first toolkit to address all ten OWASP agentic AI risks with deterministic, sub-millisecond policy enforcement, signals that the market now recognises runtime safety as infrastructure, not an afterthought.9

Layer 3: Decision-Time Authority

Runtime containment keeps agents from going rogue. Decision-time authority ensures that every consequential AI action is structurally impossible without valid authorisation.

This is the difference between governance-as-documentation and governance-as-infrastructure. Most organisations have the first. Very few have the second.

Only 34 per cent of organisations with governance policies use any technology to actually enforce them.10 Three-quarters of organisations have AI usage policies, responsible AI principles, and governance charters — but none of these run at decision time. None can technically prevent an unauthorised action from executing.

“Governance that runs after the decision is already too late. Governance that runs with the decision changes system behaviour.”

— Compliance Cosplay, LeverageAI

Layer 3 requires what I’ve called the In-Path Authority pattern: runtime machinery where authority is not merely recorded but evaluated — where policy-as-code turns a delegated mandate into a machine-verifiable test at the moment of execution. Authority is potential; policy evaluation is activation.11
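Here is a minimal sketch of the in-path idea, under stated assumptions: `Mandate` is a hypothetical record of delegated authority, and `in_path_authority` is a hypothetical decorator. What it illustrates is placement. The policy is evaluated inside the call path, before the action body runs, so an action without valid authority never executes instead of being logged after the fact. Production systems would more likely delegate evaluation to a dedicated policy engine such as Open Policy Agent.

```python
import functools
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


class UnauthorisedAction(Exception):
    """Raised before execution when no valid mandate covers the action."""


@dataclass(frozen=True)
class Mandate:
    """Hypothetical delegated authority: who granted what, to whom, until when."""
    grantor: str
    grantee: str
    actions: frozenset[str]
    limit: float
    expires: datetime


def evaluate(mandate: Mandate, agent: str, action: str, amount: float) -> Optional[str]:
    """Policy-as-code: return a denial reason, or None if authority is valid right now."""
    if mandate.grantee != agent:
        return "mandate not delegated to this agent"
    if action not in mandate.actions:
        return f"{action!r} is outside the delegated scope"
    if amount > mandate.limit:
        return f"amount {amount} exceeds delegated limit {mandate.limit}"
    if datetime.now(timezone.utc) >= mandate.expires:
        return "mandate expired"
    return None


def in_path_authority(action: str):
    """Decorator placing the authority check inside the execution path."""
    def wrap(fn):
        @functools.wraps(fn)
        def guarded(agent: str, mandate: Mandate, amount: float, *args, **kwargs):
            reason = evaluate(mandate, agent, action, amount)
            if reason is not None:
                raise UnauthorisedAction(reason)  # fn is never called
            return fn(agent, mandate, amount, *args, **kwargs)
        return guarded
    return wrap


@in_path_authority("issue_refund")
def issue_refund(agent: str, mandate: Mandate, amount: float, order_id: str) -> str:
    return f"refunded {amount:.2f} on {order_id}"


if __name__ == "__main__":
    mandate = Mandate("cfo@example.com", "refund-agent", frozenset({"issue_refund"}),
                      limit=500.0, expires=datetime.now(timezone.utc) + timedelta(hours=1))
    print(issue_refund("refund-agent", mandate, 120.0, "A-1001"))  # refunded 120.00 on A-1001
    try:
        issue_refund("refund-agent", mandate, 900.0, "A-1002")
    except UnauthorisedAction as denied:
        print("blocked before execution:", denied)
```

The second call is refused before `issue_refund` touches anything. That is the behavioural difference between governance that runs with the decision and governance that reviews it afterwards.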

The compliance cosplay diagnostic makes this concrete with three questions:12

- Can your system technically prevent an unauthorised AI decision from executing right now?

- Can you prove — from system-generated evidence, not reconstructed logs — who had authority before it executed?

- If a regulator asked “show me the decision-time evidence chain,” could you produce it without investigation?

If the answer to any of these is no, the organisation has governance theatre, not governance infrastructure.

Layer 4: Proof-Carrying Receipts

The pinnacle of the staircase. This layer asks: can every consequential AI decision produce a portable, self-verifying evidence bundle?

A proof-carrying receipt — what I’ve described elsewhere as a Decision Attestation Package — cryptographically binds three things together:13

- Signed authority — who authorised this action, under what scope, at what time

- Signed data — what evidence was observed and used in the decision

- Signed graph — what deterministic machinery produced the outcome
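The binding can be sketched with standard-library primitives. In this hypothetical example, each of the three parts is signed with its own key, and a final binding signature covers all three part signatures, so no part can be edited or swapped without verification failing. One loud caveat: HMAC-SHA256 is used here only because it ships with Python. A shared-secret MAC cannot provide non-repudiation, so a real receipt would use asymmetric signatures (for example Ed25519) with keys held by the authorising, data, and execution parties. The function and field names are illustrative, not the Decision Attestation Package format.

```python
import hashlib
import hmac
import json
import secrets

PARTS = ("authority", "data", "graph")


def _canonical(obj) -> bytes:
    """Deterministic JSON encoding so identical content always signs identically."""
    return json.dumps(obj, sort_keys=True, separators=(",", ":")).encode()


def _sign(key: bytes, payload: bytes) -> str:
    # Stand-in only: HMAC proves integrity to key holders, not who signed.
    # Use asymmetric signatures where non-repudiation is required.
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def make_receipt(authority: dict, data: dict, graph: dict, keys: dict) -> dict:
    """Sign each part separately, then bind the three signatures together."""
    body = {"authority": authority, "data": data, "graph": graph}
    signatures = {part: _sign(keys[part], _canonical(body[part])) for part in PARTS}
    binding = _sign(keys["receipt"], _canonical(signatures))
    return {**body, "signatures": signatures, "binding": binding}


def verify_receipt(receipt: dict, keys: dict) -> bool:
    """Recompute every signature; an edited part or swapped signature fails."""
    for part in PARTS:
        expected = _sign(keys[part], _canonical(receipt[part]))
        if not hmac.compare_digest(expected, receipt["signatures"][part]):
            return False
    expected_binding = _sign(keys["receipt"], _canonical(receipt["signatures"]))
    return hmac.compare_digest(expected_binding, receipt["binding"])


keys = {name: secrets.token_bytes(32) for name in (*PARTS, "receipt")}
receipt = make_receipt(
    authority={"grantor": "cfo@example.com", "scope": "issue_refund<=500"},
    data={"order": "A-1001", "amount": 120.0},
    graph={"pipeline": "refund-v3", "steps": ["fetch", "check", "pay"]},
    keys=keys,
)
print(verify_receipt(receipt, keys))  # prints True
receipt["data"]["amount"] = 9000.0    # tamper with the evidence after the fact
print(verify_receipt(receipt, keys))  # prints False
```

Because verification needs only the bundle and the verification keys, the check can be replayed by an auditor without reconstructing logs.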

The distinction from Layer 3 is subtle but important. Layer 3 prevents unauthorised execution. Layer 4 produces portable proof that the authorisation, evidence, and process were valid. Layer 3 is the lock on the door. Layer 4 is the receipt that proves the lock was checked, by whom, and what was behind it.

This matters because regulatory expectations are hardening. Obligations for high-risk AI systems under the EU AI Act begin to apply in August 2026, requiring documented risk management, automatic logging, human oversight, and safeguards for accuracy and robustness.14 APRA’s CPS 230 took effect for Australian financial services on 1 July 2025, and pre-existing service-provider contracts must comply by July 2026.15 NIST’s NCCoE published a concept paper in February 2026 specifically on AI agent identity and authorisation, signalling that agent identity requires new infrastructure, not just policy.16

Under these regimes, “we’ll investigate and get back to you” may not be sufficient. The expectation is moving toward decision-time evidence chains producible on demand.

Gartner named Digital Provenance as a top strategic technology trend for 2026.17 The market is catching up to the architectural reality: monitoring tells you what happened; governance proves what was authorised.

Not many applications or infrastructure stacks measure up to Layer 4 today. That is expected. But organisations that do not know it exists as a destination are building readiness programmes that stop three floors short.

Who Owns What: The Ownership Mapping

The staircase model is useful. But it only becomes actionable when ownership is clear.

The most common failure mode is not “nobody cares about readiness.” It is “everyone cares, nobody owns it, and all four layers blur into a single governance committee that owns everything and delivers nothing.” McKinsey’s research shows that high performers are three times more likely to have senior leaders demonstrating clear ownership of AI initiatives.18 Fifty-eight per cent of leaders identify disconnected governance systems as the primary obstacle preventing them from scaling AI responsibly.19

Here is the ownership split:

| Layer | Primary Owner | What They Own |
|---|---|---|
| 1. Application Fitness | Portfolio Steward / CIO | Assess application AI-fit. Flag poor-fit systems. Feed AI-fitness into upgrade, renewal, replacement, and legacy decisions. Prevent new projects from deepening dependency on structurally unfit platforms. |
| 2. Runtime Safety | Enterprise Architecture / Security | Design cross-cutting future-state architecture for agent containment, tool mediation, identity, and isolation. Define reference patterns for governed agent operations. Own the transition roadmap from current estate to runtime safety. |
| 3. Decision Authority | Governance / Risk / CISO | Own the authority model, delegated authority policy, risk tiers, approval scopes, and policy-as-code enforcement. Design in-path authority that prevents unauthorised execution rather than merely logging it. |
| 4. Proof-Carrying Receipts | Governance / Risk (shared with EA) | Define receipt standards, non-repudiation requirements, evidence-bundle format, and audit infrastructure. Own the attestation architecture that makes decisions self-proving. |
| Projects | Delivery Teams | Consume the doctrine. Use application readiness findings, implement wrappers and safe tool layers, conform to authority and receipt requirements. Projects are consumers of the doctrine, not authors of it. |

The critical distinction is between owns and influences.

The portfolio steward owns application fitness and investment gating. He influences but does not own enterprise reference architecture, runtime controls, authority infrastructure, or receipt standards. Those require joint ownership across EA, security, risk, and governance.

“The portfolio steward does not have to own AI governance. He only has to make sure portfolio decisions are not made blind to it.”

That is the elegant split. It keeps the politics clean and the architecture honest.

What Happens When Layers Are Confused

Layer confusion creates predictable failure modes:

Completing Layer 1 and declaring total readiness

The organisation audits its applications, finds reasonable API posture, and launches pilots, then discovers at the production gate that it has no runtime authority model, no containment architecture, and no evidence infrastructure. The pilot worked in the sandbox. It cannot be deployed because Layers 2 through 4 do not exist. This pattern helps explain the 80/70 paradox: 80 per cent of pilots fail to reach production despite 70 per cent succeeding technically.2

Building governance without runtime enforcement

The organisation writes policies, forms a committee, publishes an AI ethics charter. None of it executes at runtime. A rogue agent action occurs. The committee meets. There are no system-generated evidence chains. The discussion becomes “what do we think happened?” rather than “here is what the system proves.” Governance without staging is policy without enforcement.20

Assigning everything to one owner

A single “AI governance lead” or committee is handed all four layers. They are accountable for application assessment, runtime architecture, authority design, and receipt infrastructure. They have authority over none of these functions. Progress stalls because the scope exceeds any single role. The most common failure mode in enterprise AI is not “nobody cares” — it is everyone caring without anyone owning.

The Capital Context: Why This Is Urgent Now

The infrastructure is arriving whether enterprises are ready or not.

Hyperscalers are planning to spend nearly $700 billion on AI infrastructure in 2026 alone, with Amazon projecting $200 billion, Google $175–185 billion, and Meta $115–135 billion.21 The Stargate project targets $500 billion by 2029.22 Global enterprise AI spending is projected to reach $665 billion in 2026, yet 73 per cent of deployments fail to achieve projected ROI.23

Capital has already decided this matters. The strategic bottleneck is no longer belief. It is enterprise translation — the ability to turn infrastructure availability into governed, valuable internal action.

Organisations that fail to map readiness across all four layers will find their AI programmes stranded against infrastructure they cannot safely use. The world is drowning in model spend, chip spend, and data-centre spend. Enterprises are still struggling with selection, governance, readiness, architecture, and value capture.

Self-Assessment: Which Step Are You On?

Layer 1 — Application Fitness

- Have you assessed your major applications for AI operability (API posture, business-object legibility, action safety, documentation quality)?

- Do your portfolio investment decisions — renewals, upgrades, replacements — include AI-fitness as an assessment criterion?

- Can you identify which applications would be structural blockers to AI-mediated operations?

Layer 2 — Runtime Safety

- Can your infrastructure run an untrusted AI agent with scoped permissions, stateless execution, and blast-radius containment?

- Do you have agent identity, tool mediation, and isolation architecture in place — or are agents operating with broad, unscoped access?

- If an agent accessed an unauthorised system tomorrow, would your architecture detect and prevent it?

Layer 3 — Decision-Time Authority

- Can your system technically prevent an unauthorised AI action from executing — not merely log it after the fact?

- Is authority evaluated at runtime through policy-as-code, or documented in PDFs that no machine reads?

- Can you prove from system-generated evidence who had authority before an action executed?

Layer 4 — Proof-Carrying Receipts

- Can every consequential AI decision produce a portable evidence bundle (signed authority, signed data, signed execution graph)?

- If a regulator asked for the decision-time evidence chain, could you produce it without investigation?

- Is your audit posture based on structured evidence replay, or forensic log reconstruction?

Most organisations will answer “yes” to some of the Layer 1 questions and “no” to nearly everything in Layers 2 through 4. That is not failure — it is honest positioning. The staircase gives permission to be at any step, as long as you know which step you are on and who is responsible for climbing the next one.
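The self-assessment can also be scored mechanically. This hypothetical sketch assumes a layer counts as complete only when every question in it is answered “yes”, and that the climb stops at the first incomplete layer, mirroring the dependency chain between layers. It then names the primary owner of the next step from the ownership table. The all-or-nothing rule is a deliberate simplification.

```python
from typing import Optional

# Primary owners per layer, taken from the ownership table in this article
OWNERS = {
    1: "Portfolio Steward / CIO",
    2: "Enterprise Architecture / Security",
    3: "Governance / Risk / CISO",
    4: "Governance / Risk (shared with EA)",
}


def current_step(answers: dict[int, list[bool]]) -> tuple[int, Optional[str]]:
    """Return (step reached, owner of the next step) from yes/no answers per layer.

    Assumed rule: a layer is complete only if every question in it is answered
    yes, and the climb stops at the first incomplete layer.
    """
    reached = 0
    for layer in (1, 2, 3, 4):
        if not answers.get(layer) or not all(answers[layer]):
            break
        reached = layer
    return reached, OWNERS.get(reached + 1)  # None once Layer 4 is complete


# A typical profile: Layer 1 done, scattered "yes" answers higher up
typical = {1: [True, True, True], 2: [True, False, False],
           3: [False, False, True], 4: [False, False, False]}
print(current_step(typical))  # prints (1, 'Enterprise Architecture / Security')
```

The stray “yes” in Layer 3 does not move the organisation up the staircase; it only shows where future work will eventually connect.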

Being Ready Is Not the Same as Being Evangelical

The staircase is not a call to rush into AI. It is a call to understand readiness before urgency arrives.

Being ready for something does not mean you are asking for it. A portfolio steward who assesses application fitness is not advocating for uncontrolled AI rollout. An enterprise architect who designs runtime containment patterns is not predicting when agents will arrive. They are making sure the organisation is not structurally unready when the economics, governance, and competitive pressure make movement necessary.

Preparedness is not advocacy. It is stewardship.

In regulated industries especially, the winning frame is not AI adoption. It is AI preparedness. Responsive, not reactive. Prepared, not panicked. Informed, not evangelical.

“We don’t need to commit to AI deployment to justify understanding whether our application estate is fit for an AI-enabled future.”

An organisation is not AI-ready because its apps are accessible. It is AI-ready when applications, runtime controls, and proof-carrying governance all line up. The staircase shows where you are. The ownership mapping shows who climbs next.

Map your organisation against the staircase. Identify which layer is actually blocking you, and who should own the next step.

For a deeper look at application fitness assessment, read the full application readiness framework. For the governance architecture behind Layers 3 and 4, see The Governance Stack and Decision Attestation Packages.

Questions or want to discuss your readiness posture? scott@leverageai.com.au

References

1. Deloitte, “State of AI in the Enterprise 2026”: “Close to three-quarters of companies planning to deploy Agentic AI within two years, yet only 21% of those companies report having a mature model for agent governance.” deloitte.com/us/en/about/press-room/state-of-ai-report-2026.html

2. AIM Research Councils, “AI Insights in 2025: Scale is the Strategy”: “80% of AI pilots fail to reach production” despite “70% succeeding technically.” councils.aimmediahouse.com/ai-insights-in-2025-shows-scale-is-the-strategy/

3. Cisco, “2024 AI Readiness Index”: “Global AI readiness has actually declined from 2023 to 2024, with only 13% of companies today fully ready to capture AI’s potential” (down from 14% a year earlier). newsroom.cisco.com/c/r/newsroom/en/us/a/y2024/m11/cisco-2024-ai-readiness-index-urgency-rises-readiness-falls.html

4. Gartner, “Lack of AI-Ready Data Puts AI Projects at Risk,” February 2025: “Through 2026, organizations will abandon 60% of AI projects unsupported by AI-ready data.” gartner.com/en/newsroom/press-releases/2025-02-26-lack-of-ai-ready-data-puts-ai-projects-at-risk

5. Farrell, S., “SiloOS: The Agent Operating System for AI You Can’t Trust,” LeverageAI: “AI security should be architected to make trustworthiness irrelevant by containing the agent’s blast radius through zero trust, statelessness, tokenization, and audit-first design.” leverageai.com.au/wp-content/media/SiloOS.html

6. SailPoint, “AI Agents: The New Attack Surface,” May 2025: “80% of organizations have encountered unintended actions by their AI agents… 96% of IT professionals consider AI agents a growing security risk.” sailpoint.com/identity-library/ai-agents-attack-surface

7. Gartner, “Predicts Over 40% of Agentic AI Projects Will Be Canceled by End of 2027,” June 2025. gartner.com/en/newsroom/press-releases/2025-06-25-gartner-predicts-over-40-percent-of-agentic-ai-projects-will-be-canceled-by-end-of-2027

8. Strata.io, “What is Agentic AI Security? A Guide for 2026”: “Organizations using tiered authorization models experience 76% fewer agent safety incidents than those using binary authorization.” strata.io/blog/agentic-identity/8-strategies-for-ai-agent-security-in-2025/

9. Microsoft, “Introducing the Agent Governance Toolkit,” April 2026. opensource.microsoft.com/blog/2026/04/02/introducing-the-agent-governance-toolkit-open-source-runtime-security-for-ai-agents/

10. Dataversity, “AI Governance in 2026: Is Your Organization Ready?”: “Only 34% of organizations with governance policies use any technology to actually enforce them.” dataversity.net/articles/ai-governance-in-2026-is-your-organization-ready/

11. Farrell, S., “Getting Enterprise AI-Ready: Governance as Code, Not Committees,” LeverageAI. leverageai.com.au/getting-enterprise-ai-ready-governance-as-code-not-committees/

12. Farrell, S., “Compliance Cosplay: Why AI Governance Without Runtime Authority Is Theatre,” LeverageAI. leverageai.com.au/wp-content/media/Compliance_Cosplay.html

13. Farrell, S., “AI Governance Means Signing the Authority, the Data, and the Graph,” LeverageAI. leverageai.com.au/ai-governance-means-signing-the-authority-the-data-and-the-graph/

14. LegalNodes, “EU AI Act Compliance Guide 2026”: “Rules for high-risk AI will come into effect in August 2026.” legalnodes.com/article/eu-ai-act-2026-updates-compliance-requirements-and-business-risks

15. APRA, “2025-26 Corporate Plan”: “CPS 230 took effect 1 July 2025, with pre-existing service provider contracts requiring compliance by July 2026.” apra.gov.au/apra-corporate-plan-2025-26

16. NIST NCCoE, “Accelerating the Adoption of Software and AI Agent Identity and Authorization,” February 2026. nccoe.nist.gov/sites/default/files/2026-02/accelerating-the-adoption-of-software-and-ai-agent-identity-and-authorization-concept-paper.pdf

17. Gartner, “Top Strategic Technology Trends for 2026: Digital Provenance.” gartner.com/en/documents/7031598

18. McKinsey, “The State of AI 2025”: “High performers are three times more likely than their peers to strongly agree that senior leaders demonstrate ownership of and commitment to their AI initiatives.” mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai

19. Dataversity, “AI Governance in 2026”: “58% of leaders identify disconnected governance systems as the primary obstacle preventing them from scaling AI responsibly.” dataversity.net/articles/ai-governance-in-2026-is-your-organization-ready/

20. Opsima, “Enterprise AI Governance & Security: IT Framework 2026”: “Governance without staging is policy without enforcement. Architecture does [prevent shadow AI deployments].” opsima.com/blog/industry-insights/enterprise-ai-governance-industrial-it/

21. CNBC, “Tech AI spending approaches $700 billion in 2026,” February 2026. cnbc.com/2026/02/06/google-microsoft-meta-amazon-ai-cash.html

22. Wikipedia, “Stargate LLC” (source for the $500 billion AI infrastructure investment target by 2029). en.wikipedia.org/wiki/Stargate_LLC

23. AI Governance Today, “The $665 Billion AI Spending Crisis”: “Global enterprise AI spending will hit $665 billion in 2026, yet 73% of deployments fail to achieve projected ROI.” aigovernancetoday.com/news/enterprise-ai-spending-crisis-2026
