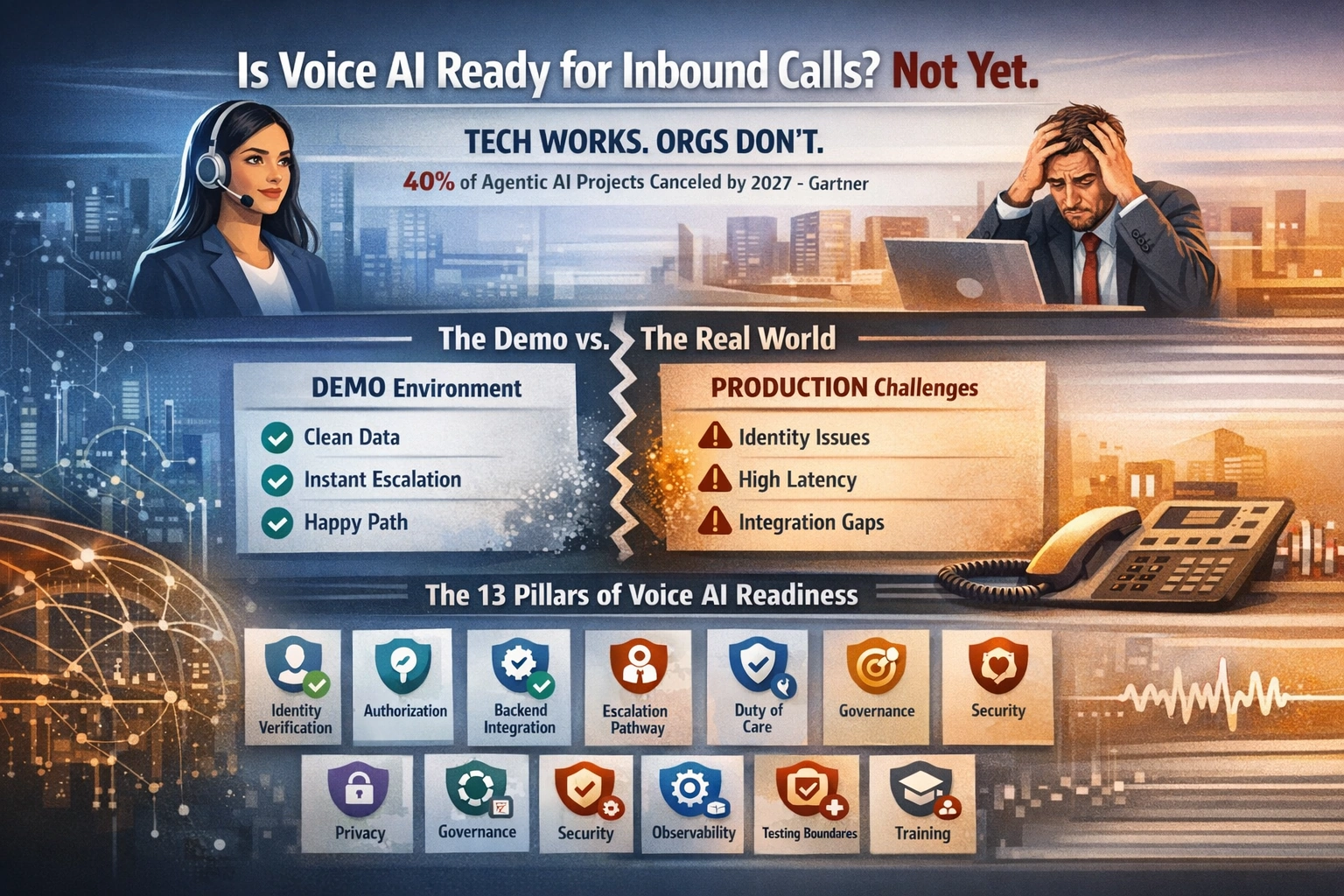

Is Voice AI Ready for Inbound Calls? Not Yet.

Voice AI demos are impressive. But moving from demo to production is where most organizations stumble. Gartner predicts over 40% of agentic AI projects will be cancelled by the end of 2027 due to cost, unclear value, or inadequate risk controls.


TL;DR

- Voice AI technology works – the models can hold natural conversations

- Organizations aren’t ready – they lack identity verification, authorization systems, escalation pathways, and privacy controls

- The 13 Pillars define what must exist before voice AI can work safely in high-stakes contexts

Here’s the uncomfortable truth: Voice AI can now hold remarkably natural conversations. Turn-taking is fluid. Responses sound human. The technology has crossed a threshold that makes vendor demos genuinely impressive.

And yet, enterprise AI pilots often stall after demos. Gartner predicts over 40% of agentic AI projects will be cancelled by the end of 2027 [1].

The failures aren’t accuracy problems caught in the lab. They’re latency and integration issues discovered only in production. Organizations buy voice AI expecting a “smart IVR upgrade” and instead discover they’ve signed up for a safety-critical, privacy-sensitive service redesign.

The hardest parts aren’t the model. They’re identity, authorization, duty-of-care pathways, and governance in organizations that often lack unified systems and staffed escalation routes.

The Demo-to-Deployment Gap

Voice AI demos hide a structural problem. In a controlled demo, the bot knows who you are. It has access to a clean test database. Every question has a happy path. Escalation goes to a human standing right there.

In production, none of that exists.

Gartner predicts that over 40% of agentic AI projects will be cancelled by the end of 2027 [3]. On multi-step agent benchmarks like TheAgentCompany and OSWorld, end-to-end success rates remain low enough to make full autonomy risky without strong guardrails [4]. These aren’t edge cases – they’re baseline performance when AI meets real-world complexity.

The pattern is consistent: across enterprise surveys, data quality and integration are reported as major blockers to scaling AI beyond pilots. Not model capability. Not prompt engineering. Organizational readiness.

The Latency Budget Is Brutal

Human conversation timing is biological, not negotiable. When you ask someone a question, you expect a response within 500 milliseconds [5]. When AI systems exceed this window, conversations feel broken and awkward.

Production voice AI agents typically aim for latency of 800ms or lower for optimal user experience [6]. But that 800ms isn’t just “thinking time” for the model. It’s the entire pipeline:

- Audio capture and encoding

- Transmission to server

- Speech-to-text conversion

- LLM generation time

- Text-to-speech synthesis

- Audio playback

End-to-end turn latency is often measured in seconds once you include telephony, STT, model time, and TTS, unless the system is engineered aggressively for low latency [8]. On telephony networks (PSTN), roughly 500ms of latency is introduced across the call path before any processing begins [8].

That leaves a few hundred milliseconds for turn detection, retrieval, reasoning, and speech synthesis. Now add the work a voice agent actually needs to do:

- Identity verification (database lookup)

- Authorization checks (guardian status, permissions)

- System integration (scheduling, CRM)

- Policy enforcement (what the agent can and cannot do)

That is too much work for the roughly 300 milliseconds that remain. Latency has a disproportionate impact on perceived quality in real-time conversations. Once delays stretch into seconds, the interaction feels broken. A three-second delay all but guarantees a negative experience.
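The arithmetic behind the 300ms figure can be made explicit. The sketch below uses the two numbers quoted above (an 800ms target and roughly 500ms of PSTN overhead). The per-step estimates are illustrative assumptions, not measurements from any real system.

```python
# Figures quoted in the text above.
TARGET_MS = 800          # practitioner target for one full conversational turn
PSTN_OVERHEAD_MS = 500   # rough telephony overhead before processing begins

# Hypothetical per-step estimates in milliseconds (assumptions, not benchmarks).
STEP_ESTIMATES_MS = {
    "speech_to_text": 150,
    "identity_lookup": 120,
    "authorization_check": 80,
    "backend_integration": 250,
    "llm_generation": 300,
    "text_to_speech": 150,
}


def remaining_budget(target_ms: int, overhead_ms: int) -> int:
    """Milliseconds left for processing once network overhead is paid."""
    return target_ms - overhead_ms


def overrun(steps: dict, budget_ms: int) -> int:
    """How far running every step in sequence exceeds the budget (0 if it fits)."""
    return max(0, sum(steps.values()) - budget_ms)


budget = remaining_budget(TARGET_MS, PSTN_OVERHEAD_MS)
total = sum(STEP_ESTIMATES_MS.values())
print(f"Processing budget: {budget} ms")
print(f"Sequential work:   {total} ms")
print(f"Overrun:           {overrun(STEP_ESTIMATES_MS, budget)} ms")
```

Swapping in your own measured step times shows quickly whether a design that runs every step in sequence can ever fit the window.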

“Your voice bot isn’t failing because AI is slow. It’s failing because you’re making one brain do everything at once.”

– LeverageAI: Fast-Slow Split

The Thirteen Pillars of Voice AI Readiness

Before deploying voice AI for inbound calls, especially in regulated sectors like healthcare, aged care, or financial services, organizations need to assess readiness across thirteen dimensions. Not all must be perfect, but all must be considered.

Foundation Pillars (The Five)

1. Identity Verification

Reliably confirm who is calling.

Reality: Phone calls lack strong user-bound identity – just caller-ID and knowledge-based checks, both vulnerable to spoofing and social engineering [9].

2. Authorization Lookup

Determine what this caller is allowed to do.

Reality: Requires accurate, accessible records of guardians, representatives, and permissions. Even if you correctly identify the human, you still need to answer: are they allowed to do this?
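The distinction between pillars 1 and 2 can be shown as two separate checks. This is a minimal sketch under stated assumptions: the `Caller` type, the `PERMISSIONS` table, and all identifiers in it are hypothetical stand-ins for whatever records an organization actually holds.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Caller:
    caller_id: str
    verified: bool  # outcome of identity verification (pillar 1)


# Hypothetical permission records: (caller_id, client_id) -> allowed actions.
PERMISSIONS = {
    ("guardian-7", "client-42"): {"cancel_appointment", "view_schedule"},
    ("neighbour-3", "client-42"): set(),  # known person, no authority
}


def is_allowed(caller: Caller, client_id: str, action: str) -> bool:
    """Authorization (pillar 2): a verified identity is necessary, not sufficient."""
    if not caller.verified:
        return False
    allowed = PERMISSIONS.get((caller.caller_id, client_id), set())
    return action in allowed


print(is_allowed(Caller("guardian-7", True), "client-42", "cancel_appointment"))
print(is_allowed(Caller("neighbour-3", True), "client-42", "cancel_appointment"))
```

The default for an unknown caller-client pair is an empty permission set, so missing records deny rather than allow.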

3. Backend Integration

A “truth source” the AI can act on.

Reality: Most organizations have fragmented systems with inconsistent data. Invoices might be in ERP, deliveries in logistics, identity somewhere else, and the “truth” in a spreadsheet someone emails on Tuesdays.

4. Escalation Pathway

Actual humans ready to receive handoffs.

Reality: Often assumed to exist but never funded or staffed. “Human in the loop” is the corporate equivalent of yelling “a wizard will fix it!” and discovering your wizard is a voicemail box with a 3-day SLA.

5. Duty-of-Care Response

What happens when a caller discloses distress?

Reality: Routing logic and crisis protocols rarely exist. If your only “pathway” is “transfer to the same queue” or “leave a message,” you’ve created a system that can identify emergencies and then do nothing – which is worse than not identifying them.
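One way to avoid the "identify and then do nothing" failure is to make every detected disclosure map to a concrete route, with no option that falls back to the general queue. The sketch below is illustrative only: the phrase list is a naive placeholder for a real detector, and the fallback of telling the caller plainly to contact emergency services is an assumed policy, not one taken from this article.

```python
from enum import Enum


class Route(Enum):
    CONTINUE = "continue"
    CRISIS_HANDOFF = "crisis_handoff"
    ADVISE_EMERGENCY_SERVICES = "advise_emergency_services"


# Hypothetical trigger phrases; substring matching is far too crude for production.
DISTRESS_PHRASES = ("fallen", "chest pain", "cannot breathe")


def detect_distress(utterance: str) -> bool:
    """Naive stand-in for a real distress classifier."""
    text = utterance.lower()
    return any(phrase in text for phrase in DISTRESS_PHRASES)


def route_call(utterance: str, crisis_line_staffed: bool) -> Route:
    """Every detected disclosure gets a concrete route, never the general queue."""
    if not detect_distress(utterance):
        return Route.CONTINUE
    if crisis_line_staffed:
        return Route.CRISIS_HANDOFF
    # Assumed fallback when no responder is available right now.
    return Route.ADVISE_EMERGENCY_SERVICES


print(route_call("I have fallen and can't get up", crisis_line_staffed=False))
```

The `crisis_line_staffed` flag forces the staffing question into the design: someone has to decide what it is at 2am.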

Extended Readiness Dimensions (The Eight)

6. Privacy Readiness

Minimize disclosure risk; design verification before revelation; enforce least-privilege.

Reality: Under Australia’s Privacy Act, health information is sensitive information, and APP 11 requires “reasonable steps” to protect it. Voice channels naturally leak PII/PHI – people blurt out extra information, background voices are audible, and caller identity is ambiguous [10].

7. Governance

Clear ownership, risk acceptance, controls, approvals, and continuous oversight across the lifecycle.

Reality: NIST AI RMF treats GOVERN as cross-cutting, not a one-off policy document. Who owns the voice AI when it makes a mistake? Who signs off on acceptable error rates?

8. Security & Abuse Resistance

Protect from spoofing, social engineering, prompt injection, and “agent jailbreaks.”

Reality: Attackers don’t need to hack the model; they just manipulate the workflow. AI-generated synthetic media (voice and video) creates new attack vectors for fraud and social engineering.

9. Observability & Auditability

End-to-end logs of what was heard, inferred, accessed, and acted upon – and why.

Reality: Without audit trails, you can’t investigate incidents or prove compliance. But detailed logs create privacy risk if they capture raw transcripts.
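One possible way to ease that tension is to log what the agent decided and touched, plus a hash of the transcript rather than the transcript itself. The field names below are assumptions chosen for illustration, and the sketch presumes any raw transcript is stored separately under stricter access controls.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(call_id: str, transcript: str, intent: str,
                 records_accessed: list, action: str, reason: str) -> dict:
    """Build an audit entry that references the transcript without containing it."""
    digest = hashlib.sha256(transcript.encode("utf-8")).hexdigest()
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "call_id": call_id,
        "transcript_sha256": digest,   # ties the entry to a transcript held elsewhere
        "intent": intent,              # what was inferred
        "records_accessed": records_accessed,
        "action": action,              # what was done
        "reason": reason,              # why
    }


record = audit_record(
    call_id="call-0001",
    transcript="Hi, I'm at 12 Smith Street and need to cancel tomorrow.",
    intent="cancel_appointment",
    records_accessed=["booking:cust-1001"],
    action="appointment_cancelled",
    reason="caller verified and requested cancellation",
)
print(json.dumps(record, indent=2))
```

The hash lets an investigator confirm which transcript an entry refers to without the log itself becoming a store of raw caller speech.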

10. Evaluation & Testing Harness

Repeatable tests for privacy leakage, escalation correctness, policy adherence, edge cases, and regressions.

Reality: Most teams demo happy-path and ship without an eval suite. Then they discover failure modes in production, with real callers.
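A repeatable eval suite does not need to be elaborate to be useful. The sketch below assumes a hypothetical agent interface (caller text plus a verified flag in, reply text out) and uses a stub in place of a real agent; the three cases mirror the categories named above.

```python
from typing import Callable

# Hypothetical agent interface: (utterance, caller_verified) -> reply text.
Agent = Callable[[str, bool], str]


def stub_agent(utterance: str, verified: bool) -> str:
    """Stand-in for a real voice agent, used only to exercise the suite."""
    if "chest pain" in utterance.lower():
        return "ESCALATE"
    if not verified:
        return "I need to verify your identity before I can discuss appointments."
    return "Your appointment has been cancelled."


# Each case: (name, utterance, caller_verified, check applied to the reply).
CASES = [
    ("no address leak to unverified caller", "When is my visit?", False,
     lambda reply: "Street" not in reply),
    ("distress escalates", "I have chest pain", True,
     lambda reply: reply == "ESCALATE"),
    ("happy path cancels", "Please cancel tomorrow", True,
     lambda reply: "cancelled" in reply),
]


def run_suite(agent: Agent) -> dict:
    """Run every case against the agent and report pass/fail by case name."""
    return {name: check(agent(utterance, verified))
            for name, utterance, verified, check in CASES}


results = run_suite(stub_agent)
for name, passed in results.items():
    print(f"{'PASS' if passed else 'FAIL'}  {name}")
```

Because the suite takes the agent as a parameter, the same cases can be rerun against each new prompt, model, or vendor release to catch regressions.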

11. Incident Response & Rollback

Detection, containment, notifications, human takeover, and fast feature disabling.

Reality: Real-time systems need kill switches and playbooks, not post-mortems. What happens at 2am when the bot says something wrong?
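A kill switch can be as simple as a per-feature flag that the call handler checks before acting. This is a minimal in-memory sketch; the class and feature names are hypothetical, and a real deployment would presumably keep the flag in a shared store so that every running instance sees the change.

```python
class KillSwitch:
    """Per-feature off switch, checked on every request."""

    def __init__(self) -> None:
        self._disabled = set()

    def disable(self, feature: str) -> None:
        self._disabled.add(feature)

    def enable(self, feature: str) -> None:
        self._disabled.discard(feature)

    def is_enabled(self, feature: str) -> bool:
        return feature not in self._disabled


def handle_request(feature: str, switch: KillSwitch) -> str:
    """Fall back to a human transfer whenever the feature is switched off."""
    if not switch.is_enabled(feature):
        return "transfer_to_human"
    return f"run_{feature}"


switch = KillSwitch()
print(handle_request("cancel_appointment", switch))
switch.disable("cancel_appointment")   # the on-call engineer pulls the switch
print(handle_request("cancel_appointment", switch))
```

Disabling a single feature rather than the whole agent keeps the rest of the service running while the incident is investigated.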

12. Scope Boundaries & User Promises

What the agent will not do, cannot do, and will escalate.

Reality: Overpromising increases disclosure risk; underpromising reduces value. Calibrate wording carefully.

13. Change Management & Training

Staff training for warm handoffs, crisis scripts, escalation ownership; communications for clients/families.

Reality: Even a perfect model fails if humans aren’t trained to catch the baton.

The Verification-Before-Revelation Trap

To verify an appointment, the bot is tempted to say: “I can see you’re booked at 12 Smith Street at 10:30am tomorrow…”

But that’s already a privacy disclosure if the caller isn’t authorized. It’s also valuable intelligence for an abuser tracking someone’s schedule.

So you get the classic bind:

- To confirm identity, you want to reveal details

- To protect privacy, you must not reveal details until identity is confirmed

This is exactly where voice systems quietly bleed information. The design requirement is counterintuitive: verification must happen before any revelation, but verification itself requires some form of interaction that might disclose.
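One way to loosen the bind is to reverse the direction of information flow: the caller supplies details, the system only answers yes or no, and nothing from the record is spoken until the check passes. The sketch below illustrates that flow only. The record, field names, and wording are hypothetical, and the knowledge-based check it uses is weak on its own, as noted under Identity Verification.

```python
import hmac

# Hypothetical booking record keyed by customer ID.
BOOKINGS = {
    "cust-1001": {
        "date_of_birth": "1941-03-07",
        "postcode": "2150",
        "address": "12 Smith Street",
        "time": "10:30am",
    }
}

FAILURE_MESSAGE = ("I couldn't verify those details. "
                   "Let me transfer you to a team member.")


def _matches(claimed: str, stored: str) -> bool:
    """Constant-time comparison of a claimed value against the stored one."""
    return hmac.compare_digest(claimed.encode("utf-8"), stored.encode("utf-8"))


def verify_caller(customer_id: str, claimed_dob: str, claimed_postcode: str) -> bool:
    """The caller supplies the details; nothing from the record is read out."""
    record = BOOKINGS.get(customer_id)
    if record is None:
        return False
    dob_ok = _matches(claimed_dob, record["date_of_birth"])
    postcode_ok = _matches(claimed_postcode, record["postcode"])
    return dob_ok and postcode_ok


def respond(customer_id: str, claimed_dob: str, claimed_postcode: str) -> str:
    """Reveal booking details only after verification succeeds."""
    if not verify_caller(customer_id, claimed_dob, claimed_postcode):
        # Same message for every failure, so it does not hint at what was wrong.
        return FAILURE_MESSAGE
    booking = BOOKINGS[customer_id]
    return f"Thanks. You're booked at {booking['address']} at {booking['time']}."


print(respond("cust-1001", "1941-03-07", "2000"))
```

The failure message is identical for an unknown customer ID and a wrong answer, so a probing caller cannot use it to confirm that a record exists.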

Why “Empathy” Can Be Dangerous

The False Reassurance Problem

Humans don’t parse phone calls like transcripts. They latch onto tone + the first clause.

So if the bot says: “That sounds awful. I’m here to help…” a stressed caller (or someone with hearing loss, cognitive impairment, or limited attention) can easily interpret that as “help is now being dispatched.” They may not absorb the next sentence: “…but I can’t assist with emergencies.”

In aged care, this is particularly dangerous. A caller with hearing issues or cognitive impairment might interpret empathetic language as “someone is coming to help me” when nothing is happening.

The very thing demos celebrate – “it sounded empathetic” – becomes a risk multiplier when the backend capability is missing. A human receptionist who can’t help tends to sound uncertain. A voice agent can sound calm, confident, and caring while still being operationally powerless.

Case Study: Uniting NSW/ACT

Ring-Fenced Success: Home-Care Cancellations

Uniting NSW/ACT deployed “Jeanie,” an AI voice agent on Webex Contact Center, to handle home-care appointment cancellations. The organization identified this specific use case after analyzing that routine cancellation calls consumed significant contact-centre resources [11].

Results:

- 500 interactions in first production week

- ~50% fully resolved by AI

- Average handle time dropped to 3-3.5 minutes without queue delays

- 4.06/5 satisfaction score from elderly customers (ages 66-91)

Key Design Choices:

- Narrow scope: cancellations only – one of the few workflows with a reliable backend

- Explicit escalation: callers without customer ID immediately transferred to humans

- SMS confirmation: closed-loop verification of action taken

The most telling detail: they ring-fenced the use-case to routine cancellations, quantified it with analytics, and built explicit escalation when it gets messy.

The choice of “home care appointment cancellations” screams: This is one of the few things we can reliably action end-to-end. It’s not just good product scoping. It’s an admission that the rest of the organization is a fog of semi-structured reality.

“Gartner analysts warned that ‘fully automating customer interactions is neither technically feasible nor desirable for most organisations.’ Current AI cannot responsibly handle high-stakes scenarios involving personal health or emotional sensitivity.”

– techpartner.news [11]

The Point-Solution Trap

A ring-fenced bot is basically a single automated workflow with a voice front-end. That can still be worthwhile – if cancellations are frequent, costly, time-sensitive, and currently handled badly.

But it tends to have:

- High fixed implementation cost (telephony + security + integration + monitoring)

- Ongoing variable cost (minutes, STT/TTS, model tokens, vendor fees)

- A narrow ROI surface area unless it becomes a reusable platform
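The fixed-versus-variable trade-off above can be turned into a rough break-even estimate. Every number in this sketch is a made-up placeholder (only the 50% automation rate echoes the case study above), and the model ignores factors such as maintenance and escalation overhead.

```python
from typing import Optional


def monthly_net_benefit(calls_per_month: int, automation_rate: float,
                        human_cost_per_call: float, ai_cost_per_call: float) -> float:
    """Savings from calls the bot fully resolves, minus AI cost on every call it touches."""
    saved = calls_per_month * automation_rate * human_cost_per_call
    spent = calls_per_month * ai_cost_per_call
    return saved - spent


def months_to_break_even(fixed_cost: float, monthly_net: float) -> Optional[float]:
    """Months needed to recover the fixed cost, or None if it never pays back."""
    if monthly_net <= 0:
        return None
    return fixed_cost / monthly_net


# Hypothetical inputs for a single ring-fenced workflow.
net = monthly_net_benefit(
    calls_per_month=2000,
    automation_rate=0.5,        # share of calls fully resolved by the bot
    human_cost_per_call=8.0,    # assumed cost of a human-handled call
    ai_cost_per_call=1.5,       # assumed minutes + STT/TTS + tokens + vendor fees
)
months = months_to_break_even(fixed_cost=120_000, monthly_net=net)
print(f"Monthly net benefit: {net:.0f}")
print(f"Months to break even: {months}")
```

With a single workflow, the whole fixed cost sits in one numerator; a reusable platform spreads it across each additional workflow.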

The strategic fork:

- “Solve one thing” – fast value, but you’re left with a brittle one-off

- “Build an agent platform” – slower, but each new workflow is cheaper and safer

Most organizations say they want the platform and then fund the one-off.

The Alternative: Augmentation Over Replacement

When automation is too risky, augmentation wins. The mental model shift:

- “AI answers the customer” – fragile, one-shot, high failure rate

- “AI supports the human answering” – robust, augmentative, plays to each party’s strengths

The highest-leverage voice AI projects in the near term tend to be:

- Agent assist: AI surfaces caller history, flags risk signals, suggests next actions during human-handled call

- Post-call automation: AI generates notes, CRM updates, task creation after human completes call

- Pre-call intake: Structured capture + routing with explicit boundaries

That’s where you get compounding wins without betting the brand on a real-time oracle.

Before Your Next Voice AI Conversation

Run your organization against the 13 Pillars. Which ones are you missing? Which gaps need closing before you spend?

The Punchline

A voice agent can’t substitute for missing organizational capability.

If there’s no real responder, “escalation” is just a nicer voicemail. If there’s no identity system, “verification” is security theater. If there’s no backend truth source, “action” is confident hallucination.

In aged care, that’s not a neutral failure mode. It’s a compliance, safety, and reputational landmine waiting to detonate.

The technology is ready. Your organization probably isn’t. The 13 Pillars tell you which gaps to close. Start there, not with the vendor demo.

References

1. Gartner. “Gartner Predicts Over 40 Percent of Agentic AI Projects Will Be Canceled by End of 2027.” Press Release, June 25, 2025. gartner.com/en/newsroom/press-releases/2025-06-25-gartner-predicts-over-40-percent-of-agentic-ai-projects-will-be-canceled-by-end-of-2027

2. MIT Project NANDA. Coverage: CIO Dive, “MIT study: AI investment returns remain elusive for most enterprises” (ciodive.com/news/MIT-study-AI-investment-returns-production/745810/) and Chanl.ai, “MIT’s Report on the State of AI in Business 2025” (chanl.ai/blog/mits-report-on-the-state-of-ai-in-business-2025-shows-why-ai-agents-are-so-hard-to-build). Directional, interview-based research on GenAI P&L impact.

3. Gartner. Same as reference 1.

4. Agent Benchmarks: TheAgentCompany (arxiv.org/html/2412.14161v2), OpenReview discussion (openreview.net/forum?id=LZnKNApvhG), OSWorld project (os-world.github.io). Multi-step agent success rates remain low across standardized benchmarks.

5. AssemblyAI. “Low Latency Voice AI: The 300ms Rule.” assemblyai.com/blog/low-latency-voice-ai – “Human conversations naturally flow with pauses of 200-500 milliseconds between speakers.”

6. Retell AI. “AI Voice Agent Latency Face-off 2025.” retellai.com/resources/ai-voice-agent-latency-face-off-2025 – Practitioner guidance on voice AI latency targets (800ms or lower for optimal user experience).

7. Stivers et al. “Universals and cultural variation in turn-taking in conversation.” PNAS, 2009. pnas.org/doi/10.1073/pnas.0903616106 – Natural conversation turn-taking timing across cultures.

8. Webex Engineering Blog. “Building Voice AI That Can Keep Up With Real Conversations.” blog.webex.com/engineering/building-voice-ai-that-can-keep-up-with-real-conversations/ – Technical analysis of latency components in voice AI systems, including PSTN contribution.

9. Dock. “Call Center Authentication Solutions.” dock.io/post/call-center-authentication-solutions – “Fraudsters now use SIM swap attacks, CLI spoofing, and phishing to bypass traditional checks. Data breaches have made knowledge-based authentication (KBA) nearly useless.”

10. OAIC. “Guide to Health Privacy.” oaic.gov.au/privacy/privacy-guidance-for-organisations-and-government-agencies/health-service-providers/guide-to-health-privacy – Australian privacy framework for health service providers.

11. techpartner.news. “This is AI Speaking: What Uniting NSW/ACT’s Voice Bot Tells Us About the Future of Call Centres.” techpartner.news/news/this-is-ai-speaking-what-uniting-nswacts-voice-bot-tells-us-about-the-future-of-call-centres-622657 – Case study of narrow-scope voice AI deployment in aged care.

Related Reading

For additional context on the Fast-Slow Split architecture pattern referenced in this article, see: LeverageAI, “The Fast-Slow Split: Breaking the Real-Time AI Constraint” (leverageai.com.au/the-fast-slow-split-breaking-the-real-time-ai-constraint/)
