Getting Enterprise AI-Ready: Governance as Code, Not Committees

Why the enterprises going fastest with AI aren’t the ones with the loosest governance. They’re the ones that built governance into their infrastructure.

Scott Farrell · March 2026 · leverageai.com.au

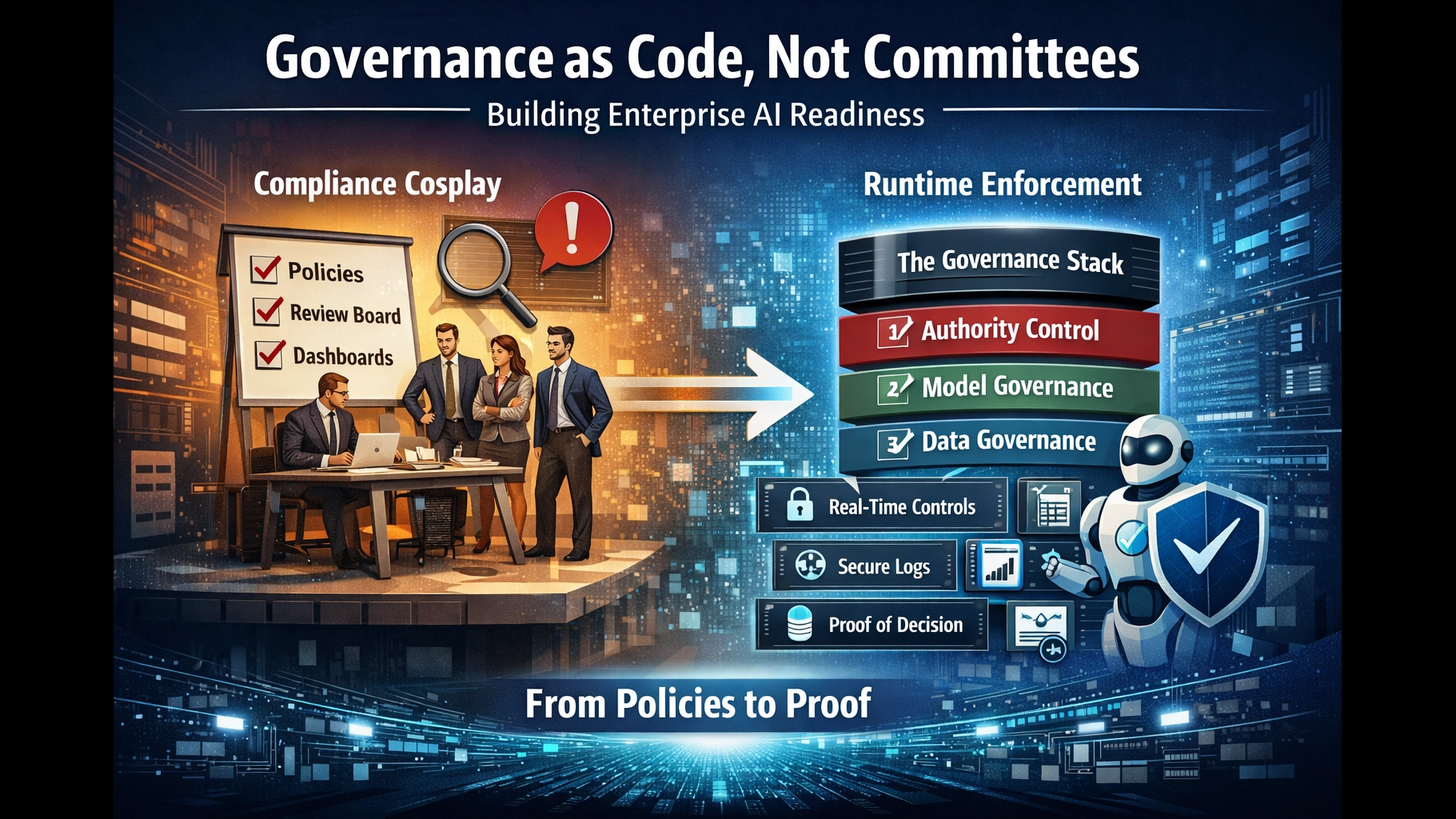

- Most enterprise AI governance is compliance cosplay: policies, committees, and dashboards that cannot prevent a single unauthorised AI action from executing.

- The governance stack has three layers. Most enterprises only built two. The missing layer — runtime authority infrastructure — is where governance works or fails.

- Organisations with comprehensive governance adopt agentic AI at nearly 4× the rate of those still developing policies. Governance is the accelerator, not the brake.

Imagine it’s a Tuesday morning. A regulator calls. An AI system your organisation deployed six months ago made a decision that affected 40,000 customers. The regulator asks three questions:

Who authorised this decision? Under what mandate? Where is the evidence?

If your governance consists of a policy document, a review board that meets monthly, and a monitoring dashboard that shows aggregate metrics, you cannot answer any of these questions from system evidence. You’ll need an investigation. Weeks of forensic reconstruction. Lawyers. And you still won’t be able to prove what happened at decision time, because nothing in your governance infrastructure was designed to capture it.

This is the state of enterprise AI governance in 2026. Nearly three-quarters of organisations plan to deploy autonomous AI agents within two years, yet only 21% report having the governance infrastructure to support them [1]. The gap is not a documentation problem. It is an infrastructure problem.

And the evidence now shows something counterintuitive: the enterprises going fastest with AI are not the ones with the loosest governance. They are the ones that built governance into their architecture.

Compliance Cosplay: What Passes for Enterprise AI Governance

Most enterprises believe they have AI governance. They have:

- AI usage policies — responsible AI principles, governance charters, acceptable use documents

- Review boards — committees that meet monthly or quarterly to approve or review AI initiatives

- Monitoring dashboards — observability tools that show aggregate performance metrics

- Post-hoc explanations — explainability features that reconstruct why a model made a given decision

Three-quarters of organisations have established these kinds of governance artefacts [2]. None of them are governance mechanisms. They are forensic artefacts: useful for understanding what happened after the fact, useless for preventing harm at the moment it matters.

This is compliance cosplay: governance that looks rigorous but has no runtime enforcement. It creates the appearance of control without the substance of it.

The UnitedHealth Test Case

UnitedHealth Group deployed an algorithm called nH Predict to manage Medicare Advantage claims. The system had everything a governance checklist could ask for: a stated policy that “AI does not make final coverage decisions,” audit logging, explainability features, and human oversight on paper [3].

According to the plaintiffs, when patients appealed the AI’s denials, human reviewers overturned them nine out of ten times. The governance artefacts were all present. The governance enforcement was absent. In February 2025, a US District Court ruled that plaintiffs could proceed with their class action, on claims that the company’s documented governance did not match what actually happened at decision time [3].

The policy said one thing. The system did another. No infrastructure existed to close the gap.

The Diagnostic

Ask three questions of your current AI governance. If you cannot answer yes to all three from system evidence — not from policy documents, not from meeting minutes, but from the system itself — what you have is compliance cosplay.

- Can the system prevent an unauthorised AI action from executing before it executes?

- Can you prove authority — who authorised this specific decision, under what mandate — from system evidence alone?

- Can you produce the evidence chain — what data was used, what policy applied, what outcome was produced — without launching an investigation?

Fifty-eight percent of enterprise leaders identify disconnected governance systems as the primary obstacle preventing them from scaling AI responsibly [4]. Gartner predicts that by 2027, 60% of organisations will fail to realise the expected value of their AI use cases due to incohesive governance frameworks [5].

The problem is not that enterprises lack governance intentions. It’s that they lack governance infrastructure.

The Governance Stack: Three Layers, and You Only Built Two

Enterprise AI governance is not one problem. It is three stacked problems with different maturity requirements:

| Layer | What It Governs | Core Question | Enterprise Maturity |
|---|---|---|---|
| Layer 1: Data Governance | What data is true enough to use? | Is this data accurate, complete, and authorised for this purpose? | Partial: most enterprises have some data governance |
| Layer 2: AI/Model Governance | What is the model allowed to do? | Has this model been tested, validated, and approved for this use case? | Emerging: policies exist, enforcement is weak |
| Layer 3: Authority Infrastructure | Who may act, right now, and where is the proof? | Can this specific action execute under this authority, and can we prove it? | Virtually absent in most enterprises |

Most enterprises have partial Layer 1 and emerging Layer 2. Virtually none have built Layer 3, the runtime authority infrastructure that answers the questions regulators actually ask [6].

This matters because the accountability question is never “Was the model accurate?” The question is always: Who accepted this decision? Under what mandate? With whose authority? Where is the evidence?

The EU AI Act, which reaches full enforcement for high-risk AI systems in August 2026, requires exactly this: risk assessment, logging of activity to ensure traceability, detailed documentation, and appropriate human oversight measures [7]. Singapore’s IMDA launched the world’s first governance framework specifically for agentic AI in January 2026, requiring organisations to “bound risks by design through controlling tool access, permissions, operational environments and the scope of actions” [8].

Both converge on the same requirement: governance that operates at runtime, not governance that documents after the fact.

Layer 3 is where the fork happens. You are either in the Explanation Paradigm — governance that explains decisions after execution — or the Constraint Paradigm — governance that constrains decisions before and during execution. These are not a spectrum. They require different infrastructure.

Architecture, Not Vibes: Why Prompt-Based Governance Fails

There is a persistent belief in enterprise AI that better prompts, better system instructions, and better model alignment will create trustworthy systems. The evidence says otherwise.

Reinforcement-learning “investigator agents” jailbroke Claude Sonnet 4 at 92%, GPT-5 at 78%, and Gemini 2.5 Pro at 90% on high-risk tasks. Emoji smuggling achieved 100% evasion against six production guardrail systems including Microsoft Azure Prompt Shield [9]. OWASP lists prompt injection as the number one risk in LLM applications: not an emerging concern, the top risk [10].

This is not a failure of implementation. It is a structural problem. AI has zero internal accountability pressure — no career consequences, no reputational risk, no fear of termination. The mechanisms that keep humans honest do not exist for AI systems. Prompts are manners. Architecture is physics. Physics wins.

The Developer Analogy

Enterprises already know how to govern untrusted actors who write and execute code. We do it with developers every day. We don’t trust developers either — we test their code, run CI/CD pipelines, require code review, enforce deployment gates, scope permissions, and audit every change.

AI governance should work exactly the same way. Not by making the AI more trustworthy, but by making misbehaviour structurally boring — contained, scoped, auditable, and reversible by design.

Engineering Consensus

This is not one vendor’s opinion. AWS Distinguished Engineer Marc Brooker published the architectural principle in January 2026:

“The right way to control what agents do is to put them in a box. The box is a strong, deterministic, exact layer of control outside the agent which limits which tools it can call, and what it can do with those tools.”

— Marc Brooker, AWS, January 2026 [11]

AWS AgentCore, Singapore’s IMDA framework, and the NIST AI Risk Management Framework all converge on the same architectural truth: enforce outside the model, scope per task, log everything, treat the agent as untrusted by default.

The enterprise framing is simple: “Can’t” beats “shouldn’t.” Architecture that structurally prevents an unauthorised action is categorically stronger than policy that says an action shouldn’t happen. One survives a regulator’s first question. The other doesn’t.
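To make the “box” concrete, here is a minimal Python sketch of a deterministic gate that sits outside the agent. It is an illustration under stated assumptions, not a reference design: the names `TaskScope`, `Gate`, `Denied`, `issue_refund`, and `delete_account` are hypothetical, and a real deployment would back the scope with an identity and policy service rather than in-memory objects.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass(frozen=True)
class TaskScope:
    """Permissions granted for one task. All names here are illustrative."""
    task_id: str
    allowed_tools: frozenset[str]
    max_refund_cents: int = 0


class Denied(Exception):
    """Raised when a tool call falls outside the task's scope."""


@dataclass
class Gate:
    """Deterministic control layer between the agent and its tools."""
    tools: dict[str, Callable[..., Any]]
    audit_log: list[dict] = field(default_factory=list)

    def call(self, scope: TaskScope, tool: str, **args: Any) -> Any:
        # Deny by default: the tool must be registered and in this task's scope.
        allowed = tool in scope.allowed_tools and tool in self.tools
        # Argument-level limit: an allowed tool still cannot exceed the mandate.
        if allowed and tool == "issue_refund":
            allowed = 0 < args.get("amount_cents", 0) <= scope.max_refund_cents
        # Record every attempt, allowed or not, before anything executes.
        self.audit_log.append(
            {"task": scope.task_id, "tool": tool, "args": args, "allowed": allowed}
        )
        if not allowed:
            raise Denied(f"{tool} is outside the scope of task {scope.task_id}")
        return self.tools[tool](**args)


def issue_refund(amount_cents: int) -> str:
    return f"refunded {amount_cents}"


def delete_account(account_id: str) -> str:
    return f"deleted {account_id}"


gate = Gate(tools={"issue_refund": issue_refund, "delete_account": delete_account})
scope = TaskScope(task_id="T-1", allowed_tools=frozenset({"issue_refund"}), max_refund_cents=5_000)

print(gate.call(scope, "issue_refund", amount_cents=2_500))
for tool, args in [("issue_refund", {"amount_cents": 50_000}), ("delete_account", {"account_id": "A9"})]:
    try:
        gate.call(scope, tool, **args)
    except Denied as exc:
        print("blocked:", exc)
```

Whatever the model was prompted or tricked into requesting, the oversized refund and the account deletion never reach a tool, and both attempts are on record.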

Proof-Carrying Decisions: From Logs to Receipts

The difference between compliance cosplay and governance infrastructure comes down to a single distinction: logs versus receipts.

A log is a stream of events. When something goes wrong, you reconstruct what probably happened by correlating timestamps across multiple systems. It’s forensic archaeology — time-consuming, uncertain, and often incomplete.

A receipt is a self-contained proof object. It carries its own evidence. When something goes wrong, you don’t investigate — you verify. The decision itself tells you who authorised it, what data was observed, what policy applied, and what outcome was produced.

The Decision Attestation Package

In governed AI systems, the final product is not the decision. The final product is the decision plus its attestation — a portable, self-verifying proof object that bundles:

1. Observation — what evidence was presented to the system (data hashes, source identifiers)

2. Judgement — what the AI concluded and why (extracted facts, micro-judgements, confidence signals)

3. Authority — who had the mandate to act and under what delegation (signed authority chain)

4. Policy — what rules were in force at execution time (policy version, gating decision)

5. Outcome — what actually happened (approve, deny, escalate, with specifics)

6. Seal — cryptographic binding that makes the package tamper-evident

This draws directly from software supply chain security. The SLSA framework (Supply Chain Levels for Software Artifacts) provides standardised, incremental security levels for proving how software was built and that it hasn’t been tampered with [12]. The same provenance discipline applies to AI decisions: prove who authorised, what data informed, what code executed, and what outcome was produced.

Most organisations are at SLSA Level 0 for decision provenance — no provenance at all. The practical target is Level 2: signed, tamper-evident, built on dedicated infrastructure. An incomplete package is infinitely better than none.
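The six components of the attestation package can be sketched in a few lines of Python. This is a simplified illustration, not a standard format: the field values are invented, and the seal here is an HMAC-SHA256 tag with a hard-coded demo key. HMAC is symmetric, so anyone who can verify can also forge; a package meant for an external auditor would more plausibly use an asymmetric signature with keys held in a KMS or HSM.

```python
import hashlib
import hmac
import json

# Hypothetical signing key, for demonstration only.
SIGNING_KEY = b"demo-key-not-for-production"


def canonical(obj: dict) -> bytes:
    """Serialise deterministically so the same content always yields the same bytes."""
    return json.dumps(obj, sort_keys=True, separators=(",", ":")).encode("utf-8")


def seal(package: dict) -> dict:
    """Return a copy of the package with an HMAC-SHA256 seal over its contents."""
    tag = hmac.new(SIGNING_KEY, canonical(package), hashlib.sha256).hexdigest()
    return {**package, "seal": tag}


def verify(sealed: dict) -> bool:
    """Recompute the seal from the package body and compare in constant time."""
    body = {k: v for k, v in sealed.items() if k != "seal"}
    expected = hmac.new(SIGNING_KEY, canonical(body), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, sealed.get("seal", ""))


evidence = b"claim form contents"
package = seal({
    "observation": {"source": "claims-db", "sha256": hashlib.sha256(evidence).hexdigest()},
    "judgement": {"conclusion": "eligible", "confidence": 0.93},
    "authority": {"delegated_by": "head-of-claims", "mandate": "refunds-under-500"},
    "policy": {"version": "2026-03-01", "gate": "passed"},
    "outcome": {"action": "approve", "amount": 120},
})

# Changing any field after sealing invalidates the seal.
tampered = {**package, "outcome": {"action": "approve", "amount": 12_000}}

print(verify(package))   # True
print(verify(tampered))  # False
```

The auditor’s job reduces to calling `verify` and reading the fields, which is the log-versus-receipt distinction in practice.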

“Can we reconstruct what probably happened from logs?” is a fundamentally different question from “Can this package verify itself?”

— The distinction between forensic archaeology and governance infrastructure

The auditor doesn’t investigate. They verify. That’s the difference between governance that survives regulatory scrutiny and governance that triggers it.

Governance as Software: Nightly Builds, Regression Tests, Rollback

Governance is not just an approval function. It is an operating discipline. And software engineering solved the operating discipline problem two decades ago.

Your AI recommendation engine is a production system that emits decisions. Treat it like one. The same discipline that transformed software from “ship and pray” to “ship with confidence” applies directly to AI decision systems [13].

The Parallel

| Software Engineering | AI Decision Governance |
|---|---|
| Nightly build | Overnight decision pipeline: batch process tomorrow’s recommendations tonight |
| Regression tests | Frozen inputs replayed against the current model: did the same case produce the same output? |
| Canary release | 5% of accounts get the new model/policy version; monitor before full rollout |
| Rollback | Revert to previous model, prompt, or policy version if quality drops |
| Diff report | What changed between yesterday’s decisions and today’s, and why |
| Version control | Every policy change is versioned, reviewed, and traceable |
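The regression-test and diff-report rows can be illustrated with a small Python sketch. Everything in it is hypothetical: the frozen cases, the debt-to-income rule standing in for a model, and the function names. The point is only the shape of the check, which replays accepted cases against whatever is about to ship.

```python
from typing import Callable

# Frozen cases: inputs captured once, with outputs that were reviewed and accepted.
GOLDEN_CASES = [
    {"id": "case-001", "input": {"income": 85_000, "debt": 10_000}, "expected": "approve"},
    {"id": "case-002", "input": {"income": 30_000, "debt": 40_000}, "expected": "deny"},
    {"id": "case-003", "input": {"income": 50_000, "debt": 24_000}, "expected": "escalate"},
]


def current_model(case: dict) -> str:
    """Stand-in for the deployed model and policy version (hypothetical rule)."""
    ratio = case["debt"] / case["income"]
    if ratio < 0.3:
        return "approve"
    return "deny" if ratio > 1.0 else "escalate"


def candidate_model(case: dict) -> str:
    """A proposed change that loosens the approval threshold."""
    ratio = case["debt"] / case["income"]
    if ratio < 0.5:
        return "approve"
    return "deny" if ratio > 1.0 else "escalate"


def replay(decide: Callable[[dict], str], cases: list[dict]) -> list[dict]:
    """Run every frozen input through a decision function and collect differences."""
    diffs = []
    for case in cases:
        actual = decide(case["input"])
        if actual != case["expected"]:
            diffs.append({"id": case["id"], "expected": case["expected"], "actual": actual})
    return diffs


print(replay(current_model, GOLDEN_CASES))    # []
print(replay(candidate_model, GOLDEN_CASES))  # case-003 flips from escalate to approve
```

A non-empty diff report is not automatically a failure. It is the prompt for a human to decide whether the change in behaviour was intended before the candidate goes to a canary.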

Policy as Source Code

Treat governance policies as source code. Compile them into versioned rule graphs. Run them through a CI/CD pipeline — tests, diffs, canaries, rollback. This prevents silent policy changes and makes governance auditable by construction.
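As a rough sketch of what “policy as source code” can look like, the Python below content-addresses a policy so that any change produces a new version identifier, and diffs two versions rule by rule. The rule names and values are invented for illustration, and a flat dictionary stands in for the compiled rule graph.

```python
import hashlib
import json


def policy_version(policy: dict) -> str:
    """Content-address the policy: any change to a rule changes the version id."""
    blob = json.dumps(policy, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(blob).hexdigest()[:12]


def policy_diff(old: dict, new: dict) -> dict:
    """List rules that were added, removed, or changed between two versions."""
    return {
        "added": sorted(new.keys() - old.keys()),
        "removed": sorted(old.keys() - new.keys()),
        "changed": sorted(k for k in old.keys() & new.keys() if old[k] != new[k]),
    }


# Hypothetical rules; in practice these would live in a reviewed repository.
policy_v1 = {"max_auto_refund": 500, "require_human_over": 2_000}
policy_v2 = {"max_auto_refund": 750, "require_human_over": 2_000, "block_sanctioned": True}

print(policy_version(policy_v1) != policy_version(policy_v2))  # True
print(policy_diff(policy_v1, policy_v2))
# {'added': ['block_sanctioned'], 'removed': [], 'changed': ['max_auto_refund']}
```

Recording the version identifier alongside each decision is what lets an auditor tie an outcome to the exact rules in force at execution time.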

The most successful AI teams share a counterintuitive approach: they embrace more governance, not less. Policy-as-Code embeds governance directly into development and deployment pipelines, strengthening trust and ensuring compliance at every stage [14].

Why This Matters: Silent Degradation

AI systems don’t fail with error screens. They fail silently. A landmark MIT study examining 32 datasets across four industries found that 91% of machine learning models experience degradation over time. When models are left unchanged for six months or longer, error rates jump 35% on new data [13].

Without nightly builds, regression tests, and drift detection, you won’t know your AI governance is failing until someone notices the outcomes have gone wrong. Capital One reported that implementing automated drift detection reduced unplanned retraining events by 73%, while decreasing the average cost per retraining cycle by 42% [13].
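One common way to automate drift detection is the Population Stability Index (PSI), which compares the distribution of a score today against a baseline. The article does not name a specific method, so treat this as one possible choice rather than the approach used in the cited studies. The sketch assumes scores in the range 0 to 1, and the 0.25 alert threshold is a widely used rule of thumb, not a universal constant.

```python
import math


def psi(baseline: list[float], current: list[float], bins: int = 10) -> float:
    """Population Stability Index between two samples of a score in [0, 1]."""

    def shares(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = min(int(v * bins), bins - 1)  # clamp 1.0 into the last bin
            counts[idx] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    p, q = shares(baseline), shares(current)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


# Synthetic data: a uniform baseline and a copy shifted upwards by 0.3.
baseline = [i / 100 for i in range(100)]
shifted = [min(v + 0.3, 0.99) for v in baseline]

ALERT_THRESHOLD = 0.25  # rule-of-thumb value; tune per system

print(psi(baseline, baseline))                   # 0.0
print(psi(baseline, shifted) > ALERT_THRESHOLD)  # True
```

Run nightly against the previous day’s decisions, a check like this turns silent degradation into an alert that can block a release or trigger a rollback.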

Once you call it a “nightly build,” you inherit twenty years of software hygiene for free. The discipline exists. The playbook exists. You just need to apply it to decisions instead of deployments.

The Enabling Paradox: Governance Makes AI Faster

Here is the data point that should reshape every enterprise AI governance conversation:

Governance maturity is the single strongest predictor of secure, scalable AI adoption [15]. Not model capability. Not budget. Not talent. Governance.

The pattern repeats across every major research house in 2026:

- PwC: CEOs with strong AI foundations, including responsible AI frameworks and technology environments, are three times more likely to report meaningful financial returns [16].

- Gartner: Organisations that deployed AI governance platforms are 3.4 times more likely to achieve high effectiveness in AI governance [17].

- Deloitte: “For agentic AI, governance and growth go hand in hand. In the AI era, governance is more than guardrails — it’s the catalyst for responsible growth.” [1]

- McKinsey: “Competitive advantage will depend on a sustained investment in fundamentals — organisations that build confidence through robust governance and controls will be better positioned to translate AI capabilities into performance gains.” [18]

The enabling paradox is this: governance designed as infrastructure does not slow AI adoption. It makes AI adoption possible. The enterprises stuck in pilot purgatory are not the ones with too much governance. They are the ones with the wrong kind of governance — committees instead of code, documents instead of infrastructure, explanation instead of constraint.

Build the Infrastructure Before You Ship the AI

The companies that treat AI governance as a Phase 2 problem pay $250,000+ to retrofit governance frameworks 18 months after deployment. The ones that build it in from Day 1 spend 60% less and deploy 40% faster [19].

The governance infrastructure an enterprise needs before it can run serious AI safely:

- Complete the governance stack. Don’t stop at data governance and model policies. Build the authority infrastructure — Layer 3 — that proves who may act, under what mandate, with what evidence.

- Move from explanation to constraint. Design governance that runs with the decision, not after it. In-path authority, gated execution, scoped permissions. “Can’t” beats “shouldn’t.”

- Make decisions carry their own proof. Decision attestation packages — even incomplete ones — are infinitely better than reconstructing what probably happened from logs.

- Operate governance like software. Nightly builds. Regression tests. Canary releases. Drift detection. Rollback. Version-controlled policies treated as source code.

The organisations that go fastest with AI will not be the ones with the loosest governance. They will be the ones with the best governance infrastructure — invisible, automatic, and proof-carrying. Governance as code, not committees.

That is what getting enterprise AI-ready actually means.

How does your enterprise AI governance score on the three-question diagnostic?


References

- [1] Deloitte. “State of AI in the Enterprise 2026.” Quote: “Nearly three quarters of organizations plan to deploy autonomous agents within two years, yet only 21% report having the proper governance in place.” deloitte.com/us/en/about/press-room/state-of-ai-report-2026.html

- [2] CSA + Google Cloud. “The State of AI Security and Governance.” December 2025. Quote: “Only 26% of organizations report having comprehensive AI security governance policies in place, with another 64% saying they have some guidelines or are still developing them.” cloud.google.com/resources/content/csa-the-state-of-ai-security-and-governance

- [3] LeverageAI / Scott Farrell. “Compliance Cosplay: Why AI Governance Without Runtime Authority Is Theatre.” February 2026, citing Healthcare Finance News and DLA Piper. Quote: “When patients appealed the AI’s denials, human reviewers overturned them nine out of ten times.” leverageai.com.au/compliance-cosplay-why-ai-governance-without-runtime-authority-is-theatre/

- [4] Aligne.ai. “The AI Governance Crisis Every Executive Must Address.” 2025, citing 2025 AI Governance Benchmark Report. Quote: “58% of leaders identify disconnected governance systems as the primary obstacle preventing them from scaling AI responsibly.” aligne.ai/blog-posts/the-ai-governance-crisis-every-executive-must-address-in-2025

- [5] Gartner for IT Leaders. LinkedIn post, 2025. Quote: “Gartner predicts that by 2027, 60% of organizations will fail to realize the expected value of their AI use cases due to incohesive ethical governance frameworks.” linkedin.com/posts/gartner-for-it-leaders_gartnerda-ai-data-activity-7364666422532141056-NzCh

- [6] LeverageAI / Scott Farrell. “The Governance Stack — Data Truth, Model Risk, and the Authority Layer Nobody Built.” February 2026. Quote: “75% have AI policies, only 36% have formal governance frameworks, virtually none can prove decision-time authority.” leverageai.com.au/the-governance-stack-data-truth-model-risk-and-the-authority-layer-nobody-built/

- [7] European Commission. “EU AI Act — Regulatory Framework for AI.” Quote: “High-risk AI systems are subject to strict obligations: adequate risk assessment, logging of activity to ensure traceability, detailed documentation, appropriate human oversight measures.” digital-strategy.ec.europa.eu/en/policies/regulatory-framework-ai

- [8] Baker McKenzie. “Singapore: Governance Framework for Agentic AI Launched.” January 2026. Quote: “The framework suggests bounding risks by design by limiting what agents can do through controlling their tool access, permissions, operational environments and the scope of actions.” bakermckenzie.com/en/insight/publications/2026/01/singapore-governance-framework-for-agentic-ai-launched

- [9] LeverageAI / Scott Farrell. “AI Doesn’t Fear Death: You Need Architecture Not Vibes for Trust.” February 2026, citing Transluce research (Sept 2025) and arXiv (April 2025). Quote: “Reinforcement-learning investigator agents jailbroke Claude Sonnet 4 at 92%, GPT-5 at 78%, and Gemini 2.5 Pro at 90%. Emoji smuggling achieved 100% evasion against six production guardrail systems.” leverageai.com.au/ai-doesnt-fear-death-you-need-architecture-not-vibes-for-trust/

- [10] OWASP. “LLM01:2025 Prompt Injection.” Quote: “Prompt injection is the #1 risk in LLM applications for 2025.” genai.owasp.org/llmrisk/llm01-prompt-injection/

- [11] Marc Brooker, AWS Distinguished Engineer. “Agent Safety is a Box.” January 2026. Quote: “The right way to control what agents do is to put them in a box. The box is a strong, deterministic, exact layer of control outside the agent.” brooker.co.za/blog/2026/01/12/agent-box.html

- [12] Practical DevSecOps. “SLSA Framework Guide 2026.” Quote: “SLSA is a security framework designed to prevent tampering, improve integrity, and secure software artifacts throughout the development and distribution process.” practical-devsecops.com/slsa-framework-guide-software-supply-chain-security/

- [13] LeverageAI / Scott Farrell. “Nightly AI Decision Builds: Backed by Software Engineering Practice.” January 2026, citing MIT study and Capital One. Quote: “91% of machine learning models experience degradation over time. Capital One: automated drift detection reduced unplanned retraining events by 73%.” leverageai.com.au/nightly-ai-decision-builds-backed-by-software-engineering-practice/

- [14] Ethyca. “Governing Enterprise Data & AI with Policy-as-Code.” September 2025. Quote: “The most successful AI teams embrace more governance, not less. Policy-as-Code embeds governance directly into development and deployment pipelines.” ethyca.com/news/how-to-govern-data-and-ai-with-a-policy-as-code-approach

- [15] CSA + Google Cloud / Unleash. “AI Governance Starts at Runtime.” February 2026. Quote: “Among organizations with comprehensive governance policies, 46% have already adopted agentic AI. Among those with policies still in development, only 12% have.” getunleash.io/blog/ai-governance-starts-at-runtime

- [16] PwC. “2026 Global CEO Survey.” January 2026. Quote: “CEOs whose organizations have established strong AI foundations are three times more likely to report meaningful financial returns.” pwc.com/gx/en/news-room/press-releases/2026/pwc-2026-global-ceo-survey.html

- [17] Gartner, survey of 360 organizations, Q2 2025, via MCP Manager. Quote: “Organizations that deployed AI governance platforms are 3.4 times more likely to achieve high effectiveness.” mcpmanager.ai/blog/ai-governance-statistics/

- [18] McKinsey. “AI’s Next Act.” January 2026, via LeverageAI AI Executive Brief. Quote: “Competitive advantage will depend on a sustained investment in fundamentals rather than incremental experimentation.” leverageai.com.au/the-ai-executive-brief-january-2026-what-big-consulting-is-saying/

- [19] BrainCuber. “AI Regulations 2026: What US Businesses Must Know.” Quote: “Companies that build governance in from Day 1 spend 60% less and deploy 40% faster.” braincuber.com/blog/ai-regulations-2026-what-us-businesses-need-to-know
