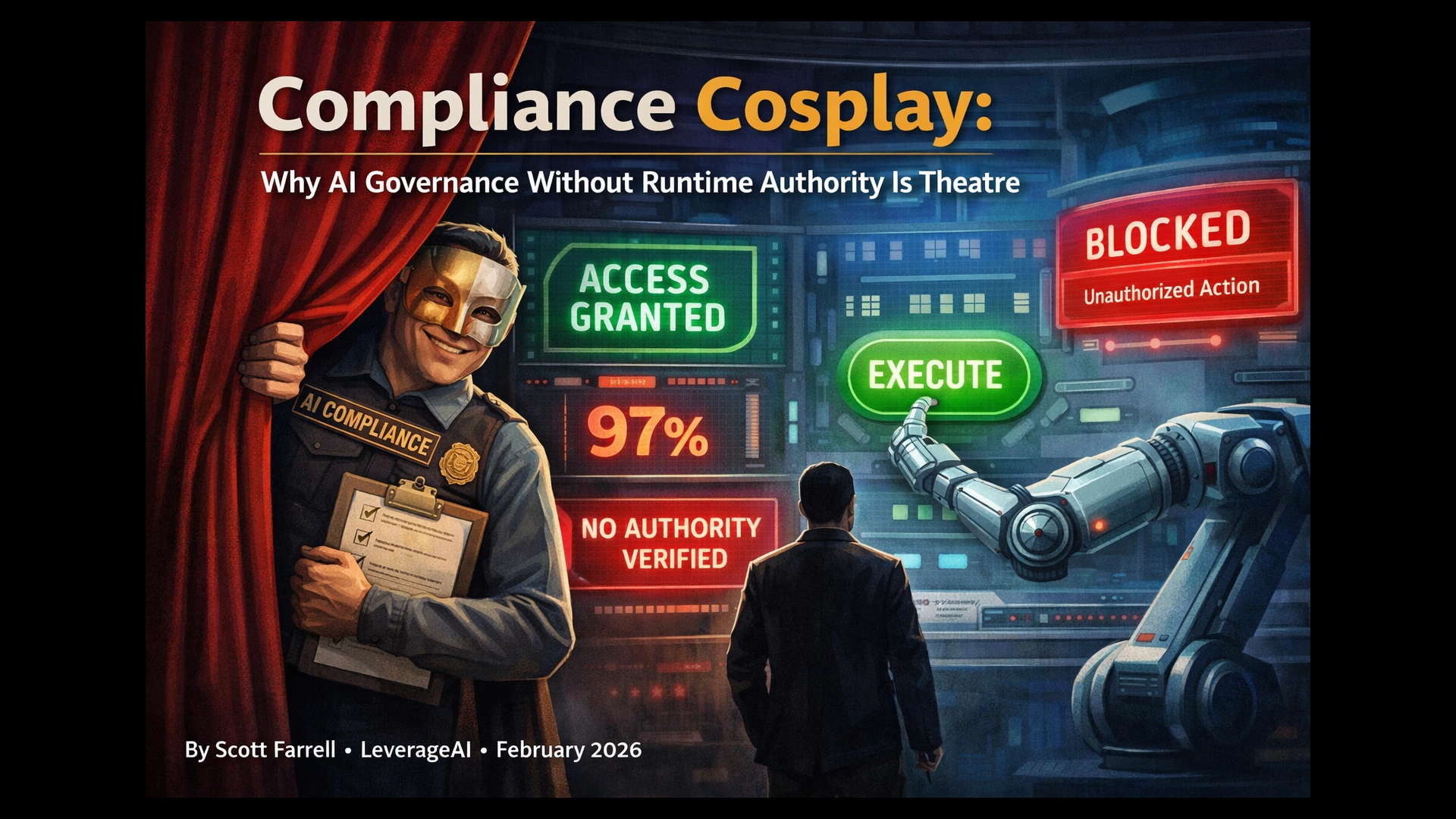

Compliance Cosplay: Why AI Governance Without Runtime Authority Is Theatre

Most AI governance fails at runtime. Not because policies are missing. Not because principles haven’t been documented. Because at the moment a decision executes, nothing technically prevents an unauthorised action from going through.

You have governance frameworks. You have monitoring dashboards. You have post-hoc audit logs and model explainability tools. And yet, if someone in your organisation asked right now — “who authorised this specific AI decision, under what mandate, with whose authority, at exactly that moment?” — most teams would reach for log files and start reconstructing.

That reconstruction exercise is forensic archaeology. It is not governance. And the gap between what organisations document and what they can actually enforce widens with every quarter in which autonomous AI systems proliferate without a runtime authority plane.

This is compliance cosplay. It looks right. It photographs well for auditors. And it cannot technically prevent a single unauthorised decision from executing.

The Governance Gap: Why Post-Hoc Always Fails Structurally

Here is the brutal version of where the industry sits. Only 7% of organisations have fully embedded AI governance despite 93% using AI in some capacity.1 Among organisations that experienced AI-related breaches, 97% had no proper AI access controls in place, despite having policies.2 Seventy per cent lack optimised governance, meaning they cannot demonstrate board-level risk reviews, automated monitoring, or policies updated after incidents.3

The governance gap is not primarily a policy problem. It is an enforcement problem. Organisations write policies and then discover they have no mechanism to make those policies binding at the point where it matters — execution.

The UnitedHealth nH Predict case makes this concrete. UnitedHealth deployed an AI system to guide Medicare Advantage claim decisions. The governance position was explicit: the AI guides but does not make final decisions. Humans retain authority. The policy was documented.

Then a STAT investigation, later cited in the lawsuit, reported that UnitedHealth set a goal for employees to keep patient rehabilitation stays within 1% of the length of stay predicted by nH Predict.4 Plaintiffs alleged the system was used “systematically and without proper oversight.”5 The metric that crystallised the failure: the algorithm reportedly had a 90% overturn rate on appeals, meaning that nine out of ten times a human reviewed the AI’s denial, they reversed it.6 The US District Court for the District of Minnesota allowed the putative class action to proceed, finding that the Medicare Act did not preempt breach of contract claims involving the alleged use of AI “in lieu of physicians.”7

What failed at UnitedHealth was not the absence of a policy. The policy existed. What failed was the absence of any structural mechanism that could enforce that policy at the point of decision — a gate that could look at a specific claim, a specific patient, a specific AI output, and ask: does authority exist to execute this action, right now, under the rules that govern this context?

Without that gate, the policy is a document. The log is a forensic artefact. The audit trail is evidence gathered after something has already happened. None of it governs. None of it constrains.

“If none of it is wired into a pre-execution authority gate, then at runtime the real rule is: ‘If the tool allows it and no one intervenes, it goes.’ From a regulator’s perspective, that’s governance in name only.”

— Patrick McFadden, Thinking OS (February 2026)8

The model explainability story compounds this. Financial services discovered early that model-agnostic explainability methods such as SHAP and LIME often provide insufficient detail for regulatory requirements.9 Meta’s researchers reported in a 2023 study that these techniques explained less than 40% of model behaviour for complex decisions.10 The Bank for International Settlements’ Financial Stability Institute, citing a 2025 IIF-EY survey, reports that explainability remains the top AI issue discussed between regulators and firms.11

And here is the problem with asking an AI to explain itself: if you send a complicated prompt with a large context of data, you cannot reliably ask the model why it made a decision. It may not even be able to tell you accurately which data it used. Self-reported explanations are not governance. They are vibes-based auditing.

This is the structural failure at the heart of post-hoc governance. Governance that runs after the decision is already too late. Governance that runs with the decision changes system behaviour. Those are not equivalent things.

Governance Has Gravity

There is a principle worth naming clearly: governance has gravity — it gets pulled to wherever execution happens.

Traditional IT systems execute deterministically. The code does what the code says. Because behaviour is predictable, governance can live upstream — in the SDLC, in change management processes, in pre-deployment reviews. You review the code before it ships. The code ships and does exactly what it was reviewed to do. Governance upstream is sufficient because determinism means nothing unexpected happens at runtime.

Real-time AI breaks this model at the foundation. An AI system does not execute a fixed function. It generates a response based on whatever context, data, and prompting it receives at that moment. The same model, the same policy document, the same system prompt — different context, different outcome. The decision space is not pre-auditable. You cannot review all possible decisions in advance because the decision depends on inputs that arrive at runtime.

Business users will push towards real-time AI. That is where the value is. That is exactly where traditional IT governance and AI governance diverge completely. And the divergence creates what might be called a governance arbitrage: organisations route governance through existing SDLC processes because that is where they know how to do it — and leave the runtime decision space ungoverned.

Gartner projects that by 2026, over 40% of enterprise workflows will involve autonomous agents.12 Every autonomous tool call by every agent is, under current governance frameworks, an ungoverned execution event. The agent calls a tool. The tool executes. Nobody checked, at the moment of execution, whether the authority existed. Nobody verified whether the data used was admissible. Nobody confirmed whether the risk threshold had been cleared.

Singapore’s Infocomm Media Development Authority published a voluntary agentic AI governance framework in January 2026, billed as the world’s first, explicitly acknowledging that agents’ “access to sensitive data and ability to make changes to their environment” raises a whole new profile of risks, and that “complex interactions among agents increase substantially the risk of outcomes becoming more unpredictable.”13 UC Berkeley’s Center for Long-Term Cybersecurity, publishing in February 2026, found that unique agentic challenges “complicate traditional, model-centric risk-management approaches and demand system-level governance.”14

The direction of travel is clear. Governance must follow execution. You cannot govern what you cannot constrain.

97% of organisations that experienced AI-related breaches had no proper AI access controls in place, despite having policies.2

The three weaknesses at the heart of this are structural, not accidental:

- Untraceable Authority. At the moment of decision, there is no cryptographic or deterministic record of who authorised the action, under what mandate, with whose delegated power. The authority is assumed, not verified.

- Incalculable Risk. Post-hoc monitoring cannot compute risk before execution. It can only measure what happened after. For irreversible decisions — a claim denial, a credit rejection, a patient discharge recommendation — that is too late.

- Irreversible Decisions. The most consequential AI decisions are also the ones that cannot be undone once executed. Post-hoc governance cannot un-execute a decision. It can only generate evidence about what went wrong.

Logs generated after execution are forensic artefacts. They are necessary. They are not governance mechanisms.

Zero Trust for Decisions

The architectural shift required here is not without precedent. It has already happened once, in network security, and the pattern is directly transferable.

In 2010, John Kindervag, then a principal analyst at Forrester Research, coined the term “zero trust.”16 His core assertion was simple and devastating: “Trust is a vulnerability.” Perimeter-based security assumed that anything inside the network could be trusted. The perimeter was the governance boundary. Get through the firewall and you were in.

The problem Kindervag named was identical in structure to the AI governance problem: the governance mechanism (the perimeter) was external to the resource it was supposed to protect. Once you were past it, nothing verified your authority at the point of access. Breaches did not require attacking the perimeter — they required compromising any node that had perimeter access. One credential, one misconfigured endpoint, and the “governance” of the perimeter became irrelevant.

Google’s BeyondCorp initiative, publicly documented from 2014, implemented the architectural alternative: replace perimeter-based trust with identity and device verification at every access point.15 NIST SP 800-207, published in 2020, codified zero trust architecture in federal guidance: no entity is inherently trusted, every access request is verified at the point of access, and every scope is enforced regardless of network location.16

Translated directly to AI governance, the principle is this: models don’t get to “do”; they only get to “suggest.” And every suggestion hits an enforcement boundary before execution.

The perimeter-to-zero-trust shift replaced “trust the network” with “verify at point of access.” The governance-to-enforcement shift replaces “trust the policy document” with “enforce at point of decision.” The structure of the problem and the structure of the solution are the same.

Current AI governance is perimeter-based. The policy lives outside the system. The principles document lives outside the system. The monitoring dashboard lives outside the system. And if you can get a decision past the perimeter — which is trivially easy because the perimeter is not technically integrated with execution — you are in. The system will execute. Nothing stops it.

Zero trust for decisions says: the LLM is a proposer, a drafter, a classifier. The enforcement gate is the arbiter. No proposal executes without passing through the gate. The gate is deterministic. The gate checks authority. The gate checks admissibility. The gate checks risk. The gate produces evidence. And the gate — not the model — decides whether execution proceeds.
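The proposer/arbiter split can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a reference implementation: `Proposal`, `Gate`, and the two check functions are hypothetical names, and each check simply returns `None` on a pass or a reason string on a failure. The structural point is that the model’s output is inert data, only the gate holds a reference to the executor, and the default is denial.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

# Hypothetical names and shapes throughout: a sketch, not a reference implementation.


@dataclass(frozen=True)
class Proposal:
    """What the model may produce: a suggestion, never an action."""
    action: str
    requester: str
    params: dict = field(default_factory=dict)


# Hypothetical convention: a check returns None on pass, or a reason string on failure.
Check = Callable[[Proposal], Optional[str]]


class Gate:
    """Deterministic arbiter. It is the only component holding the executor."""

    def __init__(self, checks: list[Check], executor: Callable[[Proposal], str]):
        self._checks = list(checks)
        self._executor = executor

    def submit(self, proposal: Proposal) -> dict:
        reasons = []
        for check in self._checks:
            reason = check(proposal)
            if reason is not None:
                reasons.append(reason)
        if not self._checks:
            # Zero trust: with nothing verified, nothing is trusted.
            reasons.append("no checks configured")
        if reasons:
            return {"outcome": "DENY", "reasons": reasons}
        return {"outcome": "ALLOW", "result": self._executor(proposal)}


# Two illustrative checks (hypothetical rules).
def authority_check(p: Proposal) -> Optional[str]:
    return None if p.requester == "claims-bot" else "requester lacks mandate"


def risk_check(p: Proposal) -> Optional[str]:
    return None if p.params.get("amount", 0) <= 1000 else "amount above automated limit"


gate = Gate([authority_check, risk_check], executor=lambda p: f"executed {p.action}")
print(gate.submit(Proposal("approve_claim", "claims-bot", {"amount": 500})))
# {'outcome': 'ALLOW', 'result': 'executed approve_claim'}
print(gate.submit(Proposal("approve_claim", "unknown-agent", {"amount": 5000})))
# {'outcome': 'DENY', 'reasons': ['requester lacks mandate', 'amount above automated limit']}
```

Running every check rather than stopping at the first failure is a deliberate choice in this sketch: a denial carries all of its reasons, which is what an auditor will ask for.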

“Policy says: ‘You shouldn’t do X’ (detection after the fact). Architecture says: ‘You can’t do X’ (prevention by design). Governance embedded in infrastructure doesn’t need checklists. The system is the compliance.”

— SiloOS framework, LeverageAI17

Can’t beats shouldn’t. Every time.

Financial services has understood a version of this for over a decade. SR 11-7, issued by the Federal Reserve in April 2011, defines a model as any “quantitative method, system, or approach that applies statistical, economic, financial, or mathematical theories, techniques, and assumptions to process input data into quantitative estimates” and expects such models to receive independent validation, ongoing monitoring, and governance with board-level oversight.18 In effect, it is a governance framework for probabilistic engines, and AI systems are probabilistic engines. The regulatory concept is not new, yet one industry estimate finds that only 44% of banks properly validate their AI tools under this supervisory guidance.19

If heavily regulated financial services, with more than a decade of SR 11-7 precedent, still has a 56% validation gap, the picture wherever AI governance lacks an equivalent supervisory regime should concern every CTO and CISO, particularly in regulated industries.

The Four Pillars of Decision Authority Infrastructure

What does runtime governance actually look like, not as a policy aspiration but as an architecture that runs with the decision?

The pattern is an In-Path Authority (IPA) gate — a deterministic, model-agnostic enforcement layer that sits between the AI’s output and system execution. The LLM is the proposer. The IPA is the enforcer. The LLM’s role is to propose, draft, classify, and reason. The IPA’s role is to enforce authority, check admissibility, assess risk, gate execution, and produce evidence. Those are distinct functions that must not be conflated.

If you give the model a giant blob of context and then ask for reasons, you are hoping it self-reports accurately. Sometimes it will. Sometimes it will confabulate. That is not governance. The model must produce a structured decision object that can only reference evidence identifiers — not freeform reasoning that may or may not accurately reflect what influenced the output.
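One way to hold the model to that contract is to parse its output strictly and reject anything that is not a well-formed decision object. The sketch below assumes a hypothetical JSON shape with exactly two keys, `decision` and `evidence_ids`, and a hypothetical decision vocabulary. Freeform prose fails at `json.loads`, an extra `reasoning` field fails the key check, and any citation outside the admissible bundle is rejected.

```python
import json

# Hypothetical contract: the model must return exactly these keys, nothing else.
REQUIRED_KEYS = {"decision", "evidence_ids"}
ALLOWED_DECISIONS = {"approve", "deny", "refer"}  # hypothetical vocabulary


def parse_decision(raw: str, bundle_ids: set[str]) -> dict:
    """Accept model output only if it is a decision object whose citations
    all point into the admissible evidence bundle; raise ValueError otherwise."""
    obj = json.loads(raw)  # freeform text raises json.JSONDecodeError, a ValueError subclass
    if not isinstance(obj, dict) or set(obj) != REQUIRED_KEYS:
        raise ValueError(f"expected exactly the keys {sorted(REQUIRED_KEYS)}")
    decision = obj["decision"]
    if not isinstance(decision, str) or decision not in ALLOWED_DECISIONS:
        raise ValueError(f"unknown decision {decision!r}")
    cited = obj["evidence_ids"]
    if not isinstance(cited, list) or not cited or not all(isinstance(i, str) for i in cited):
        raise ValueError("evidence_ids must be a non-empty list of strings")
    outside = set(cited) - bundle_ids
    if outside:
        raise ValueError(f"cites evidence outside the bundle: {sorted(outside)}")
    return obj


bundle = {"ev-001", "ev-002"}
print(parse_decision('{"decision": "refer", "evidence_ids": ["ev-002"]}', bundle))
# {'decision': 'refer', 'evidence_ids': ['ev-002']}

for bad in ['I would deny this claim because the patient seems well.',
            '{"decision": "deny", "evidence_ids": ["ev-999"]}',
            '{"decision": "deny", "evidence_ids": ["ev-001"], "reasoning": "..."}']:
    try:
        parse_decision(bad, bundle)
    except ValueError as err:
        print("rejected:", err)
```

This only constrains what the model may cite. Whether the cited evidence actually supports the decision remains a question for the gate and, where it pauses, for a human.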

Decision Authority Infrastructure rests on four pillars, each of which must be enforced at decision time, not documented after it.

Pillar 1: Admissible Knowledge

What is allowed to matter in this decision? The IPA assembles a curated evidence bundle — a signed set of artefacts with identifiers, hashes, source references, and timestamps — that defines the complete and verifiable input set for a given decision. The model does not get access to a vast uncontrolled context. It gets access to the admissible evidence bundle for this decision type, in this context, under these rules. If a data source is not in the bundle, it cannot influence the decision. This makes the evidence deterministic and bounded rather than probabilistic and unbounded.
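A minimal sketch of assembling such a bundle follows, assuming artefacts arrive as `(id, source, retrieved_at, content)` tuples and that admissibility is decided by a per-decision-type allow-list of sources. HMAC-SHA256 with a shared key stands in for the signature to keep the example self-contained; a production system would more plausibly use asymmetric signatures with keys held in a KMS or HSM. All names, sources, and the key are hypothetical.

```python
import hashlib
import hmac
import json


def _canonical(obj) -> bytes:
    # Deterministic serialisation, so the same bundle always yields the same bytes.
    return json.dumps(obj, sort_keys=True, separators=(",", ":")).encode("utf-8")


def build_bundle(decision_type, artefacts, admissible_sources, key: bytes) -> dict:
    """artefacts: iterable of (artefact_id, source, retrieved_at, content_bytes)."""
    items = []
    for artefact_id, source, retrieved_at, content in artefacts:
        if source not in admissible_sources:
            continue  # inadmissible sources never enter the bundle
        items.append({
            "id": artefact_id,
            "source": source,
            "retrieved_at": retrieved_at,
            "sha256": hashlib.sha256(content).hexdigest(),
        })
    body = {"decision_type": decision_type, "items": items}
    signature = hmac.new(key, _canonical(body), hashlib.sha256).hexdigest()
    return {"body": body, "signature": signature}


def verify_bundle(bundle: dict, key: bytes) -> bool:
    expected = hmac.new(key, _canonical(bundle["body"]), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, bundle["signature"])


key = b"demo-key-not-for-production"  # hypothetical key
bundle = build_bundle(
    "rehab_stay_review",  # hypothetical decision type
    [
        ("ev-001", "care-plan-db", "2026-02-01T09:00:00Z", b"Care plan v3"),
        ("ev-002", "policy-terms", "2026-02-01T09:00:05Z", b"Policy section 4.2"),
        ("ev-003", "web-search", "2026-02-01T09:00:07Z", b"Forum post"),
    ],
    admissible_sources={"care-plan-db", "policy-terms"},
    key=key,
)
print([item["id"] for item in bundle["body"]["items"]])  # ['ev-001', 'ev-002']
print(verify_bundle(bundle, key))  # True
bundle["body"]["items"][0]["sha256"] = "0" * 64  # tamper with one artefact hash
print(verify_bundle(bundle, key))  # False
```

The per-artefact hashes let a consumer check, at read time, that the content the model sees is the content the bundle vouches for.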

Pillar 2: Fixed Authority

Who has the right to decide, verified before execution? Authority is not assumed from the fact that the system accepted the request. It is verified by the IPA at decision time — checking that the action falls within the delegated mandate of the requesting entity, that the authority has not expired or been revoked, and that the scope of the action is within the boundary of what has been authorised. This is the AI equivalent of the BeyondCorp principle: identity and scope verification at every access point, not trust by default.
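The authority check can be sketched as a pure function over delegated mandates. The `Mandate` shape, the revocation set, and the use of an amount ceiling as the measure of scope are all assumptions for illustration; a real system would likely pull these from an identity or entitlement service. Passing `now` in explicitly keeps the check deterministic and testable.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class Mandate:
    """A delegated grant of authority (hypothetical shape)."""
    mandate_id: str
    holder: str
    delegated_by: str
    actions: frozenset
    max_amount: float
    expires_at: datetime


def verify_authority(holder, action, amount, mandates, revoked, now) -> tuple[Optional[str], str]:
    """Return (mandate_id, reason); mandate_id is None when no live mandate covers the request."""
    reasons = []
    for m in mandates:
        if m.holder != holder:
            continue
        if m.mandate_id in revoked:
            reasons.append(f"{m.mandate_id} revoked")
        elif now >= m.expires_at:
            reasons.append(f"{m.mandate_id} expired")
        elif action not in m.actions:
            reasons.append(f"{m.mandate_id} does not cover {action}")
        elif amount > m.max_amount:
            reasons.append(f"{m.mandate_id} limit {m.max_amount} below {amount}")
        else:
            return m.mandate_id, f"covered by {m.mandate_id}, delegated by {m.delegated_by}"
    return None, "; ".join(reasons) or f"no mandate held by {holder}"


m = Mandate("M-17", "claims-bot", "head-of-claims", frozenset({"approve_claim"}), 1000.0,
            datetime(2026, 6, 30, tzinfo=timezone.utc))
now = datetime(2026, 3, 1, tzinfo=timezone.utc)
print(verify_authority("claims-bot", "approve_claim", 500, [m], set(), now))
# ('M-17', 'covered by M-17, delegated by head-of-claims')
print(verify_authority("claims-bot", "approve_claim", 500, [m], {"M-17"}, now))
# (None, 'M-17 revoked')
```

Returning the delegating party alongside the mandate is what later lets a receipt answer “with whose delegated power?”, not just “was it permitted?”.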

Pillar 3: Gated Execution

ALLOW, PAUSE, or DENY before execution. The IPA enforces a three-outcome gate at decision time. ALLOW proceeds to execution when authority is verified, the evidence is admissible, and risk falls within thresholds. PAUSE routes to human review when parameters fall outside automated approval bounds: high stakes, irreversibility, ambiguous authority. DENY blocks execution when authority is missing, evidence is inadmissible, or the risk threshold is exceeded. This is not a soft guardrail. It is a hard execution constraint. The LLM cannot bypass it because the IPA controls execution, not the LLM.
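The three-outcome gate reduces to a small, ordered decision function. This sketch assumes upstream checks have already produced an authority status, an admissibility flag, a risk score in [0, 1], and an irreversibility flag; the thresholds are hypothetical placeholders that would come from policy rather than code. The ordering is the design choice that matters: every DENY condition is evaluated before any PAUSE condition, so a human reviewer is never asked to approve something the gate should have refused outright.

```python
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    ALLOW = "ALLOW"
    PAUSE = "PAUSE"
    DENY = "DENY"


@dataclass(frozen=True)
class GateInput:
    authority: str            # "verified", "ambiguous", or anything else (treated as missing)
    evidence_admissible: bool
    risk_score: float         # 0.0 benign .. 1.0 severe; how it is computed is out of scope
    irreversible: bool


# Hypothetical thresholds; in practice set by policy, per decision type.
AUTO_APPROVE_MAX_RISK = 0.3
HARD_DENY_MIN_RISK = 0.8


def decide(x: GateInput) -> tuple[Outcome, str]:
    # DENY first: nothing later in the function can override these.
    if x.authority not in ("verified", "ambiguous"):
        return Outcome.DENY, "authority missing"
    if not x.evidence_admissible:
        return Outcome.DENY, "inadmissible evidence"
    if x.risk_score >= HARD_DENY_MIN_RISK:
        return Outcome.DENY, "risk threshold exceeded"
    # PAUSE: outside automated approval bounds, so route to human review.
    if x.authority == "ambiguous":
        return Outcome.PAUSE, "ambiguous authority"
    if x.irreversible:
        return Outcome.PAUSE, "irreversible action"
    if x.risk_score > AUTO_APPROVE_MAX_RISK:
        return Outcome.PAUSE, "risk above automated approval bound"
    return Outcome.ALLOW, "within authorised envelope"


print(decide(GateInput("verified", True, 0.1, False)))  # (<Outcome.ALLOW: 'ALLOW'>, 'within authorised envelope')
print(decide(GateInput("verified", True, 0.1, True)))   # (<Outcome.PAUSE: 'PAUSE'>, 'irreversible action')
print(decide(GateInput("missing", True, 0.1, False)))   # (<Outcome.DENY: 'DENY'>, 'authority missing')
```

Treating any unrecognised authority status as missing keeps the function default-deny even if an upstream component starts emitting a value nobody anticipated.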

Pillar 4: Provable Evidence

Decision trace produced by design, not investigation. Every decision that passes through the IPA produces a signed receipt: what ran, with what evidence bundle, under what authority, at what time, with what outcome. This is not a log generated for possible future use. It is a governance artefact generated as a mandatory output of every decision execution. When someone asks “who authorised this decision?” the answer is built in. No forensic archaeology required. No reconstruction from fragmented logs. The evidence exists as a first-class artefact produced by the infrastructure itself.
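A signed receipt can be as simple as a canonical record plus a MAC over it. In this sketch the field names are hypothetical, HMAC-SHA256 stands in for a proper signature scheme, and `bundle_sha256` is assumed to be a digest of the evidence bundle used for the decision. The intent is that the gate calls `issue_receipt` on every outcome (ALLOW, PAUSE, or DENY), so the record exists before anyone asks for it.

```python
import hashlib
import hmac
import json


def _canonical(obj) -> bytes:
    # Deterministic serialisation, so verification recomputes identical bytes.
    return json.dumps(obj, sort_keys=True, separators=(",", ":")).encode("utf-8")


def issue_receipt(*, decision_id, action, bundle_sha256, mandate_id, outcome,
                  decided_at, key: bytes) -> dict:
    """Record what ran, on what evidence, under what authority, when, and with what outcome."""
    body = {
        "decision_id": decision_id,
        "action": action,
        "evidence_bundle_sha256": bundle_sha256,
        "mandate_id": mandate_id,
        "outcome": outcome,
        "decided_at": decided_at,
    }
    return {"body": body, "mac": hmac.new(key, _canonical(body), hashlib.sha256).hexdigest()}


def verify_receipt(receipt: dict, key: bytes) -> bool:
    expected = hmac.new(key, _canonical(receipt["body"]), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, receipt["mac"])


key = b"demo-key-not-for-production"  # hypothetical key
receipt = issue_receipt(
    decision_id="d-20260301-0042",  # hypothetical identifiers throughout
    action="approve_claim",
    bundle_sha256=hashlib.sha256(b"example bundle").hexdigest(),
    mandate_id="M-17",
    outcome="ALLOW",
    decided_at="2026-03-01T10:15:00Z",
    key=key,
)
print(verify_receipt(receipt, key))  # True
receipt["body"]["outcome"] = "DENY"  # an after-the-fact edit...
print(verify_receipt(receipt, key))  # False: ...breaks the MAC
```

One caveat on the design: with a shared HMAC key, anyone who can verify a receipt can also forge one. That is why an asymmetric scheme, where only the gate holds the signing key, is the more natural fit for receipts that auditors or regulators will check.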

A useful external cross-check comes from an independent practitioner. Patrick McFadden at Thinking OS (February 2026) arrived from first principles at a closely related three-layer structure: Propose (intelligence layer), Commit (authority gate), Remember (audit trail).8 His observation: “Most organizations are pouring effort into #1 and #3. The next wave of scrutiny — from boards, regulators, and insurers — is going to land squarely on #2: ‘Who actually had the authority to let this action execute, and where is the record that proves it?’”8

The Commit layer is the IPA. Almost nobody clearly owns it. That is the gap.

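The four pillars map directly onto the three structural weaknesses named earlier. Fixed Authority resolves Untraceable Authority by verifying the mandate before execution, and Provable Evidence records that verification in a signed receipt. Admissible Knowledge and Gated Execution resolve Incalculable Risk: a bounded evidence bundle gives the gate a defined input set, so risk is assessed before execution rather than measured afterwards. Gated Execution also resolves Irreversible Decisions, because nothing executes without authority and the highest-stakes actions pause for human review. Together, the pillars convert forensic archaeology into by-design governance output.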

Related: Architecture, Not Vibes

The trust hierarchy in Architecture, Not Vibes maps directly to the governance problem: Level 1 (Vibes — policies, prompts, principles documents) is fragile. Level 2 (Monitoring — dashboards, observability) is reactive. Level 3 (Architecture — containment, scoping, structural enforcement) is the only level that produces structural guarantees. Compliance cosplay lives at Levels 1 and 2. Decision Authority Infrastructure operates at Level 3. The principle is the same: scope permissions, not behaviour. Define what AI can do, not what it should do. Enforce policy outside the LLM — deterministic gateways, not probabilistic prompts.

Related: Agent Provenance Stack

The four-layer provenance model in Agent Provenance Stack addresses the agent-platform version of this problem: Identity (who is making the request?), Intent (what are they allowed to ask for?), Artifact (can you trust what’s running?), Execution (can you prove what happened?). As that article notes: “Containment — what an agent can do — is advancing. Provenance — who authorised the action — is missing everywhere.” Decision Authority Infrastructure is the enterprise governance complement to provenance: the IPA pattern applies the same authority-verification logic to business decisions, not just agent tool calls.

From Explanation to Constraint: What Actually Changes

The distinction between explaining decisions and constraining decisions is not a subtle one. It determines whether governance is real or performed.

Explaining decisions means: after the system produces an output, we generate a description of what influenced it. We attribute factors. We weight features. We ask the model to account for itself. We review the log. This is useful for post-hoc investigation. It tells you what happened. It cannot change what happened. And as the evidence shows, it often cannot even accurately describe what happened: Meta’s research reported that purpose-built attribution methods such as SHAP and LIME explained less than 40% of model behaviour for complex decisions.10 A model’s self-reported account of its own reasoning comes with no verifiable guarantee at all.

Constraining decisions means: the system cannot produce certain outputs at all. Before execution proceeds, authority is verified, evidence admissibility is enforced, risk thresholds are cleared, and a signed execution receipt is produced. The decision space is bounded by the enforcement infrastructure, not by the model’s self-assessment of what it should or should not do.

Regulators are moving, slowly but unambiguously, towards constraint language. The EU AI Act’s Article 14 requires that oversight measures be “identified and built, when technically feasible, into the high-risk AI system by the provider,” not merely made available as post-hoc investigation tools.20 It requires that natural persons be enabled to “decide, in any particular situation, not to use the high-risk AI system or to otherwise disregard, override or reverse the output”: a structural capability, not an advisory permission.20 For remote biometric identification systems, Article 14 goes further: no action or decision may be taken on the basis of the system’s identification unless at least two natural persons have separately verified and confirmed it.20

Article 12 mandates logging — forensic readiness.21 Article 17 mandates a documented quality management system — process governance.22 Article 14 mandates structural human oversight built into the system itself. These are three different things. The Act requires all three. Most organisations are building only the first two.

Most of the EU AI Act’s obligations, including those for the high-risk systems listed in Annex III, apply from 2 August 2026, and non-compliance can draw penalties of up to €15 million or 3% of worldwide annual turnover, whichever is higher.23 The first major enforcement actions are expected to target high-risk systems in hiring or credit, and the first significant fine is expected to accelerate compliance globally.24

The governance direction from multiple converging sources — Singapore IMDA, UC Berkeley, the EU AI Act, SR 11-7’s extension to AI — is consistent: define action boundaries requiring human approval before execution, not human review after execution.13 Effective AI governance in 2026 will look much more like an operating model than a policy deck — “clearly defined boundaries for autonomous action, explicit escalation paths for human oversight, and transparent validation of AI models and decisions.”25

The most revealing test for any AI governance claim is this: can the system prevent the decision, or can it only describe it afterwards? If it can only describe, you have post-hoc explanation. If it can prevent, you have governance. The difference between those two things is not a matter of degree. It is a structural distinction that determines whether your organisation can answer “who authorised this?” with evidence, or with an investigation.

Governance that runs after the decision is already too late. Governance that runs with the decision changes system behaviour. Only one of those is governance.

The Architecture That Closes the Gap

Decision Authority Infrastructure is not another policy layer. It is an execution constraint. The architecture is model-agnostic, which means it does not depend on which LLM you are running. It is non-autonomous, which means the IPA gate does not itself make decisions — it enforces the decision authority of those who have it. It is authority-aware, which means it checks delegated mandates, not just permissions. And it is irreversibility-sensitive, which means it applies the most stringent gating to the decisions that cannot be undone.

The operational effect is the resolution of the three contradictions that governance theatre produces:

Governance rigour versus deployment speed. Runtime governance infrastructure enables faster autonomous operation, not slower. A highway with structural guardrails is faster than a highway with a human flagging every car. When authority is verified by structure, decisions within the authorised envelope proceed without committee review. Only decisions that fall outside the envelope pause for human assessment. Structural governance is a throughput enabler, not a throughput blocker.

AI autonomy versus human accountability. Decision Authority Infrastructure resolves this by making accountability a property of the architecture, not a property of the human org chart. The system produces provable evidence of authority at decision time. Accountability is not a retroactive investigation — it is a structural output. The question “who is accountable?” has a deterministic answer because the architecture produces that answer as a mandatory artefact of every decision.

Real-time execution versus governance depth. Runtime governance runs with the decision, not after it. Policy-as-code implementations can reportedly enforce governance checks in parallel with inference requests with sub-millisecond latency impact.26 On that evidence, the performance argument against runtime governance is largely answered. The remaining argument is architectural: organisations have not built the IPA layer, not because it is impossible, but because they have not prioritised it over the easier path of documentation and monitoring.
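Part of what makes that latency claim plausible is that many checks do not depend on the model’s output at all. The sketch below uses only `asyncio` from the standard library and assumes a hypothetical `run_inference` stand-in for a model call. It runs the output-independent checks (requester authority, bundle verification) concurrently with inference; only the check that needs the proposal itself runs afterwards. The sleep durations are placeholders, not measurements.

```python
import asyncio


async def run_inference(request: dict) -> dict:
    """Hypothetical stand-in for a model call that returns a proposal."""
    await asyncio.sleep(0.05)
    return {"action": "approve_claim", "amount": request["amount"]}


async def precheck(request: dict) -> list[str]:
    """Checks that do not depend on the model's output, so they can overlap with it."""
    await asyncio.sleep(0.001)  # placeholder for, e.g., a mandate lookup
    reasons = []
    if request["requester"] != "claims-bot":
        reasons.append("requester lacks mandate")
    if not request["bundle_verified"]:
        reasons.append("evidence bundle failed verification")
    return reasons


def postcheck(proposal: dict, limit: float = 1000) -> list[str]:
    """Checks that need the proposal itself must run after inference."""
    return [] if proposal["amount"] <= limit else ["amount above automated limit"]


async def governed_decision(request: dict) -> tuple[str, object]:
    # gather() runs both coroutines concurrently and returns results in argument order.
    proposal, pre_reasons = await asyncio.gather(run_inference(request), precheck(request))
    reasons = pre_reasons + postcheck(proposal)
    return ("DENY", reasons) if reasons else ("ALLOW", proposal)


print(asyncio.run(governed_decision(
    {"requester": "claims-bot", "amount": 500, "bundle_verified": True})))
# ('ALLOW', {'action': 'approve_claim', 'amount': 500})
```

Under this split, the governance cost added to the critical path is only the post-inference check, since the pre-checks finish while the model is still working.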

The biggest risk in AI is not the model. It is the authority we give it without verifying that the authority existed.

What To Do With This

The framework is not theoretical. Zero trust networking was still a theory when Kindervag named it; Google proved it in production. SR 11-7 made probabilistic engine governance operational in financial services over a decade ago. The IPA pattern (LLM as proposer, deterministic gate as enforcer) is implementable with current technology. Amazon Bedrock AgentCore demonstrates deterministic policy enforcement at the gateway for AI agents.27 Policy-as-code frameworks applied to AI deployment pipelines extend this further.26

What is missing, in most organisations deploying AI-assisted decisions in regulated industries, is the commitment to build the enforcement layer rather than the documentation layer. The commitment to treat governance as infrastructure rather than compliance artefact. The commitment to ask — before the first regulatory inquiry, before the first audit failure — whether the governance documented can actually prevent what it claims to govern.

If you are working with AI systems where decisions cross legal, operational, or ethical boundaries, the diagnostic question is simple: what technically prevents an unauthorised decision from executing right now?

If you need to think about it, you have your answer.

The next article in this series examines how Decision Authority Infrastructure connects to the decision decomposition problem — how to break AI-assisted decisions into separately governable units, each with its own evidence bundle and execution gate, so that governance is tractable rather than theoretical.

Scott Farrell is the founder of LeverageAI, where he works with regulated-industry organisations on AI architecture and governance infrastructure. Prior articles: Architecture, Not Vibes · Agent Provenance Stack · The Simplicity Inversion

References

- [1] Knostic. “The 20 Biggest AI Governance Statistics and Trends of 2025.” knostic.ai/blog/ai-governance-statistics (November 2025). “Only 7% of Organizations Have Fully Embedded AI Governance, Despite 93% Using AI in Some Capacity. Trustmarque’s 2025 AI Governance Report reveals that governance maturity remains minimal, with fewer than one in ten organizations integrating AI risk and compliance reviews directly into development pipelines.”

- [2] Knostic. “The 20 Biggest AI Governance Statistics and Trends of 2025.” knostic.ai/blog/ai-governance-statistics (November 2025). “97% of Organizations in AI-Related Breaches Lacked Proper Access Controls. According to IBM’s 2025 Cost of a Data Breach Report, 13% of organizations reported breaches involving AI models or applications. Among these, 97% said they had no proper AI access controls in place.”

- [3] Acuvity. “2025: The Year AI Security Became Non-Negotiable.” acuvity.ai/2025-the-year-ai-security-became-non-negotiable/. “Acuvity’s research found 70% of organizations lack optimized AI governance — meaning they can’t demonstrate board-level risk reviews, automated monitoring, or policies updated after incidents.”

- [4] Healthcare Finance News. “Class action lawsuit against UnitedHealth AI claim denials advances.” healthcarefinancenews.com/news/class-action-lawsuit-against-unitedhealth-ai-claim-denials-advances. “A STAT investigation, which was cited in the lawsuit, suggests UnitedHealth pressured employees to use the algorithm to issue payment denials to those on Medicare Advantage plans, setting a goal for employees to keep patient rehabilitation stays within 1% of the length of stay predicted by nH Predict.”

- [5] HH Law. “The Lokken v. UHC Discovery Battle and What It Means for Insurers Using AI.” hh-law.com/blogs/insurance-coverage-and-bad-faith/the-lokken-v-uhc-discovery-battle-and-what-it-means-for-insurers-using-ai/. “Plaintiffs allege that nH Predict is used systematically and without proper oversight, but UnitedHealth counters that such claims are speculative, asserting that the AI is not always used and does not make final coverage decisions.”

- [6] LeverageAI. “Nightly AI Decision Builds.” leverageai.com.au/wp-content/media/Nightly_AI_Decision_Builds.html. “The nH Predict algorithm had a 90% error rate on appeals — 9 out of 10 times a human reviewed the AI’s denial, they overturned it.”

- [7] DLA Piper. “Lawsuit over AI usage by Medicare Advantage plans allowed to proceed.” dlapiper.com/en/insights/publications/ai-outlook/2025/lawsuit-over-ai-usage-by-medicare-advantage-plans-allowed-to-proceed (February 2025). “The US District Court for the District of Minnesota ruled that plaintiffs may proceed in a putative class action lawsuit… the court found that the Medicare Act did not preempt the breach of contract and breach of implied covenant of good faith and fair dealing claims involving the alleged use of AI.”

- [8] McFadden, Patrick. “Decision Intelligence Has 3 Layers. Most Stacks Only Govern Two.” thinkingoperatingsystem.com/decision-intelligence-has-3-layers-most-stacks-only-govern-two (February 2026). “If none of it is wired into a pre-execution authority gate, then at runtime the real rule is: ‘If the tool allows it and no one intervenes, it goes.’ From a regulator’s perspective, that’s governance in name only.” and “Most organizations are pouring effort into #1 and #3. The next wave of scrutiny — from boards, regulators, and insurers — is going to land squarely on #2.”

- [9] RAGN World. “The 2025 Responsible AI Governance Landscape: From Principles to Practice.” aigl.blog/content/files/2026/02/THE-2025-RESPONSIBLE-AI-GOVERNANCE-LANDSCAPE-FROM-PRINCIPLES-TO-PRACTICE.pdf. “Financial services discovered that model-agnostic explainability methods (SHAP, LIME) often provide insufficient detail for regulatory requirements, driving investment in inherently interpretable models.”

- [10] Nitor Infotech. “Explainable AI in 2025: Navigating Trust and Agency in a Dynamic Landscape.” nitorinfotech.com/blog/explainable-ai-in-2025-navigating-trust-and-agency-in-a-dynamic-landscape/. “As Meta’s researchers demonstrated in their 2023 study ‘Beyond Post-hoc Explanations,’ these techniques explained less than 40% of model behavior for complex decisions.”

- [11] Bank for International Settlements, Financial Stability Institute. “Managing Explanations: How Regulators Can Address AI Explainability.” bis.org/fsi/fsipapers24.pdf (2025). “IIF-EY (2025) indicates that the top issue discussed between regulators and firms in relation to AI is explainability/the black box nature of certain AI algorithms.”

- [12] Forbes. “40% Of Workflows Will Run On Agentic AI. Where’s The Identity?” forbes.com/sites/digital-assets/2026/02/13/40-of-workflows-will-run-on-agentic-ai-wheres-the-identity/ (February 2026). “Gartner projects that by 2026, over 40% of enterprise workflows will involve autonomous agents.”

- [13] Coletta, Giovanni. “Agentic AI Governance Frameworks 2026: Risks, Oversight, and Emerging Standards.” medium.com/@giovannicoletta/agentic-ai-governance-frameworks-2026-risks-oversight-and-emerging-standards-c15bf2e6efca (February 2026). “Singapore’s Infocomm Media Development Authority published the world’s first Agentic AI governance framework… the (voluntary) framework acknowledges that the agents’ ‘access to sensitive data and ability to make changes to their environment’ raises a whole new profile of risks.”

- [14] Coletta, Giovanni. “Agentic AI Governance Frameworks 2026: Risks, Oversight, and Emerging Standards.” medium.com/@giovannicoletta/agentic-ai-governance-frameworks-2026-risks-oversight-and-emerging-standards-c15bf2e6efca (February 2026). “UC Berkeley’s Center for Long-Term Cybersecurity published the Agentic AI Risk-Management Standards Profile… unique challenges ‘complicate traditional, model-centric risk-management approaches and demand system-level governance’.”

- [15] IBM. “The Evolution of Zero Trust and the Frameworks that Guide It.” ibm.com/think/insights/the-evolution-of-zero-trust-and-the-frameworks-that-guide-it. “Zero trust began in the ‘BeyondCorp’ initiative developed by Google in 2010… In 2014, Forrester Research analyst John Kindervag coined the concept zero trust to describe this new security paradigm in a report titled ‘The Zero Trust Model of Information Security.’ He proposed a new security model that assumes no one — whether inside or outside the organization’s network — can be trusted without verification.”

- [16] NIST Blog. “Zero Trust Cybersecurity: ‘Never Trust, Always Verify’.” nist.gov/blogs/taking-measure/zero-trust-cybersecurity-never-trust-always-verify. “In 2010, cybersecurity expert John Kindervag coined the phrase ‘zero trust’… zero trust assumes that the system will be breached and designs security as if there is no perimeter. Hence, don’t trust anything by default.” Also: NIST SP 800-207, csrc.nist.gov/pubs/sp/800/207/final

- [17] LeverageAI. “SiloOS.” leverageai.com.au/wp-content/media/SiloOS.html. “Policy says: ‘You shouldn’t do X’ (detection after the fact). Architecture says: ‘You can’t do X’ (prevention by design). Governance embedded in infrastructure doesn’t need checklists. The system is the compliance.”

- [18] Federal Reserve. “SR 11-7: Guidance on Model Risk Management.” federalreserve.gov/supervisionreg/srletters/sr1107.htm (4 April 2011). “Banking organizations should be attentive to the possible adverse consequences (including financial loss) of decisions based on models that are incorrect or misused, and should address those consequences through active model risk management.”

- [19]ArticSledge. “Model Risk Management Guide 2025.” articsledge.com/post/model-risk-management — “SR 11-7 requires banks to have three main things: 1. Robust Model Development… 2. Effective Validation… 3. Sound Governance… Only 44% of banks properly validate their artificial intelligence tools, creating massive blind spots.”

- [20]EU AI Act. “Article 14: Human Oversight.” artificialintelligenceact.eu/article/14/ (In force since 1 August 2024; high-risk obligations apply from 2 August 2026) — “High-risk AI systems shall be designed and developed in such a way, including with appropriate human-machine interface tools, that they can be effectively overseen by natural persons during the period in which they are in use… measures identified and built, when technically feasible, into the high-risk AI system by the provider before it is placed on the market.”

- [21]EU AI Act. “Article 12: Record-Keeping.” artificialintelligenceact.eu/article/12/ (In force since 1 August 2024; high-risk obligations apply from 2 August 2026) — “High-risk AI systems shall technically allow for the automatic recording of events (logs) over the lifetime of the system.”

- [22]EU AI Act. “Article 17: Quality Management System.” artificialintelligenceact.eu/article/17/ (In force since 1 August 2024; high-risk obligations apply from 2 August 2026) — “Providers of high-risk AI systems shall put a quality management system in place that ensures compliance with this Regulation. That system shall be documented in a systematic and orderly manner in the form of written policies, procedures and instructions.”

- [23]LeverageAI. “Nightly AI Decision Builds.” leverageai.com.au/wp-content/media/Nightly_AI_Decision_Builds.html — “AI Act enforcement begins August 2026 requiring machine-readable marking of AI-generated content; penalties reach €15M or 3% of worldwide turnover.”

- [24]RAGN World. “The 2025 Responsible AI Governance Landscape: From Principles to Practice.” aigl.blog/content/files/2026/02/THE-2025-RESPONSIBLE-AI-GOVERNANCE-LANDSCAPE-FROM-PRINCIPLES-TO-PRACTICE.pdf — “The First Major EU AI Act Enforcement Action: Likely target: High-risk system in hiring or credit. Expected fine: €10-€30 million range. Likely impact: Immediate compliance acceleration across similar systems globally.”

- [25]Redwood. “AI And Automation Trends 2026: From Efficiency To Enterprise.” redwood.com/article/ai-automation-trends/ (2026) — “In 2026, effective AI governance will look much more like an operating model. This means clearly defined boundaries for autonomous action, explicit escalation paths for human oversight and transparent validation of AI models and decisions.”

- [26]Ethyca. “Governing Enterprise Data & AI with Policy-as-Code.” ethyca.com/news/how-to-govern-data-and-ai-with-a-policy-as-code-approach (September 2025) — “Implementing Policy-as-Code requires technical infrastructure that translates governance requirements into executable constraints… Advanced platforms implement governance checks in parallel with inference requests, ensuring sub-millisecond latency impact.”

- [27]LeverageAI. “Architecture, Not Vibes.” leverageai.com.au/wp-content/media/AI_Doesnt_Fear_Death_You_Need_Architecture_Not_Vibes_For_Trust_ebook.html — “AWS AgentCore: deterministic policy enforcement at the gateway. The AI literally cannot exceed its scope.” Also: AWS AgentCore Policy documentation, docs.aws.amazon.com/bedrock/latest/userguide/agentcore-policy.html
